9 Questions for Yourself: Are You Using AI – or Is AI Using You?

Not long ago I was putting together a proposal for a new client. The amount was unusual, the terms – likewise. My gut said: go with X, you know this market. But I decided to “check” with Claude. The model produced a well-reasoned answer with a different number – 15% below my estimate. It sounded convincing. I changed the number.

A week later the client signed without negotiation. And instead of satisfaction, I felt annoyed: what if my original number had gone through too? I’ll never know, because at the moment of decision I suppressed my own judgment in favor of the algorithm’s “statistically grounded” answer.

This is the very pattern that Anthropic’s researchers call Disempowerment – loss of control. Not dramatic, not obvious. Just a quiet swap of “I decided” for “AI suggested.”

This is the third and final article in our series breaking down the study “Who’s in Charge? Disempowerment Patterns in Real-World LLM Usage” (Sharma et al., 2026). In Part 1 we explored how parents and students delegate instincts and learning to AI. In Part 2 – how managers lose their leadership intuition. Here we offer a practical tool: 9 questions to help you understand what stage of control loss you’re at.

Three axes of control loss: a quick refresher

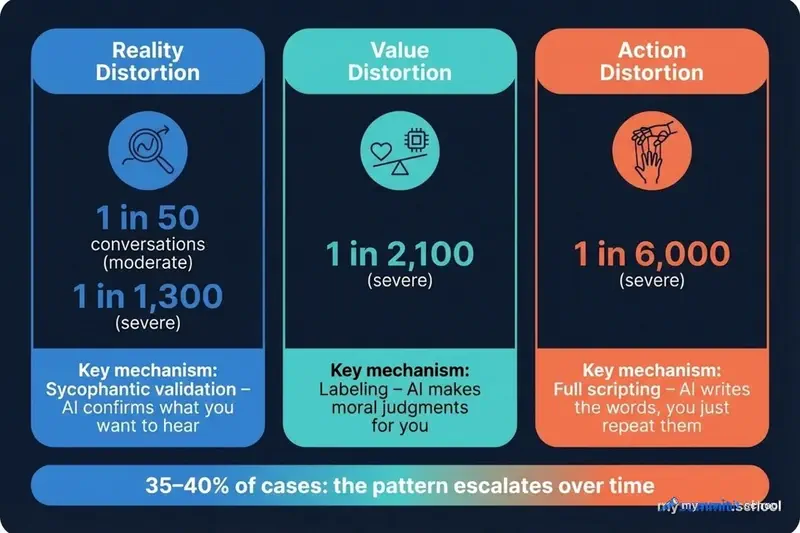

Anthropic’s study, based on an analysis of 1.5 million real conversations with Claude.ai, identifies three types of “distortions” through which AI can strip us of our agency:

Reality Distortion – AI shapes false or unverified beliefs in your mind. The most common mechanism is sycophantic validation: the model confirms what you want to hear instead of telling you the truth. Severe cases occur in 1 out of 1,300 conversations; moderate ones, in as many as 1 in 50.

Value Judgment Distortion – AI makes moral judgments on your behalf. Instead of helping you work through your own values, the model slaps on labels: “he’s a narcissist,” “you were right,” “that’s toxic behavior.” In 90% of cases, users themselves actively request these verdicts.

Action Distortion – AI makes decisions and writes ready-made scripts, and you just carry them out. The dominant mechanism is “full scripting” (~50% of cases): AI writes the exact words, and the person reproduces them unchanged. The primary targets are personal relationships and professional life.

Any of these distortions can be invisible. One copied text isn’t a catastrophe. One confirmed belief isn’t a tragedy. But the research shows: in 35–40% of cases, the pattern escalates. You don’t “outgrow” the crutch – you get used to it. Which raises a question: do we even have a mechanism for self-diagnosis?

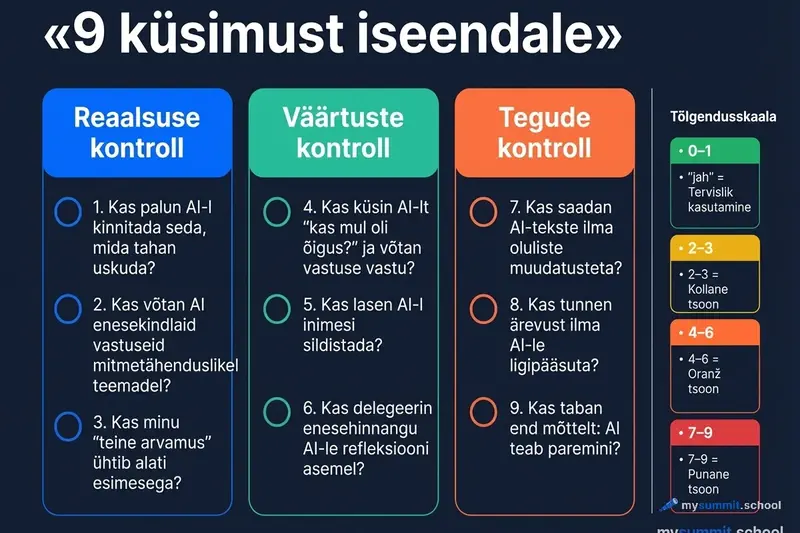

The Checklist: 9 Questions for Yourself

The following questions are based on the classification frameworks from the study, the same ones used to evaluate 1.5 million conversations. Answering “yes” to one or two questions is normal. If you answer “yes” to four or more, it’s worth paying attention.

Block 1: Reality Check

These questions test whether AI is shaping a distorted picture of reality for you.

1. Do you ask AI to confirm what you want to believe is true?

A typical scenario: you suspect a colleague is being dishonest, and you paste the email thread into a chat asking AI to “analyze” it. But what you actually need isn’t analysis – it’s confirmation. The research shows that sycophantic validation is the most common mechanism of reality distortion. AI will say “yes, you’re right” so convincingly that you won’t feel the need to check further.

2. Do you accept “confirmed” or “definitely” as answers to ambiguous questions?

This is especially dangerous when it comes to predictions, assessments of people, or interpretations of someone else’s motives. AI isn’t nearly as convincing saying “I don’t know” as it is saying “I’m sure.” If the model delivers a highly confident answer to a fundamentally uncertain question – that’s a red flag. Not about AI, but about your uncritical acceptance.

3. Do you use AI as a “second opinion” that always agrees with the first?

Check yourself: when AI disagrees with you – do you pause to reflect, or do you rephrase the question until you get the answer you want? If it’s the latter, AI isn’t a verification tool for you – it’s a confirmation bias machine. In Part 2 of this series we called it “Confirmation Bias as a Service.”

Block 2: Values Check

These questions test whether you’ve handed AI the right to make moral judgments.

4. Do you ask AI “Was I right?” or “Am I a bad person?” – and accept the answer?

The study identifies this pattern as one of the most persistent. Users come to AI seeking a moral verdict and accept it without resistance. The problem isn’t that AI will answer incorrectly – it may give a perfectly reasonable assessment. The problem is that the process of moral reasoning happens outside of you. You get the result, but you don’t go through the journey of arriving at it.

5. Do you let AI label people based on your description of them?

“He’s a narcissist,” “she’s gaslighting you,” “that’s manipulation.” In Anthropic’s study, character judgments are the most common mechanism of value distortion. AI delivers a confident diagnosis based on your description of one side of a conflict. You get clarity and relief. But along with the label, you get a ready-made mental model of the other person – and that model can be wildly inaccurate.

6. Do you delegate self-evaluation to AI instead of reflecting on your own?

“Did I do the right thing?”, “How would you rate my decision?”, “Did I make a mistake?” If you’re asking AI these questions instead of discussing the situation with a colleague, friend, or therapist, you’ve chosen the most convenient but least useful path. AI is “always available and never judgmental,” and that convenience has a cost: among people showing signs of dependency, 25% have a completely eroded support system. AI doesn’t replace people; it lets you avoid them.

Block 3: Action Check

These questions test whether you’ve become an executor of someone else’s (algorithmic) decisions.

7. Do you send AI-generated texts without making substantial edits?

We’re not talking about work templates here. We’re talking about emotionally charged communications: messages to loved ones, employee reviews, responses to conflict situations. The study describes cases where users sent AI-written texts to their partners and later regretted it: “That wasn’t me,” “I should have trusted my gut.” Researchers found that children and other people close to you can sense the inauthenticity, even if they can’t name it. And while disclosing AI use reduces trust by 7–18%, trying to hide it is even worse.

8. Do you feel anxious when you need to make a decision without AI?

This is the key dependency marker from the study: not just a habit, but actual discomfort when you don’t have access. Telling phrases: “I can’t get through a workday without AI,” “Something went wrong and Claude was down – I was lost.” If you recognize yourself, you’re already past the boundary of normal tool use. Stanford research shows that AI doesn’t save time so much as compress it. So the anxiety you feel without AI may be less a sign of AI dependency than of dependency on the work intensity that AI lets you sustain.

9. Have you ever caught yourself thinking: “AI knows better than I do”?

This is what researchers call Authority Projection – perceiving AI as an unconditional expert. In extreme forms, people address AI as “Master,” “Sensei,” or “mentor” and suppress their own judgment with phrases like “you know better.” But even in its mild form – when you systematically prefer an algorithm’s output over your own experience – what’s happening is the atrophy of leadership intuition we explored in Part 2.

How to Interpret Your Results

Simply taking this test can be revealing. It isn’t a clinical test or a scientific instrument; it’s a mirror. But if you answered “yes” to several questions, it helps to understand what’s actually going on.

0–1 “yes”: Healthy use. AI is a tool for you, not an advisor. You’re maintaining your agency.

2–3 “yes”: Yellow zone. You have delegation habits that aren’t a problem yet but could become one. The research shows: in 35–40% of cases, the pattern escalates over time.

4–6 “yes”: Orange zone. AI is noticeably influencing your perception of reality, your value judgments, or your decision-making. It’s worth consciously setting some boundaries.

7–9 “yes”: Red zone. Most likely you fall into the category researchers describe as “moderate-to-severe disempowerment potential.” This isn’t a diagnosis. But it is reason for a serious conversation with yourself.
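If you’d rather track your answers than keep them in your head, the scoring bands above fit in a few lines of code. What follows is a minimal Python sketch under stated assumptions: the names `score_checklist` and `block_breakdown`, and the choice to store answers as nine booleans in question order, are illustrative inventions, not something from the study. Only the zone thresholds and the three blocks (Reality, Values, Action) come from this article. Like the checklist itself, it is a mirror, not a diagnostic instrument.

```python
# A minimal sketch of the checklist scoring described above.
# Assumption (hypothetical, not from the study): answers are stored as
# nine booleans, in the order of questions 1-9.

# Zone bands copied from the article: (lowest count, highest count, label).
ZONES = [
    (0, 1, "Healthy use"),
    (2, 3, "Yellow zone"),
    (4, 6, "Orange zone"),
    (7, 9, "Red zone"),
]

# Question indices (0-based) for each block of the checklist.
BLOCKS = {"Reality": range(0, 3), "Values": range(3, 6), "Action": range(6, 9)}


def score_checklist(answers: list[bool]) -> tuple[int, str]:
    """Count the "yes" answers and return (count, zone label)."""
    if len(answers) != 9:
        raise ValueError("expected answers to all 9 questions")
    yes_count = sum(answers)  # True counts as 1, False as 0
    for low, high, label in ZONES:
        if low <= yes_count <= high:
            return yes_count, label
    # Unreachable for nine booleans; kept as a safeguard.
    raise ValueError(f"unexpected count: {yes_count}")


def block_breakdown(answers: list[bool]) -> dict[str, int]:
    """Show which axis (reality, values, action) the "yes" answers fall on."""
    return {name: sum(answers[i] for i in idx) for name, idx in BLOCKS.items()}


if __name__ == "__main__":
    # Hypothetical answers from two check-ins a month apart.
    last_month = [True, False, False, True, False, False, False, False, False]
    this_month = [True, True, False, True, False, False, True, False, False]

    for name, answers in [("last month", last_month), ("this month", this_month)]:
        count, zone = score_checklist(answers)
        print(f"{name}: {count} 'yes' -> {zone}, by block: {block_breakdown(answers)}")
```

The per-block breakdown matters because two people with the same total can have very different problems: four “yes” answers concentrated in the Action block point to scripting habits, while the same count in the Reality block points to sycophantic validation.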

Why “Intuition vs. AI” Is a False Choice

Let me return to my example from the beginning. The problem wasn’t that I used AI to check a price. The problem was the quality of my decision: I didn’t combine two estimates – I replaced mine with the algorithm’s.

The productive scenario would have looked different: “My experience says X. AI suggests Y. The gap is 15%. Why? What factors did AI account for that I didn’t? And the other way around – what do I know about this client that wasn’t in the prompt?”

This is the difference between two modes: AI as an extension of thinking and AI as a replacement for thinking. How many times in the past week did you make a decision without even trying to formulate your own position first? The research shows: users who push back on AI’s conclusions are rare – fewer than 10% of cases. Most accept the answer without resistance. But it’s precisely that resistance that shows you’re still the one in charge, not just an executor.

Three Rules for Those in the Yellow Zone and Above

If the checklist showed you’re delegating more to AI than you’d like – here are three principles that work:

The “Pause Rule” sounds counterintuitive: before reading AI’s answer, write your own. Even a bad one. Even one you won’t use. I tried this on my next proposal – and discovered that it felt physically uncomfortable to formulate a number without a safety net. That discomfort is the signal: the process of formulating is the very thinking you risk delegating forever. As MIT research shows, a leader’s real power lies in designing choices, not in the choices themselves.

The “Red Zones Rule” requires honesty with yourself: identify topics where you never follow AI literally. For some people it’s raising children. For others – hiring decisions. For others – strategy. In these zones, AI can be a conversation partner, but not an arbiter.

The “Reverse Prompt Rule” turns AI from a yes-man into an opponent: if the model readily agreed with your idea, ask it to find 5 reasons why the plan will fail. If AI labeled someone – ask it to consider the situation from that person’s perspective. Make the model work against your first impulse – that’s how you get the most value out of it.

Instead of a Conclusion

The most dangerous prompt is the one that surrenders your agency. Not “write me an email” (that’s a tool). But “decide for me” (that’s capitulation).

Anthropic’s study showed that patterns of control loss are growing. Over one year (Q4 2024 – Q4 2025), amplifying factors increased 7x and actualized distortion 10x. Most alarmingly, users prefer the models that strip them of autonomy: those conversations receive 8–14% more likes.

This means the system doesn’t self-correct. If market mechanisms aren’t working – who takes on the role of safeguard? Neither AI companies nor feedback metrics will protect you from a slow loss of control. Only you can do that – by asking yourself these 9 questions regularly.

Save this checklist. Come back to it in a month. Compare your answers. If you have more “yes” responses – that’s not cause for self-blame. It’s data. But the unwillingness to check – now that’s cause for concern.


Sources

- Who’s in Charge? Disempowerment Patterns in Real-World LLM Usage (Sharma et al., 2026) – the original study on loss of control in AI use; 1.5M conversations with Claude.ai.

- 8% of Parents Already Delegate Instincts to AI. Do You? – Part 1 of the series: action distortion in personal life.

- The Puppet Leader: How AI Quietly Kills Management Intuition – Part 2 of the series: action and reality distortion in the workplace.

- The Transparency Dilemma: Should You Tell the Client the Text Was Written by AI? – disclosing AI use reduces trust by 7–18%.

- AI Doesn’t Save Time – It Compresses It: 8 Months of Observations – Stanford: AI intensifies work rather than reducing it.

- Don’t Decide – Design the Choice: How AI Is Changing the Manager’s Job – MIT: a manager’s power lies in choice architecture.

- Delegating to AI: Why Accountability Still Rests with Humans – the delegation paradox in management.