86% of Students Use AI, But Are Getting Worse. One Experiment Changed Everything

Traditional approaches to education are breaking down. AI writes essays and papers in minutes – and that has permanently changed the purpose of creative assignments in schools. Banning neural networks doesn’t work, and isolating students from technology is a dead end. The question is not whether to use AI. The question is how to use it so the technology develops students’ skills rather than replacing their thinking.

Two studies from 2025 point to a paradox. The first, an Education Week report, found that 86% of students use AI in their studies, yet half of them feel they’re losing their connection with teachers. You’d think the technology would help, but somehow things are going sideways.

The interesting part is that the problem isn’t AI itself. The second study, from the University of Illinois, found the opposite result: when AI was properly calibrated, 5 out of 6 students improved their writing. Let’s break down the difference between failure and success, and what schools that want to keep up with technology without sacrificing education quality should actually do.

What’s Happening in Schools Right Now

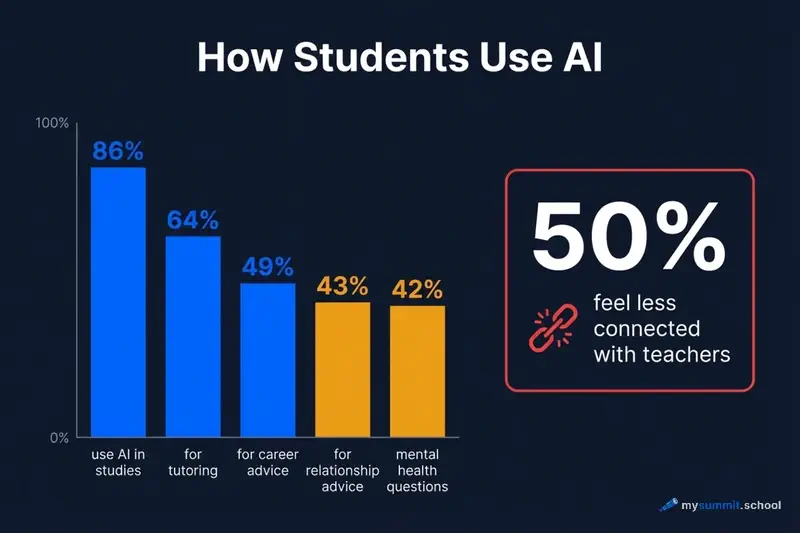

The numbers from the Education Week report are staggering. 86% of students in the US use AI, which is the overwhelming majority. Two-thirds use it for tutoring, nearly half for career advice. It sounds like an education revolution: a technology that helps people learn.

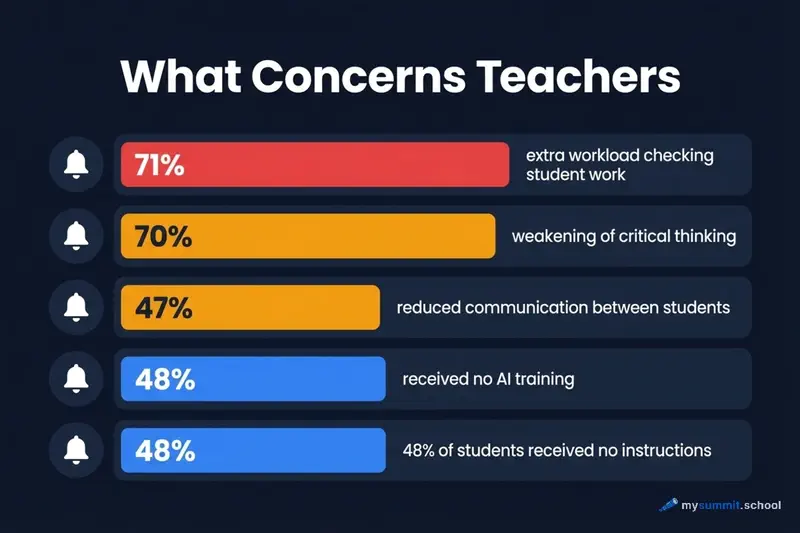

But there’s a catch. Half of those same students admit they feel less connected to their teachers when working with AI, and 70% of teachers see critical thinking atrophying. Many students stop thinking for themselves and just copy ready-made answers from ChatGPT.

And here’s where it gets truly alarming. 43% of students use AI for relationship advice, and 42% turn to it for mental health questions. Picture a teenager with depression asking ChatGPT what to do. The bot responds with something generic, and instead of real help it creates an illusion of support. Isolation can deepen.

Teachers, meanwhile, are overwhelmed. 71% report additional workload – now every assignment needs to be checked for “bot content.” Did the student actually write this? Or did they just copy it from ChatGPT and slightly rephrase? And the most absurd part – only 48% of teachers have received any AI training at all. And exactly the same percentage of students have received instructions on how to properly use AI.

The result is a paradox: schools are mass-adopting a technology that more than half of teachers and students have never been trained to use. And instead of help, they’re getting problems: isolation, skill degradation, and teacher burnout.

Is There Another Way?

While some schools battle the chaos, a team from the University of Illinois (Zheldibayeva et al., 2025) tried a different approach. Not banning AI. Not letting it run wild. But calibrating it – tuning it to a specific teacher, their assignments, and their grading criteria.

The main takeaway from their research sounds simple: “GenAI is not suitable for education without calibration. It needs recalibration to the teacher, their rubrics, and their materials.” Logical. But does it actually work?

The experiment was conducted at a regular, underfunded school in the American Midwest. Six 11th-grade students, one English teacher with two master’s degrees. The assignment was straightforward – write a 200-word essay comparing two texts.

The researchers approached it rigorously: IRB protocol (ethical approval), multiple data sources (drafts, final versions, focus groups, teacher surveys). All participants gave consent, anonymity was preserved. This wasn’t just “we tried it and told the story” – it was a methodologically sound study.

The key lay in two calibration elements. First, the AI was tuned to the teacher’s specific assignment. It drew on materials that had been loaded into the system, retrieving the relevant passages and adding them to its prompt (an approach called RAG, Retrieval-Augmented Generation), instead of relying on random data from the internet.
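The retrieval step can be illustrated with a minimal Python sketch. It assumes the teacher’s materials are already split into short passages and scores relevance by simple word overlap; production RAG systems usually rank passages with vector embeddings instead, and nothing here describes how CGScholar AI Helper actually works. The function names and sample passages are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def retrieve(query: str, passages: list[str], k: int = 2) -> list[str]:
    """Return the k passages that share the most words with the query.

    A toy stand-in for the retrieval step of RAG: real systems usually
    rank passages by embedding similarity, not raw word overlap.
    """
    query_words = tokenize(query)
    # sorted() is stable even with reverse=True, so ties keep the original order
    ranked = sorted(passages, key=lambda p: len(query_words & tokenize(p)), reverse=True)
    return ranked[:k]


def build_grounded_prompt(question: str, passages: list[str], k: int = 2) -> str:
    """Place only the retrieved teacher materials in front of the question."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages, k))
    return (
        "Answer using only the teacher's materials below.\n"
        f"Materials:\n{context}\n\n"
        f"Question: {question}"
    )


# Hypothetical materials for a two-text comparison assignment
materials = [
    "Text A describes the narrator's childhood on a farm.",
    "Text B describes a city childhood full of noise.",
    "The rubric rewards a clear thesis statement.",
]
print(build_grounded_prompt("How does the childhood in Text A differ from Text B?", materials))
```

The design point is that the model sees the teacher’s passages, not the open web, before it answers. Swapping word overlap for embeddings changes the ranking, not the idea.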

Second – the AI graded work strictly according to the teacher’s rubric. Six criteria: compare and contrast, grammar, thesis statement, textual evidence, detail, and analysis. Not a generic “well-written” comment, but a specific assessment on each point.
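Because the AI rated each criterion separately, its output can be checked mechanically before it reaches a student. A minimal sketch, assuming the reviewer is instructed to answer with one `Criterion: N stars` line per criterion; that reply format is our assumption, not something the study specifies.

```python
import re

# The six criteria of the teacher's rubric, as described in the study
RUBRIC = [
    "Compare and contrast",
    "Grammar",
    "Thesis statement",
    "Textual evidence",
    "Detail",
    "Analysis",
]

# Matches lines such as "Thesis statement: 2 stars" (an assumed reply format)
SCORE_LINE = re.compile(r"^\s*([A-Za-z ]+?)\s*:\s*([0-3])\s*stars?\b", re.MULTILINE)


def parse_scores(reply: str) -> dict[str, int]:
    """Extract a 0-3 star score for every rubric criterion.

    Raises ValueError when any criterion has no score, so a generic
    "well-written" reply is rejected instead of passed on to the student.
    """
    found = {name.strip().lower(): int(stars) for name, stars in SCORE_LINE.findall(reply)}
    missing = [c for c in RUBRIC if c.lower() not in found]
    if missing:
        raise ValueError("No score for: " + ", ".join(missing))
    return {c: found[c.lower()] for c in RUBRIC}
```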

And What Happened?

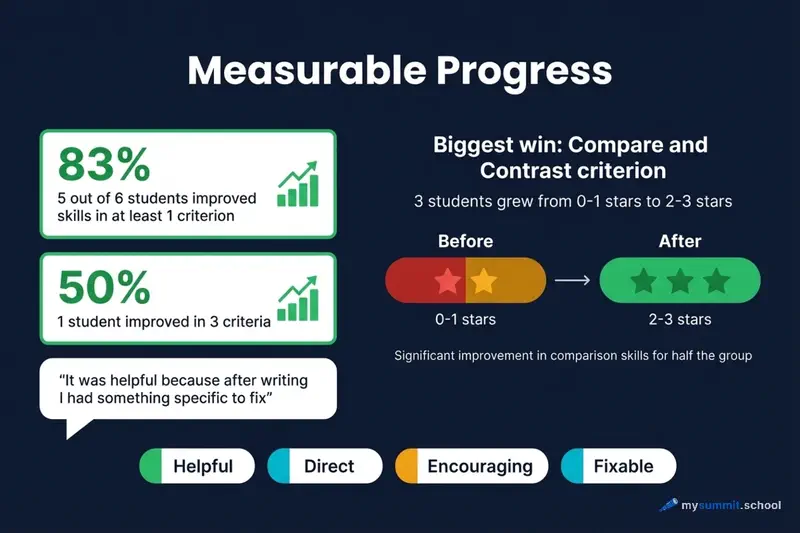

Five out of six students improved their work on at least one criterion. One student progressed on three criteria at once. The most significant growth was in “compare and contrast” – three students went from 0–1 stars to 2–3 stars.

But numbers are one thing. What the students themselves say is more telling. Here’s a quote from the focus group:

“It was helpful because after writing, I had something specific to fix. It told me exactly what needed to change.”

Another student adds: “I can go back and see what I can do right, so I can fix it later.”

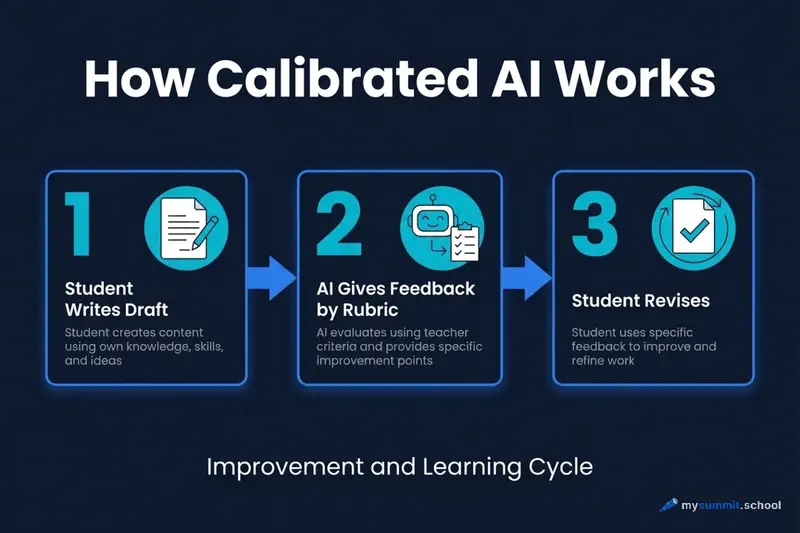

The teacher also noticed the difference: “The tool motivated students to revise their work. That was hard to achieve before.” Normally, students submit an essay and forget about it. Here, there was a reason to revise – because the feedback was specific and actionable.

When the researchers analyzed how students described the feedback, four marker words stood out: Helpful, Direct, Encouraging, Fixable. The AI wasn’t just criticizing; it was providing a clear roadmap for improvement.

Wait, Can We Trust This Study?

Honestly, when I first saw these results, my first thought was: “Only 6 students? Seriously?”

But let’s think about it. This is a pilot study – a proof of concept, not a final verdict. For a pilot study, six participants is normal. Plus the methodology is transparent: multiple data sources (drafts, final versions, focus groups, surveys), IRB protocol (strict ethical standards), and publication in a peer-reviewed journal.

And what’s especially important – the authors themselves acknowledge the limitations. That’s always a good sign. They explicitly state: yes, the sample is small. Yes, it’s only one underfunded school – results may not scale to wealthy private schools. Yes, it’s a short-term experiment – the effect after six months is unknown. Yes, incomplete data – not all students completed the pre/post surveys.

Does that mean the study proves nothing? No. It means we’re looking at a proof of concept: not a definitive answer, but a convincing “yes, calibrated AI can work.” The study did not test generic ChatGPT side by side, so the contrast with “AI in the wild” comes from setting its results against the Education Week data. Enough to try. Not enough to declare a revolution.

What’s the Difference Between Failure and Success?

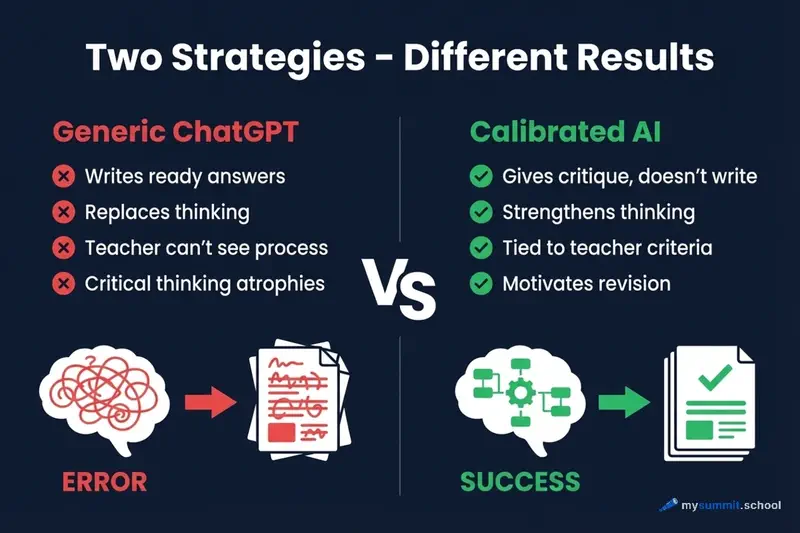

Similar technology: generic ChatGPT in one case, a calibrated large language model in the other. But opposite results. Why?

When schools simply allow ChatGPT without methodology, students copy ready-made answers. Teachers can’t tell student work from bot work. Critical thinking atrophies – that’s not my assumption, that’s what 70% of teachers report. Social isolation grows. Why talk to classmates when ChatGPT is always available?

But when AI is properly configured – it’s a different story. AI acts as a critic, not an author. Feedback is specific and tied to the teacher’s criteria. The student writes on their own, and AI points out weaknesses. Motivation to revise increases – the Illinois teacher confirms this.

Paradoxical, isn’t it? Generic AI replaces thinking. Calibrated AI amplifies thinking. Same technology, opposite results. It all comes down to how it’s applied, not what it can do.

What About Schools Beyond the US?

The Illinois experiment is impressive. But they used CGScholar AI Helper – a specialized tool that may not be available everywhere. Depending on your region, you might have access to ChatGPT, Claude, YandexGPT, GigaChat, or other local models. Does the calibration approach work with them?

In short – yes. The calibration principle is universal; it’s not tied to a specific model. Here’s what’s available:

ChatGPT and Claude – the most widely accessible options globally. Both support custom instructions (system prompts), file uploads for context, and can be configured as grading assistants. Claude’s Projects feature is particularly useful for loading course materials. ChatGPT’s Custom GPTs can be set up as dedicated teaching assistants with specific rubrics built in.

YandexGPT – available through Yandex 360 for education in Russian-speaking markets. Works without VPN, supports custom prompts, and materials can be loaded via API or integration. The downside – no built-in rubric system, everything needs to be configured manually through prompts.

GigaChat by Sber – has a free tier for education. Understands Russian well, and you can create custom assistants with instructions. Requires a Sber ID account, but that’s not a barrier.

The question is: what do you do if you don’t have a specialized tool? Can you apply the calibration method manually through regular LLMs? Yes, you can.

Step one – create a system prompt with your rubric. For example:

You are a teacher's assistant. Your task is to evaluate a student's essay based on the following criteria:
1. Compare and contrast (0–3 stars)
2. Grammar and spelling (0–3 stars)
3. Thesis statement (0–3 stars)
4. Textual evidence (0–3 stars)
5. Detail and specificity (0–3 stars)
6. Analysis (0–3 stars)

Do not write the essay for the student. Only identify specific weaknesses and how to improve them.
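If the same rubric is reused across assignments, generating this prompt from a single list of criteria keeps the wording consistent. A minimal Python sketch that reproduces the prompt above; the function name `build_system_prompt` is hypothetical.

```python
def build_system_prompt(criteria: list[str], max_stars: int = 3) -> str:
    """Turn a list of rubric criteria into a reviewer system prompt."""
    lines = [
        "You are a teacher's assistant. Your task is to evaluate a student's "
        "essay based on the following criteria:"
    ]
    # Number each criterion and attach the star scale, e.g. "2. Grammar and spelling (0–3 stars)"
    for number, criterion in enumerate(criteria, start=1):
        lines.append(f"{number}. {criterion} (0–{max_stars} stars)")
    lines.append(
        "Do not write the essay for the student. "
        "Only identify specific weaknesses and how to improve them."
    )
    return "\n".join(lines)


criteria = [
    "Compare and contrast",
    "Grammar and spelling",
    "Thesis statement",
    "Textual evidence",
    "Detail and specificity",
    "Analysis",
]
print(build_system_prompt(criteria))
```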

Step two – load materials into context. In ChatGPT or Claude, upload reference documents directly. In YandexGPT, add texts directly to the request. In GigaChat, use a custom assistant with a knowledge base.
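For models without file uploads, where texts go directly into the request, the materials have to be packed into the prompt text. A minimal sketch, assuming a character budget stands in for the model’s real context limit (actual limits are measured in tokens and vary by model, so check its documentation); the `###` labels and the 4,000-character default are arbitrary choices.

```python
TRUNCATION_MARK = " [truncated]"


def pack_materials(docs: dict[str, str], budget_chars: int = 4000) -> str:
    """Concatenate labelled reference texts without exceeding a character budget.

    Documents are added in order. The first one that does not fit is cut
    short and marked as truncated; any documents after it are skipped.
    """
    parts: list[str] = []
    used = 0
    for title, text in docs.items():
        block = f"### {title}\n{text}\n"
        if used + len(block) > budget_chars:
            # Keep whatever still fits, leaving room for the truncation mark
            room = budget_chars - used - len(TRUNCATION_MARK)
            if room > 0:
                parts.append(block[:room] + TRUNCATION_MARK)
            break
        parts.append(block)
        used += len(block)
    return "".join(parts)
```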

Step three – verify that AI is not writing instead of the student. Request only critique: “Evaluate this essay, but DO NOT suggest replacement text.” If the AI starts rewriting – refine the prompt.
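Part of this check can be automated before a reply reaches the student. The sketch below is a crude heuristic of our own, not a feature of any tool mentioned here: it flags replies that announce a rewritten version or that quote long passages of new wording absent from the student’s essay. The cue phrases and the 12-word threshold are assumptions to tune.

```python
import re

# Phrases that often introduce replacement text (assumed list; extend as needed)
REWRITE_CUES = ("rewritten", "revised version", "here is an improved", "you could write:")


def looks_like_rewrite(reply: str, essay: str, max_new_quote_words: int = 12) -> bool:
    """Return True if an AI reply seems to supply text for the student."""
    lowered = reply.lower()
    if any(cue in lowered for cue in REWRITE_CUES):
        return True
    # Quoting the student's own sentences is fine (it points at weaknesses);
    # long quotes of wording absent from the essay suggest ghost-writing.
    for quote in re.findall(r'"([^"]+)"', reply):
        if quote not in essay and len(quote.split()) > max_new_quote_words:
            return True
    return False
```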

Here’s a full example:

System instruction: "You are a strict essay reviewer. Evaluate the student's work based on the teacher's criteria. Point out only weaknesses. Do not rewrite text for the student."

User prompt: "Here is the grading rubric: [insert criteria]. Here is the student's essay: [text]. Evaluate each criterion and specify exactly what needs improvement."
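The two parts of this example map onto the system and user roles that most chat APIs accept. A sketch that only builds the request body, assuming an OpenAI-style Chat Completions message format; the model name is a placeholder, and sending the request and authentication are left out.

```python
import json

SYSTEM_INSTRUCTION = (
    "You are a strict essay reviewer. Evaluate the student's work based on "
    "the teacher's criteria. Point out only weaknesses. "
    "Do not rewrite text for the student."
)


def build_review_request(rubric: str, essay: str, model: str = "MODEL-NAME-PLACEHOLDER") -> dict:
    """Assemble a chat request body: a fixed reviewer role plus rubric and essay."""
    user_prompt = (
        f"Here is the grading rubric:\n{rubric}\n\n"
        f"Here is the student's essay:\n{essay}\n\n"
        "Evaluate each criterion and specify exactly what needs improvement."
    )
    return {
        "model": model,  # placeholder: substitute a model your provider offers
        "messages": [
            {"role": "system", "content": SYSTEM_INSTRUCTION},
            {"role": "user", "content": user_prompt},
        ],
    }


body = build_review_request("1. Thesis statement (0–3 stars)", "Both texts describe childhood.")
print(json.dumps(body, ensure_ascii=False, indent=2))
```

Keeping the reviewer role in the system message means students can paste essays into the user message without being able to edit the instructions.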

This isn’t a one-to-one replacement for CGScholar, but the principle is the same: AI as a critic, not an author.

What Should Teachers and Schools Do?

First – don’t allow “AI in the wild.” Simply permitting ChatGPT or any other LLM without methodology leads to skill degradation. Clear protocols are needed. Not “you can use AI,” but “here’s how to properly use AI.”

Second – calibrate AI to your tasks. Even without specialized software. Create a system prompt with your grading rubric. Load teaching materials into the request context. AI should work as a critic, not an author.

Third – use the “Sandwich” method (Human-AI-Human). Stage one: the student writes a draft independently. Stage two: AI provides critique based on specific criteria. Stage three: the student revises the work based on the critique. Human at the beginning, human at the end. AI in the middle, as a reviewer.
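Since the order of the stages is the whole point, a tool built around the method can enforce it. A minimal sketch, assuming a hypothetical `placeholder_critique` function stands in for the calibrated AI; it returns fixed text and makes no model call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class SandwichAssignment:
    """Human draft, then AI critique, then human revision, in that order only."""

    critique_fn: Callable[[str], str]  # stand-in for the calibrated AI reviewer
    draft: Optional[str] = None
    critique: Optional[str] = None
    revision: Optional[str] = None

    def submit_draft(self, text: str) -> None:
        # Stage 1 (human): the student writes before any AI is involved
        if self.draft is not None:
            raise RuntimeError("Draft already submitted")
        self.draft = text

    def request_critique(self) -> str:
        # Stage 2 (AI): the reviewer only ever sees an existing human draft
        if self.draft is None:
            raise RuntimeError("Write a draft before asking for critique")
        self.critique = self.critique_fn(self.draft)
        return self.critique

    def submit_revision(self, text: str) -> None:
        # Stage 3 (human): a revision is only accepted once critique exists
        if self.critique is None:
            raise RuntimeError("Ask for critique before revising")
        if text.strip() == (self.draft or "").strip():
            raise ValueError("Revision is identical to the draft")
        self.revision = text


def placeholder_critique(draft: str) -> str:
    """Hypothetical stand-in; a real setup would call the calibrated model."""
    return "Thesis statement: too general. Name the contrast you will argue."
```

Rejecting a revision identical to the draft is one design choice among several; a stricter version could require changes tied to specific criteria in the critique.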

Fourth – evaluate the process, not just the result. Oral defense of the work – the student must explain every thesis. Version history in Google Docs – the entire writing process is visible. Evaluate prompt quality – a good prompt demonstrates deep understanding of the topic.
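Version history makes one warning sign easy to see: a large block of finished text appearing in a single step. A sketch using Python’s standard `difflib`, assuming you already have the document’s versions as plain-text snapshots in chronological order (exporting them is not shown); the 40-word threshold is an arbitrary assumption.

```python
import difflib


def words_added(before: str, after: str) -> int:
    """Count words inserted between two snapshots of the same document."""
    matcher = difflib.SequenceMatcher(a=before.split(), b=after.split(), autojunk=False)
    added = 0
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # 'insert' and 'replace' opcodes both bring new words into the text
        if tag in ("insert", "replace"):
            added += j2 - j1
    return added


def flag_large_pastes(snapshots: list[str], threshold: int = 40) -> list[int]:
    """Return indices of snapshots that added more than `threshold` words at once."""
    return [
        i
        for i in range(1, len(snapshots))
        if words_added(snapshots[i - 1], snapshots[i]) > threshold
    ]
```

A flag is a reason for a conversation or an oral defense, not proof of cheating: students also paste in their own notes.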

Fifth – preserve human contact. AI for homework, live interaction for the classroom. Mandatory discussions and debates without devices. Five-minute interviews after every written assignment. Technology should not replace communication.

For Managers and Parents

If you’re choosing a school or evaluating a current learning program, there are several key questions worth asking. Have the teachers received AI training? Currently only 48% have, and this is a critically important factor. Does the school have a clear AI usage policy, or is it just “feel free to use ChatGPT”? Is the AI configured for the specific curriculum, or is the school using generic solutions? How exactly does the school prevent student social isolation? And finally – is there an ethical code for working with AI?

Good signs look like this: the student can explain exactly how they used AI for a specific assignment. Teachers have grading rubrics that account for AI use. AI is used for feedback and critique, not for simply generating ready-made content. And most importantly – sufficient time is preserved for live interaction with the teacher, because without that, education loses its meaning.

Red flags include: the student simply copy-pastes from ChatGPT and can’t explain the content. Teachers don’t know how to evaluate work in the AI era and are visibly confused. The school has no coherent AI usage policy – everyone does what they want. And the most dangerous sign – the child’s motivation to learn has noticeably decreased because “you can just ask the bot.”

For Corporate Training and L&D Professionals

Interestingly, the same principles carry over to employee training. Calibrate your corporate AI to your standards and processes, just as a teacher tunes AI to their rubric. The “Sandwich” method suits presentations, reports, and strategies well: the employee writes a draft, AI critiques it, the employee refines it.

Evaluate the quality of your employees’ prompts: it is a useful indicator of task comprehension. Someone who can formulate a good prompt usually understands the problem well. And an oral defense of ideas always matters more than polished AI-generated slides.

Here’s a concrete example. Instead of “Ask ChatGPT to write a strategy,” try this: “Write your strategy thesis points independently, ask AI to find logical weaknesses, refine based on the critique, and present orally to the team.” See the difference? In the first case, the person is removed from the process. In the second – they’re at the center, and AI amplifies them.

An Ethical Code for AI in Education

Based on both studies, four key principles emerge that every school should adopt.

Transparency – the first principle. If a student used AI, they must disclose it. Not just “yes, I used ChatGPT,” but specifically: “The introduction was generated by ChatGPT, the body was written by me, the conclusions were formulated collaboratively.” This level of detail teaches honesty and helps the teacher assess the student’s actual contribution.
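A fixed format makes such disclosures easier to write and to check. A minimal sketch of a per-section disclosure record; the three authorship labels mirror the example above, while the class and function names are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Authorship(Enum):
    STUDENT = "written by me"
    AI = "generated by AI"
    COLLABORATIVE = "formulated collaboratively with AI"


@dataclass
class SectionDisclosure:
    section: str
    authorship: Authorship
    tool: str = ""  # e.g. "ChatGPT"; left empty for student-only sections


def disclosure_statement(items: list[SectionDisclosure]) -> str:
    """Render a one-line disclosure in the style of the example above."""
    parts = []
    for item in items:
        text = f"{item.section}: {item.authorship.value}"
        # Name the tool only for sections where AI was involved
        if item.authorship is not Authorship.STUDENT and item.tool:
            text += f" ({item.tool})"
        parts.append(text)
    return "; ".join(parts) + "."
```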

Accountability – the second principle. The student is responsible for any text submitted to the teacher. Period. The argument “that’s what the bot wrote” is not accepted and should never be accepted. If you submit work with errors or factual inaccuracies – that’s your problem, even if ChatGPT let you down.

Humans remain at the center – the third principle. AI works as a co-pilot, not autopilot. The final decision always belongs to the human. These aren’t just nice words – it’s the critical difference between skill amplification and skill degradation.

Honesty about limitations – the fourth principle. AI hallucinates – teach students to verify facts against primary sources. AI can be biased – teach them to critically evaluate its suggestions. AI doesn’t understand the context of your specific situation – teach them to add their own experience and judgment. The technology is powerful, but not magic.
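Quotations are the easiest claims to check automatically. A minimal sketch, assuming the primary source is available as plain text: it returns quoted passages from an AI answer that do not appear verbatim in the source, ignoring case, extra whitespace, and curly versus straight quotation marks. Paraphrased claims still need a human to verify them.

```python
import re

# Map curly double quotes to straight ones before extracting quotations
CURLY = str.maketrans({"\u201c": '"', "\u201d": '"'})


def normalize(text: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return " ".join(text.lower().split())


def unverified_quotes(ai_answer: str, source: str) -> list[str]:
    """Return quotations in the AI answer that are not found in the source."""
    answer = ai_answer.translate(CURLY)
    src = normalize(source)
    return [q for q in re.findall(r'"([^"]+)"', answer) if normalize(q) not in src]
```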

Conclusions: What’s Next?

Let’s pull it all together. Three key insights emerge from the 2025 research.

First – mass uncontrolled AI adoption is destroying education. The numbers speak for themselves: 86% of students use AI, but half of them feel they’re losing their connection with teachers. 70% of educators see critical thinking degrading in their students. Social isolation grows – why talk to classmates when ChatGPT is always available?

The second insight: calibrated AI produces the opposite effect. Five out of six students improved on at least one writing criterion when working with properly configured AI. The students themselves describe the feedback as “helpful, direct, encouraging, fixable.” The teacher reported greater motivation to revise work, something that had previously been hard to achieve.

The third insight – the key to success isn’t in bans, but in methodology. Calibrating AI to a specific teacher through RAG and grading rubrics. The “Sandwich” method with a Human-AI-Human cycle. Evaluating the work process, not just the final result. And preserving human contact – without it, education loses its meaning, no matter how good the AI.

What to Do Right Now

Concrete steps for different roles.

If you’re a teacher – start small, but start now. Take AI training, even if it’s just a 1–2 hour webinar – basic understanding is critically important. Create a clear grading rubric that accounts for AI use – without it, you’re grading blind. Try the “Sandwich” method on at least one assignment and see the results yourself.

If you’re an education program manager – here’s where investment is needed. Invest in teacher training, because right now only 48% have been trained, and that’s critically low. Implement calibrated solutions rather than just saying “use ChatGPT.” Generic AI without methodology is a path to degradation. Create an ethical code for AI use so everyone plays by the same rules.

If you’re a parent – ask uncomfortable questions. Ask the school about their AI usage policy. Discuss with your child how exactly they use AI – for getting feedback or for plain cheating? Insist on preserving human contact with teachers, because without it, education becomes a formality.

If you’re a corporate trainer, adapt the “Sandwich” method for business training; the draft, critique, revision cycle carries over naturally from the classroom. Evaluate the quality of your employees’ prompts, a good indicator of task understanding. Implement oral defense of ideas rather than settling for polished AI-generated presentations.

The Future of Education: A Hybrid Model

The 2025 research shows the way forward – and it’s not about extremes. Not a total AI ban, not full immersion in technology. A hybrid model.

At home, alone with a computer and calibrated AI – gathering material, structuring thoughts, receiving specific critique aligned to the teacher’s criteria. Here, technology helps students think deeper, see weaknesses, and strengthen their arguments.

In the classroom, without devices and AI – final conclusions are written by hand. Debates and discussions with real people, where you need to react quickly and defend your position. Oral defense before the teacher, where you can’t hide behind someone else’s text.

Paradoxically, the best result comes not from “AI vs human,” but from “AI + human” – each in their proper role. AI as co-pilot, not autopilot. Sound familiar? The exact same principle applies to corporate training – technology amplifies the human, but does not replace them.

Sources and Additional Materials

Primary research:

Zheldibayeva, R., Nascimento, A. K. de O., Castro, V., Kalantzis, M., & Cope, B. (2025). The impact of AI-driven tools on student writing development: A case study. Online Journal of Communication and Media Technologies, 15(3), e202526. Full text available on arXiv.

Vilcarino, J., & Langreo, L. (2025, October 8). Rising use of AI in schools comes with big downsides for students. Education Week.