AI Saves Teachers 6 Hours a Week. But 97% Don't Notice

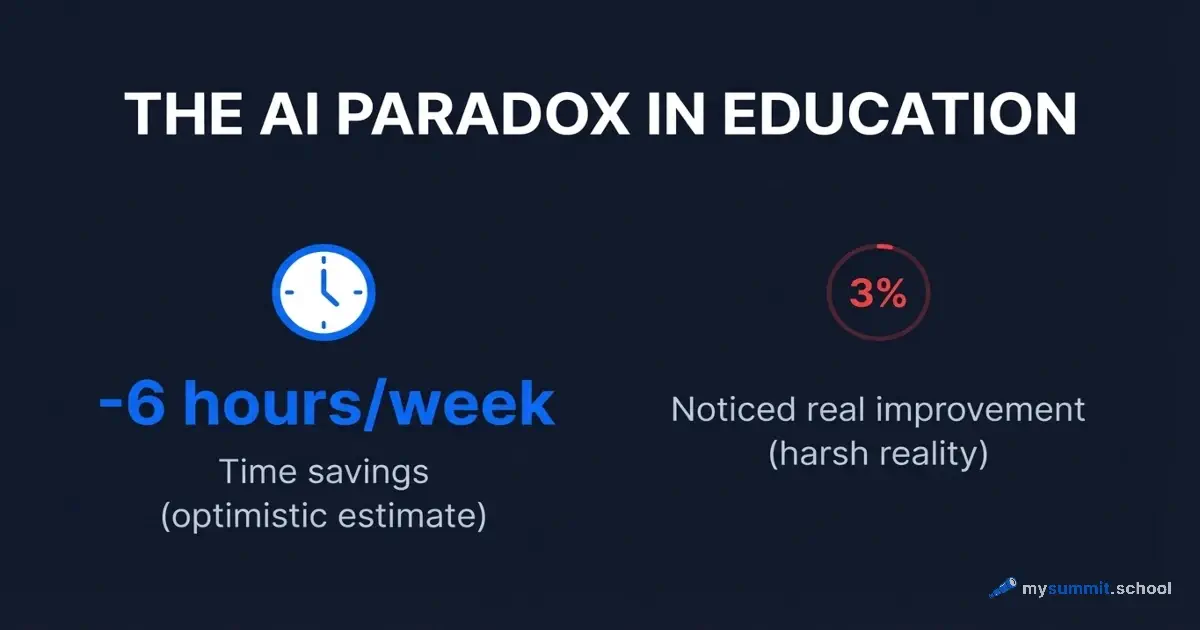

A Gallup and Walton Family Foundation survey (2024–2025, representative sample of US teachers) produced an impressive number: teachers who regularly use AI save an average of 5.9 hours per week – the equivalent of six full work weeks per school year. Sounds like a solved problem.

But a parallel Royal Society of Chemistry survey (2024, UK) paints a different picture: 44% of teachers tried AI, yet only 3% reported a real reduction in workload. A maths teacher from Ireland explained the gap more precisely than any statistic: “AI generates worksheets quickly, but they need thorough checking – and the time savings turn out smaller than expected.”

Who is right? We previously examined the AI crisis in education from the student side – 86% of students use AI, yet critical thinking is declining. Now we turn to the instructor side. Over the past two years, enough experimental data has accumulated to answer this question with numbers, not opinions.

What the experiments showed

Structured tasks: AI works

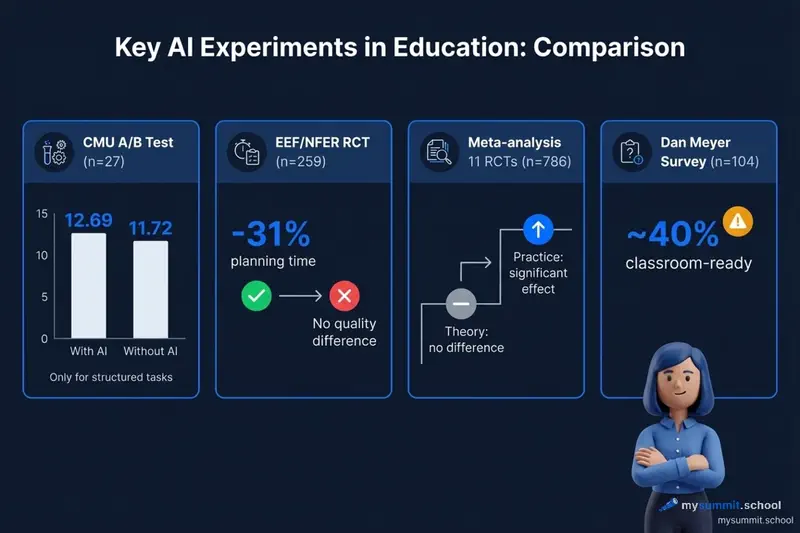

A Carnegie Mellon University study (Moore et al., AIED 2025) ran an A/B experiment: 27 master’s students each created 8 micro-lessons – four with ChatGPT (GPT-4), four without. Evaluation was blind, using a 5-criterion rubric (maximum 15 points).

Result: micro-lessons made with AI scored 12.69/15 versus 11.72/15 without AI. The difference was statistically significant. But – and this is key – the effect appeared only for structured tasks: universal design for learning, guided discovery, “explain–practise.” For tasks requiring creative approaches or social sensitivity – collaborative learning, peer instruction – there was no difference.

Philippa Hardman confirmed the same pattern in her study: for “structured and well-known” tasks, AI improved quality; for unconventional and socially nuanced ones, quality dropped by 19 percentage points.

The conclusion from both studies is the same: AI amplifies what is already well-formalised. Where an original pedagogical solution is needed, it is useless or harmful.

Real classrooms: time saved, quality unchanged

The largest randomised controlled trial in this area was conducted by the Education Endowment Foundation (EEF) together with NFER: 259 teachers in 68 UK schools. Teachers received ChatGPT and a detailed usage guide.

Time result: 25.3 minutes saved per week – a 31% reduction in planning time. But an independent expert panel assessing lesson quality found no noticeable difference between AI-prepared lessons and the control group.
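The two time figures also imply a baseline. A back-of-envelope sketch, assuming the 31% reduction is measured against weekly planning time without AI (the variable names are illustrative and do not come from the trial report):

```python
# Back-of-envelope check of the EEF figures quoted above.
# Assumption: the 31% reduction is relative to baseline weekly planning time.
minutes_saved_per_week = 25.3
relative_reduction = 0.31

implied_baseline = minutes_saved_per_week / relative_reduction
implied_with_ai = implied_baseline - minutes_saved_per_week

print(f"Implied baseline planning time: {implied_baseline:.1f} min/week")
print(f"Implied planning time with AI: {implied_with_ai:.1f} min/week")
```

Under that assumption, the measured planning time was roughly 82 minutes a week to begin with, which puts the saving in perspective.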

This is the most uncomfortable finding in the entire body of research. AI saves time – but does not improve what teachers produce with that time.

Meta-analysis: practical skills – yes, theory – no

A meta-analysis of 11 randomised controlled trials (PMC, 2025, 786 medical students) confirmed the pattern: theoretical knowledge in AI and non-AI groups did not differ. But practical skills were significantly higher in the AI group – with a notable effect size.

AI helps master “how to do it.” It does not help understand “why it works.”

Where AI breaks down: three systemic problems

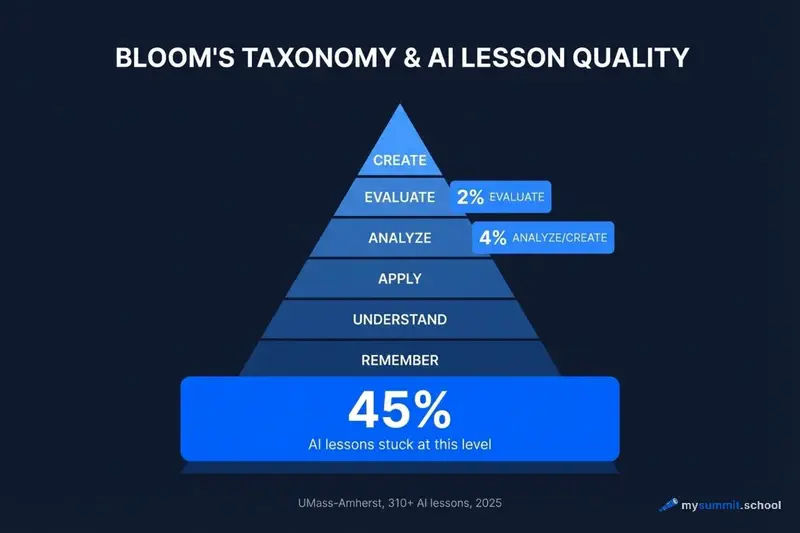

45% of lessons stuck on “remember”

A UMass-Amherst study (2025, 310+ civics lessons, 3 AI systems) revealed the scale of the problem. Of all AI-generated lessons:

- 45% stayed at the “remember” level – the lowest in Bloom’s taxonomy (a hierarchy of cognitive tasks: from memorising facts to analysis, evaluation, and creative synthesis)

- Only 4% offered analysis or creation

- Only 2% asked students to evaluate information

- Just 11–16% included meaningful use of educational technology

AI produces lessons that look structured, but in terms of cognitive depth are on par with a 1990s workbook. This is not a flaw in any particular model. It is most likely a consequence of how language models are trained: on text corpora where simple explanations and recall questions appear far more often than critical thinking tasks.

Teachers overestimate AI lesson readiness

Dan Meyer’s survey (104 maths teachers, 2024) gave a concrete estimate: AI materials are approximately 40% ready for classroom use. The myth of the “80/20 rule” – AI does 80% of the work, the teacher finishes 20% – turned out closer to 40/60, with the teacher doing the larger share. One respondent put it bluntly: “It’s easier to create slides from scratch than to salvage what AI produced.”

A SUNY Geneseo study (pre-service teachers, 2024) confirmed this from another angle: when trainee teachers were asked to carefully analyse AI-generated lesson plans, their confidence in the tool dropped significantly. They found misalignments between objectives and activities, factual errors, and missing scaffolding for students at different levels.

The conclusion is paradoxical: the more deeply a teacher examines an AI lesson, the worse they rate it. A surface-level check creates an illusion of quality.

The “verification tax” eats up the savings

This explains the gap between Gallup (5.9 hours saved) and RSC UK (3% actually noticed a difference). Teachers who report savings often do not account for time spent checking and reworking. Those who do account for it find that savings partially or fully disappear.
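The mechanism is simple subtraction. A minimal sketch, in which the checking times are hypothetical values chosen for illustration and only the 5.9-hour figure comes from the Gallup survey:

```python
def net_weekly_savings(gross_hours_saved: float, verification_hours: float) -> float:
    """Hours actually saved once checking and reworking time is subtracted."""
    return gross_hours_saved - verification_hours


# Gallup's self-reported average, in hours per week.
reported = 5.9

# Hypothetical teachers: same reported saving, different checking effort.
scenarios = [("light checking", 1.0), ("thorough checking", 4.0), ("full rework", 6.5)]
for label, checking_hours in scenarios:
    net = net_weekly_savings(reported, checking_hours)
    print(f"{label}: net {net:+.1f} h/week")
```

The same headline saving can leave a teacher well ahead, barely ahead, or behind, depending on how much verification the output needs.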

Why structured prompts change outcomes

Research shows that roughly 50% of quality improvement in AI lessons is determined not by the model, but by how the request is formulated.

Specific data:

- Structured prompts produce ~60% more accurate and relevant educational content compared to unstructured ones

- Specifying the Bloom’s level shifted the distribution from 78% recall questions to a majority at the analysis and application levels

- Including backward design principles (from outcome to activities) improved alignment of objectives, tasks, and assessment by 40–60%

- Specifying evaluation criteria reduced post-generation rework by ~35%

The difference between “Create a lesson on quadratic equations for 9th grade” and a structured prompt specifying the Bloom’s level, activity format, expected outcomes, and assessment criteria is the difference between 40% and 75% lesson readiness.
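One way to make those four elements hard to forget is to treat the prompt as a filled-in form. The sketch below is an illustration only: `LessonPromptSpec` and `build_prompt` are hypothetical names, and the wording of the generated prompt is an assumption, not a template taken from the studies cited.

```python
from dataclasses import dataclass, field


@dataclass
class LessonPromptSpec:
    """Hypothetical container for the constraints a structured prompt states."""
    topic: str
    grade: str
    bloom_level: str
    activity_format: str
    expected_outcomes: list[str] = field(default_factory=list)
    assessment_criteria: list[str] = field(default_factory=list)


def build_prompt(spec: LessonPromptSpec) -> str:
    """Render the spec as a plain-text prompt, one constraint per line."""
    lines = [
        f"Create a lesson on {spec.topic} for {spec.grade}.",
        f"Target Bloom's level: {spec.bloom_level}.",
        f"Activity format: {spec.activity_format}.",
        "Expected outcomes:",
        *[f"- {item}" for item in spec.expected_outcomes],
        "Assessment criteria:",
        *[f"- {item}" for item in spec.assessment_criteria],
    ]
    return "\n".join(lines)


spec = LessonPromptSpec(
    topic="quadratic equations",
    grade="9th grade",
    bloom_level="application and analysis",
    activity_format="guided discovery in pairs",
    expected_outcomes=["Students solve quadratics by factoring and explain each step"],
    assessment_criteria=["Correct roots", "Written justification of the chosen method"],
)
print(build_prompt(spec))
```

The first line of the output is the unstructured prompt from the paragraph above; everything after it is what the structured version adds.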

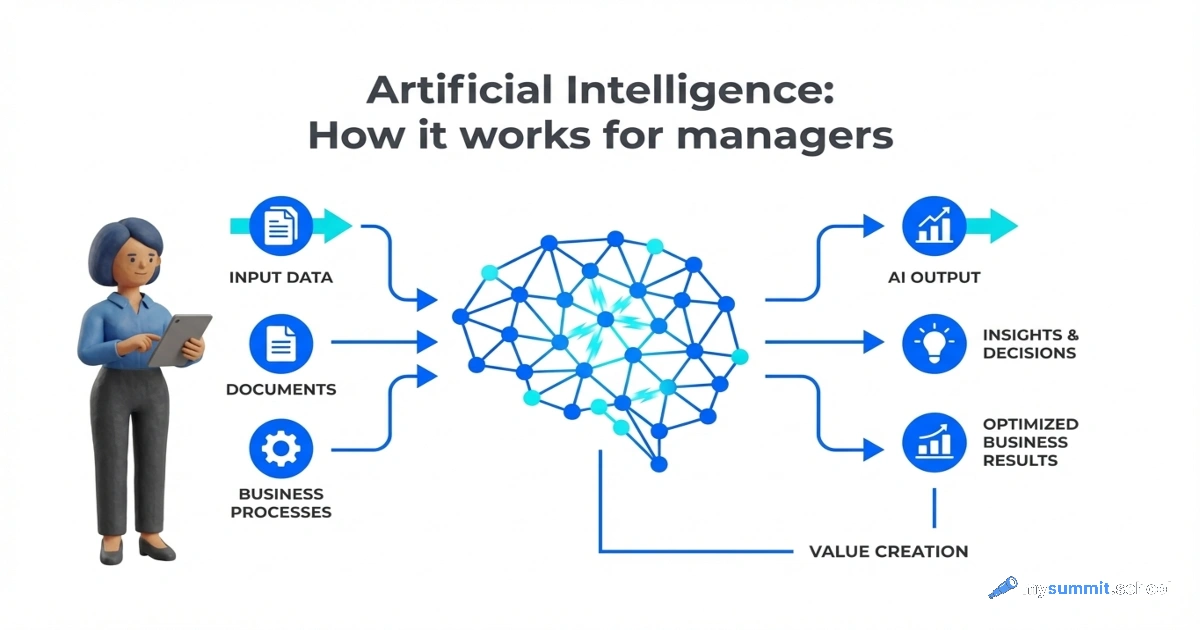

This is why prompt structure matters not only for managers: for instructors it is critical.

A separate line of evidence: purpose-built educational tools (Curipod, MagicSchool AI, Diffit, SchoolAI) show significantly higher teacher satisfaction compared to ChatGPT without configuration. The reason is not the model – it is that the prompt already contains pedagogical constraints: standards, age filters, lesson templates. Teachers using ChatGPT directly have to formulate these constraints themselves. Most do not.

The global training gap

The experimental evidence is clear: AI works when teachers know how to work with it. This raises a separate question – what happens when entire countries try to bridge this gap at scale? The pattern is remarkably consistent worldwide: mass exposure to AI is widespread, but sustained classroom use remains rare.

United States: trying is not using

The Gallup data shows that over 50% of US teachers have tried AI at least once. But EdWeek and RAND Corporation surveys paint a more granular picture: only about 20% use AI regularly in their practice. The gap between a one-time experiment and sustained adoption is universal – and the US, despite leading in AI tool availability, is not immune.

The same “verification tax” applies: teachers try AI, find that checking and reworking output takes longer than expected, and quietly go back to their previous workflow. The Gallup headline number (5.9 hours saved) comes from the self-selected minority who persisted long enough to build efficient routines.

United Kingdom: workload unchanged for most

The RSC data tells the story concisely: 44% tried AI, but only 3% noticed a real workload reduction. The EEF randomised trial added the critical detail – even with dedicated training and a structured guide, AI saved planning time but produced no measurable improvement in lesson quality. Teachers got faster at producing the same quality of work, not better at producing higher-quality work.

This is the core tension: efficiency gains without quality gains are fragile. Once teachers realise the output still needs the same level of reworking, the perceived time savings shrink.

Mass certification vs. deep training: two case studies

The most striking contrast comes from two internationally documented programmes that illustrate the gap between breadth and depth.

Kazakhstan ran one of the largest AI teacher-training initiatives in the world: 324,000 registrations and 252,000 completions through the Orleu centre with UNESCO support (2025). The programme was mandatory and free. But the content was general AI literacy based on the UNESCO framework – not subject-specific application. 252,000 teachers are now certified as “AI-literate,” yet not a single published report documents how many of them actually use AI in real lessons. Certificate ≠ practice.

An indirect confirmation: in February 2026, several Kazakh pedagogical universities announced the creation of dedicated AI faculties for educators – a signal that current training is recognised as insufficient.

Belarus (BSPU) trained 97 teachers in a deep, practice-oriented AI-in-teaching programme. Result: 70%+ developed their own AI-based teaching methods and implemented them in practice. This is the highest “training → practice” conversion rate documented anywhere.

Against the broader national backdrop (113th out of 160 on the AI Adaptation Index, 43% of the population has never used AI, fewer than 10% of teachers use AI regularly), the BSPU result is an outlier. It confirms what the Western experiments show: depth of training matters more than breadth of certification. 97 teachers with real skills produce more impact than 252,000 certificates without practical application.

This finding connects directly to what research shows about the “illusion of depth” in AI training: mass programmes without practical grounding create a sense of competence that is not backed by actual skill.

Practical recommendations: how to use AI for lesson planning

The combined research provides a concrete framework.

Principle: AI is the first draft, not the finished lesson

None of the studies reviewed here shows that an AI-generated lesson can be used without revision. Dan Meyer’s data (40% readiness), EEF (no quality difference), UMass (45% at “remember” level) all agree: AI produces a starting point, not a final product.

The value lies in eliminating the blank-page problem. AI helps you quickly get a structure you can critically rework. This is faster than starting from scratch – but only if the teacher knows what exactly to rework.

What to specify in the prompt when generating a lesson

Bloom’s level should be specified explicitly for each section – without it, close to half of the content tends to stay at “remember” (45% in the UMass data). Example: “Introduction – understanding, practice – application/analysis, reflection – evaluation.”

A concrete student deliverable should be stated directly: not “discuss in groups” but “students create X demonstrating understanding of Y.” This separates a working lesson from a perfunctory one.

Anticipating common misconceptions is worth requesting in the prompt: “What typical misconceptions do students encounter when studying this topic?” This is what distinguishes an expert lesson plan from a template – and what AI does not do without an explicit request.

Backward design reverses the order: start with assessment criteria, then derive activities. By default, AI does the opposite – generates activities first, then retrofits assessment.

The “verification tax” must be budgeted upfront: an AI lesson will require 30–60% of time for fact-checking, adaptation to a specific class, and cognitive-level adjustment. If you do not budget this time, you have not saved time; you have only shifted the burden.

What to check in an AI-generated lesson

- Alignment between activities and stated objectives – the main weakness found in the SUNY Geneseo study

- Factual accuracy – AI hallucinates in 3–9% of cases on general topics and up to 30% on specialised ones

- Cognitive level of each activity – label by Bloom’s manually

- Scaffolding and hints for students at different readiness levels

- Connections to preceding and following lessons (AI treats each lesson as isolated)
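The Bloom’s check in particular lends itself to a quick tally once each activity has been labelled by hand. A minimal sketch, assuming the six standard Bloom’s levels as labels; the example labels and the 45% warning threshold are hypothetical choices, the latter borrowed from the UMass figure rather than from any published rule:

```python
from collections import Counter

# Bloom's taxonomy levels, lowest to highest.
BLOOM_LEVELS = ["remember", "understand", "apply", "analyse", "evaluate", "create"]


def bloom_distribution(labels: list[str]) -> dict[str, float]:
    """Share of activities at each Bloom's level, given manual labels."""
    unknown = set(labels) - set(BLOOM_LEVELS)
    if unknown:
        raise ValueError(f"Unknown Bloom's labels: {sorted(unknown)}")
    if not labels:
        raise ValueError("No activities labelled")
    counts = Counter(labels)
    return {level: counts[level] / len(labels) for level in BLOOM_LEVELS}


# Hypothetical labels a teacher assigned to the six activities of one draft lesson.
activity_labels = ["remember", "remember", "understand", "remember", "apply", "analyse"]
shares = bloom_distribution(activity_labels)

# Assumed threshold: flag the draft if recall tasks make up 45% or more of it.
if shares["remember"] >= 0.45:
    print(f"{shares['remember']:.0%} of activities are recall-only; rework before class")
```

The tally does not replace the teacher’s judgement about each activity; it only makes a lopsided draft visible at a glance.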

AI works best for those who need it least

The most uncomfortable conclusion from seven experiments: AI tools for lesson preparation deliver the greatest effect in the hands of instructors who already understand what a good lesson looks like. For them, AI is an accelerator – a quick draft they know how to rework.

For instructors without this foundation, AI creates a different risk. SUNY Geneseo showed: without critical review, AI lessons look convincing but contain systemic weaknesses. If a teacher does not see these weaknesses, they reproduce them in the classroom.

This is exactly the same mechanism that Anthropic’s research documented among developers: AI reduces cognitive friction – and with it, depth of understanding. For an instructor still developing their pedagogical thinking, this is not acceleration. It is a bypass.

The global evidence points the same way: 252,000 Kazakh teachers hold AI-literacy certificates from a UNESCO-backed programme, yet no published report shows that they apply it in their Tuesday lesson. The US shows the same pattern at a different scale – over half have tried AI, but only one in five uses it regularly. The problem is not the AI tools. The problem is the gap between knowing “AI exists” and the skill of “I can use AI to improve a specific lesson on Tuesday.”

The 97 teachers from the BSPU programme in Belarus closed that gap – and more than 70% of them created their own methods. The difference: not the volume of training, but its depth and subject-specific grounding. I see the same pattern in conversations with managers and instructors: knowing that AI exists and knowing how to apply it on Tuesday are different competencies with different development paths.

A question for every instructor reading this: when you get your next AI-generated lesson plan, will you check it against Bloom’s? Or will you accept it because it looks structured?
