AI Detection Test 2025: 77% Accuracy Among 140 Participants – Try It Yourself

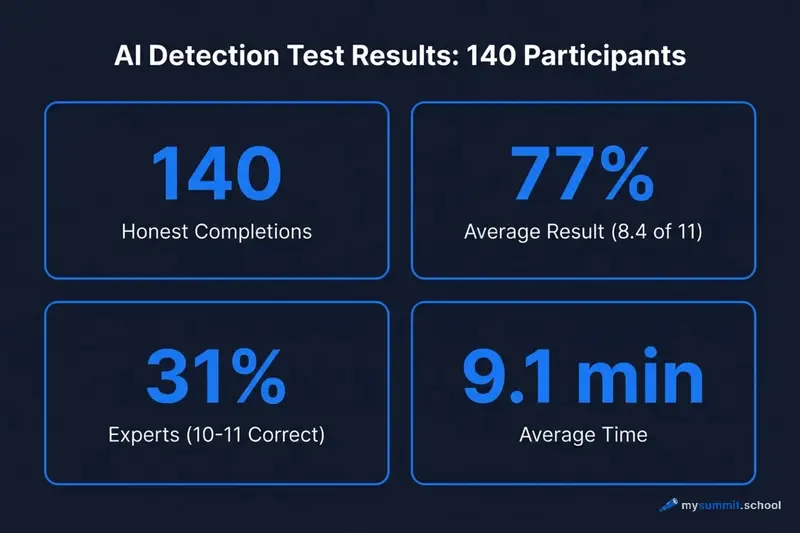

At mysummit.school, we ran an experiment: we created a quiz with 11 pairs of texts where participants had to figure out which was written by a human and which by an AI. From June to December, 140 people completed the entire quiz. The results surprised us – and the participants.

A note on context: This experiment was conducted with a Russian-speaking audience. All quiz texts were in Russian, and some examples in this article reference Russian-language content and platforms (VK, Bashorg, Samizdat). The patterns we discovered, however, are universal – they apply to AI-generated content in any language.

⚠️ A Note on Data Quality

After careful analysis, we cleaned the data:

- Excluded incomplete attempts (fewer than 11 questions answered)

- Corrected 21 cases of cheating (15%): participants who retook the test after memorizing the answers had their scores corrected

Final sample: 140 honest completions. Methodology details are at the end of this article.

The Key Numbers

140 people completed the quiz. The average score was 77% (8.4 out of 11 correct answers). It took an average of 9.1 minutes to finish.

77% correct answers is notably above random guessing (50%), but still far from perfect: roughly one answer in four was wrong.

Score Distribution: How Did People Do?

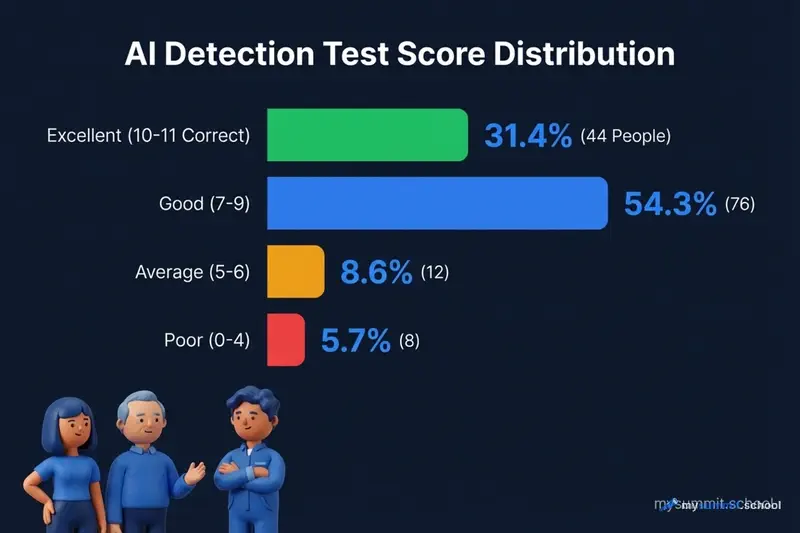

Nearly a third of participants (31.4%) scored 10–11 correct answers – that’s 44 people out of 140. Another 54.3% showed good results (7–9 correct). With careful analysis, distinguishing AI from human text is quite doable – 86% scored “good” or better.

But there’s another side: 5.7% of participants (8 people) got fewer than half right, which is worse than flipping a coin. This may indicate that without knowing the basic tells of AI writing, detecting AI content is very hard.
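For comparison, the share of pure guessers who would land in each score band follows from the binomial distribution with 11 questions and a 50% chance per pair. The sketch below computes those shares; it assumes each pair is an independent coin flip, which is the usual meaning of "random guessing" rather than anything measured in the quiz.

```python
from math import comb

N_QUESTIONS = 11  # pairs of texts in the quiz


def chance_probability(lo: int, hi: int, n: int = N_QUESTIONS) -> float:
    """Probability that a pure guesser (p = 0.5 per pair) gets between lo and hi answers right, inclusive."""
    return sum(comb(n, k) for k in range(lo, hi + 1)) / 2 ** n


# Score bands used in the article, plus the remaining "6 correct" band so the shares add up to 100%.
bands = {
    "10-11 correct": (10, 11),
    "7-9 correct": (7, 9),
    "6 correct": (6, 6),
    "0-5 correct": (0, 5),
}

for label, (lo, hi) in bands.items():
    print(f"{label}: {chance_probability(lo, hi):.1%} expected by chance")
```

By this calculation, chance alone would put about 0.6% of people at 10–11 correct and exactly 50% at 5 or fewer, against the 31.4% and 5.7% observed in the quiz.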

Which Questions Were Hardest?

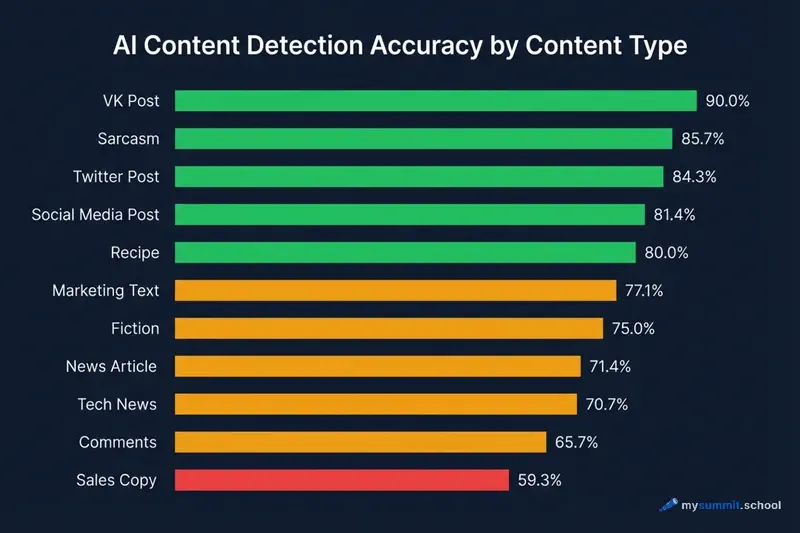

This is where things get interesting. Different types of content are detected at very different rates.

Top accuracy – VK posts (VK is Russia’s largest social network) at 90% and sarcasm at 85.7%. Participants easily picked up on the authentic voice of personal stories and subtle humor that AI handles poorly.

In the middle – recipes (80%), social media and Twitter posts (81–84%), fiction (75%). Here AI gets closer to human writing, but specific details and personal memories still give away the real author.

At the bottom – news articles and comments (68–71%) and sales copy (59.3%). The latter is practically a coin flip: corporate language is so formulaic that AI reproduces it perfectly.

How to Tell AI from Human: Patterns from the Quiz

Analyzing participant responses, we identified several signs that help detect AI-generated text.

AI gives itself away through:

Grandiose openings. A recipe starts with “a true journey through time, filled with the aroma of cinnamon.” A human just writes about waffles.

Over-structured text. Neat bullet points, numbered lists, “Top 5 must-reads” – AI loves organizing everything into tidy categories.

Marketing buzzwords. “Game-changers,” “competitive advantage,” “don’t miss this opportunity” – classic AI vocabulary.

Hashtags and emojis. Human post: “My grandparents. Simple Soviet-era people.” AI version ends with #loveforever #family 🙈

Text that’s too polished. No hesitations, no pauses, no filler words – the text reads like a press release.

Humans give themselves away through:

Specific period details. References to “20 u.e.” (a slang term for US dollars in 1990s Russia), “Rendezvous” (a popular café chain), “ZIL” (a Soviet-era truck brand) – AI either doesn’t know these nuances or doesn’t think to include them.

Colloquial speech. “And no Bali trips were needed,” “damn them all” – living language with real emotion.

Personal memories. “Rolled wafer tubes with condensed milk at auntie’s house” – a specific experience, not abstract “nostalgia.”

Imperfect structure. Humans jump between topics, leave thoughts unfinished – and that’s normal.

Three Difficulty Tiers

Easy to Detect (78–87% accuracy)

Social media posts – our participants were great at sensing the difference between an authentic LinkedIn post and an AI-generated one. The post about books for product managers was especially telling: 87% accuracy.

Sarcasm and humor – AI still struggles with subtle humor. A text from Bashorg (a Russian humor site, similar to Bash.org) about sparrows, pigeons, and construction workers in hard hats was identified by 81% of participants.

Twitter – short personal stories with emotion are easy to distinguish from AI-generated ones.

Medium Difficulty (68–78% accuracy)

Recipes – a human stroopwafel recipe with childhood memories was identified by 78%. The AI version with a grandiose intro about “a journey through time” gave itself away.

Tech news – a 1998 article from Cnews about 1C (Russia’s dominant enterprise software) certification was recognizable thanks to period-specific details.

Fiction – prose from Samizdat (a Russian self-publishing platform) was easier to detect than we expected (78%).

Hard to Detect (54–68% accuracy)

Product reviews – only 62% accuracy. An emotional bag review from irecommend.ru and the AI version with emojis were nearly indistinguishable.

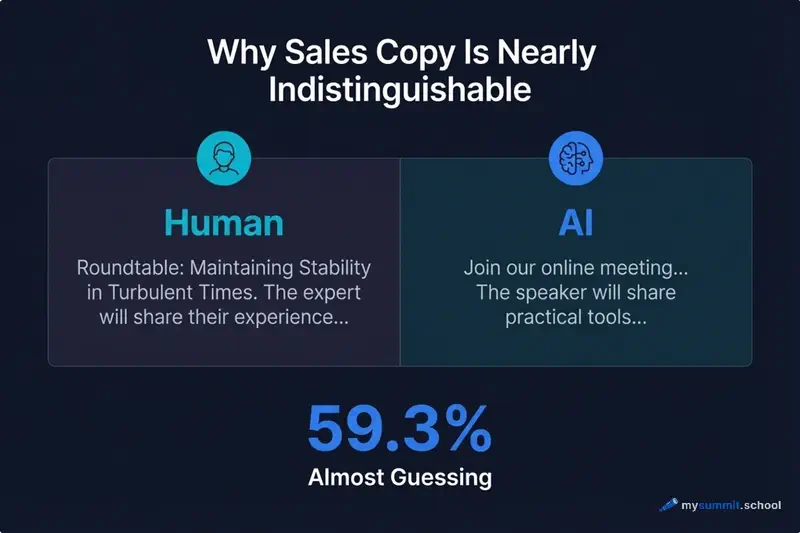

Sales copy – just 54%, practically guessing. A roundtable announcement from a professional association and its AI version used exactly the same clichés.

Why Sales Copy Is the Hardest to Detect

Both texts use the same templates: “the expert will share their experience,” “practical tools,” “don’t miss this opportunity.” Corporate language is so standardized that AI reproduces it perfectly.

Takeaway: when human-written text is already formulaic, AI becomes indistinguishable. Only 59% of participants could tell the sales copy apart – that’s practically a coin flip.

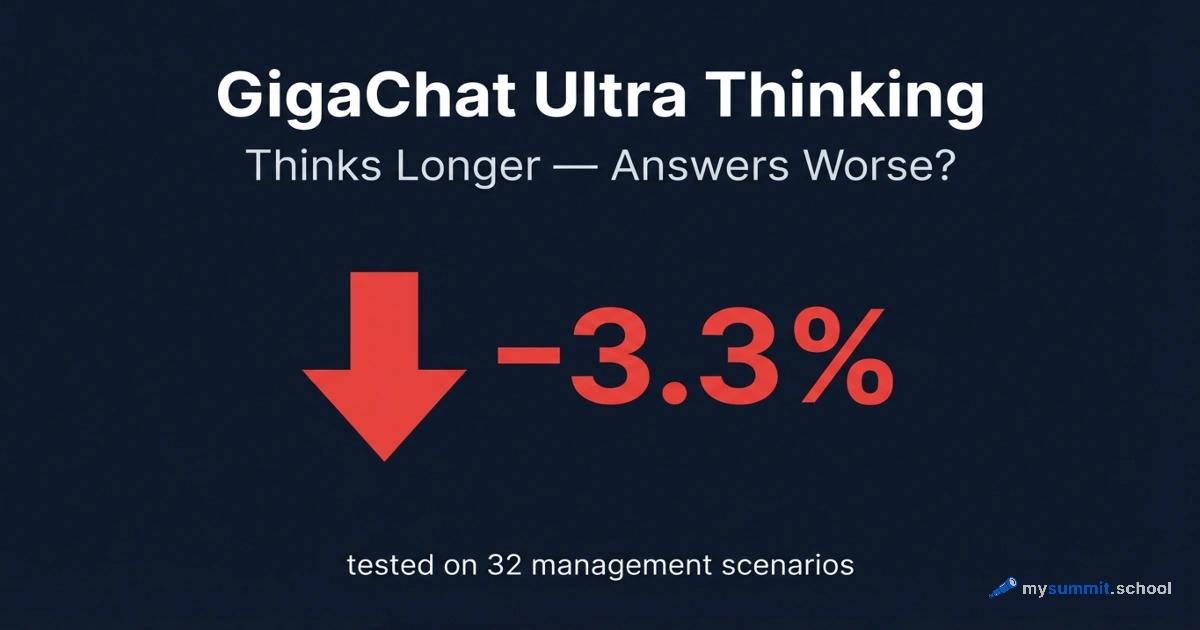

Response Time: Thinking Longer Doesn’t Mean Guessing Better

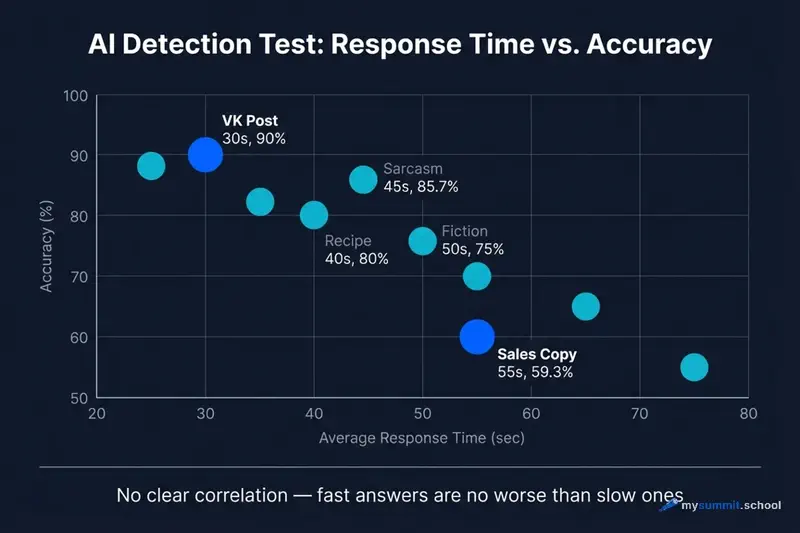

An interesting pattern: the average response time was 41.7 seconds per question, yet spending longer on a question did not produce more correct answers.

The most accurately detected questions (VK social media posts, 90%) were answered quickly – without much deliberation. Sales copy, on the other hand, took more time, but accuracy remained low (59%).

In this quiz, lengthy analysis did not beat intuition: first impressions were often correct.
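A claim like "time doesn't go with accuracy" is commonly checked by pairing each participant's average time per question with their score and computing the Pearson correlation coefficient. The article does not say which statistic it used, so the function below is only an illustration; the per-participant input lists are an assumed data layout.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of the same length (at least 2 values)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Covariance and spreads without the common 1/n factor (it cancels in the ratio).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when one sequence is constant")
    return cov / (spread_x * spread_y)


# Hypothetical usage, one entry per participant:
# r = pearson(avg_seconds_per_question, correct_answers)
```

A coefficient near 0 means no linear relationship. A per-question view can look different from a per-participant one, since harder items such as the sales copy drew longer answers.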

What This Means for Managers

1. Your Intuition Works – But Not Everywhere

77% overall accuracy is well above random guessing, but 23% of answers were still wrong. There are content types where intuition fails most often:

- Sales copy (59%) – practically a coin flip

- Comments (66%) – AI does a good job imitating conversational style

- News articles (68–71%) – formal style is easy to reproduce

2. The More Formulaic the Text, the Harder It Is to Detect AI

When the human original relies on clichés and standard phrases, AI reproduces it flawlessly, and telling the two apart becomes nearly impossible.

Practical takeaway: evaluate content by its substance, not by how “human” it feels.

3. Quick Decisions Are No Worse Than Slow Ones

The data shows that lengthy analysis doesn’t improve accuracy (average time: 41.7 seconds per question). Trust your first impression, especially for social media and personal stories, where intuition works best.

Take the Quiz Yourself

We’re still collecting data. Test your intuition and compare your result with the average.

What you’ll get:

- 11 rounds with text pairs

- Instant results with explanations

- Your percentile – how you compare to others

Conclusions

- 77% average accuracy among those who completed the test: people detect AI noticeably better than random guessing, but about 23% of answers were wrong

- 31% of participants were experts (10–11 correct), 86% scored “good” or better

- Sales copy – the hardest category (59%), because AI and humans write it in the same formulaic way

- Social media and sarcasm – easiest (85–90%), AI still struggles with authentic voice and humor

- Time doesn’t affect accuracy – quick intuitive answers work just as well as lengthy deliberation (average time: 41.7 sec/question)

Methodology and Data Cleaning

What We Found During Analysis

While collecting data, we encountered quality issues:

- Many participants didn’t finish the test (fewer than 11 questions)

- 21 cases of cheating (15% of valid attempts) – users retook the test multiple times, memorizing correct answers

How We Cleaned the Data

Excluded incomplete attempts:

- Participants who answered fewer than 11 questions

- This distorted statistics by mixing those who gave up with those who completed the full quiz

Corrected cheating attempts:

- Detected via matching IP address + User Agent

- Pattern: 0–3 answers → abandoned → new attempt → 11 answers with high accuracy

- For such cases, we counted each question only once, using the first answer given to it across all attempts

- If question #3 was wrong in the first attempt but correct in the second, we counted it as wrong (by the second attempt they already knew the answer)
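The two cleaning rules can be sketched as follows. This is a hypothetical reconstruction, not the quiz's actual code: the `Attempt` record, its field names, the start timestamp used for ordering, and the requirement that at least one attempt answers all 11 questions are assumptions. Attempts are grouped by IP address plus User Agent, and each question is scored by the first answer given to it, so a question answered wrongly on the first try stays wrong.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

N_QUESTIONS = 11


@dataclass
class Attempt:
    # Hypothetical record of one quiz attempt.
    ip: str
    user_agent: str
    started_at: float  # assumed: start time, e.g. a Unix timestamp
    answers: Dict[int, bool] = field(default_factory=dict)  # question number -> answered correctly?


def clean_scores(attempts: List[Attempt]) -> Dict[Tuple[str, str], int]:
    """Return one score per (IP, User-Agent) pair, counting only the first answer to each question."""
    groups: Dict[Tuple[str, str], List[Attempt]] = defaultdict(list)
    for attempt in attempts:
        groups[(attempt.ip, attempt.user_agent)].append(attempt)

    scores: Dict[Tuple[str, str], int] = {}
    for key, group in groups.items():
        # Rule 1: drop the participant unless some attempt answered every question.
        if not any(len(att.answers) == N_QUESTIONS for att in group):
            continue
        # Rule 2: walk attempts chronologically; later answers never overwrite earlier ones.
        first_answer: Dict[int, bool] = {}
        for att in sorted(group, key=lambda a: a.started_at):
            for question, correct in att.answers.items():
                first_answer.setdefault(question, correct)
        scores[key] = sum(first_answer.values())  # True counts as 1
    return scores
```

One design caveat: matching on IP address and User Agent can merge different people who share a network and browser version (colleagues in one office, for example), so a rule like this may occasionally treat two honest participants as one.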

Worst case of cheating:

- One participant: 11 attempts (9 abandoned, 2 completed)

- The system automatically corrected their score, counting only the first answer to each question

Final Sample

140 honest completions – participants who:

- Answered all 11 questions

- Did not retake the test OR their repeated attempts didn’t improve results through memorization

Confidence level: 95%. For a sample of 140 people, the margin of error is at most ±8.3% (the worst case, assuming a 50/50 split).
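The ±8.3% figure matches the standard normal-approximation formula for a proportion, z * sqrt(p * (1 - p) / n), with z = 1.96 for 95% confidence, n = 140, and the most conservative assumption p = 0.5. A minimal sketch of that arithmetic:

```python
from math import sqrt


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion.

    z = 1.96 corresponds to 95% confidence; p = 0.5 gives the widest (most conservative) interval.
    """
    return z * sqrt(p * (1 - p) / n)


print(f"±{margin_of_error(140):.1%}")
```

Running it prints ±8.3%. Any other value of p gives a narrower interval, which is why p = 0.5 is the usual worst-case choice.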

Conclusion: data cleaning showed that people are better at detecting AI (77%) than the incomplete attempts would suggest. But the cheating rate (15% of attempts) suggests the test is genuinely challenging.

Want to Go Beyond Detecting AI – and Actually Use It Effectively?

Being able to tell AI text from human writing is a useful skill. But knowing how to apply AI to your own tasks – that’s a competitive advantage.

At mysummit.school, we teach managers to:

- Use ChatGPT, Claude, and YandexGPT for everyday tasks

- Write prompts that deliver the results you need

- Critically evaluate AI content and spot errors

- Integrate AI into your team’s workflows

3 free lessons – no theory, just practice.