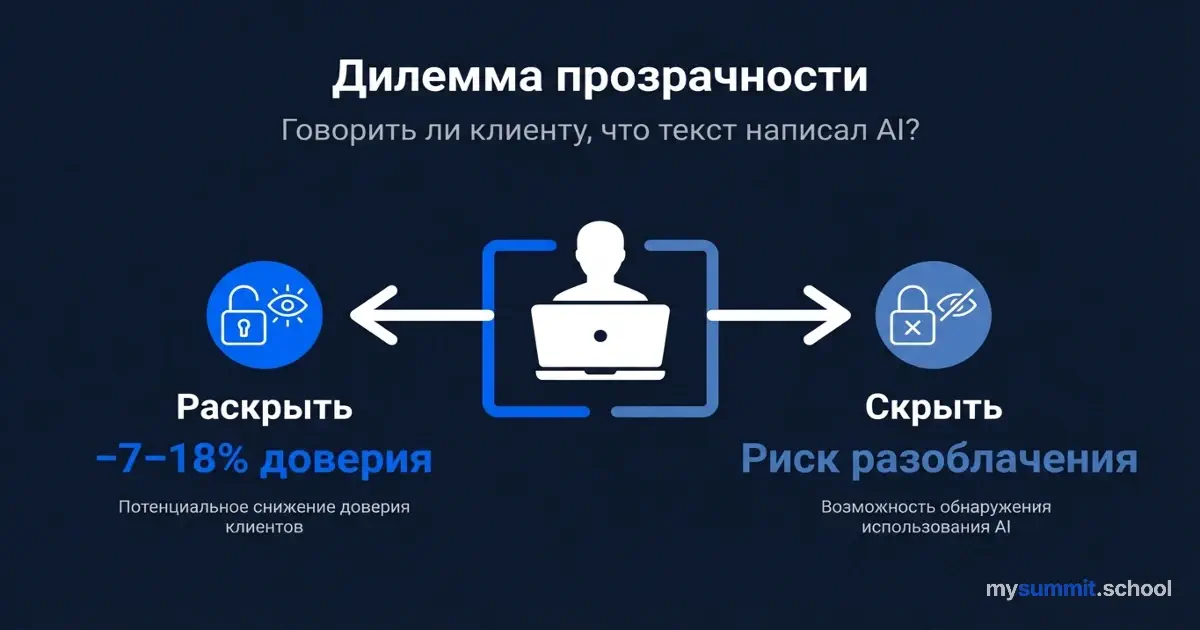

The Transparency Dilemma: Should You Tell Clients the Text Was Written by AI?

You’ve written the perfect client email. The tone is spot-on, the arguments flow, there’s even a well-placed joke. One problem: you didn’t write it. Claude did. Or ChatGPT. Or Gemini – doesn’t matter.

Now the question: do you tell the client?

Instinct says: “Of course not. Who cares how it was written if it’s written well?” Corporate ethics whispers: “You should be transparent.” And the science says something unexpected: both options erode trust – but in different ways and with different consequences.

The Experiment That Changed Everything

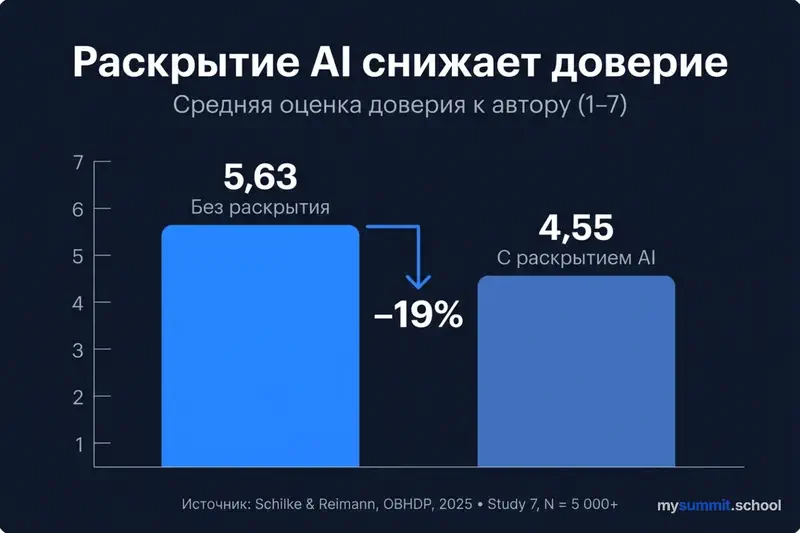

In 2025, in the journal Organizational Behavior and Human Decision Processes, researchers Oliver Schilke and Martin Reimann published a series of 13 pre-registered experiments involving more than 5,000 participants. The range of scenarios was unusually broad: professors writing recommendation letters; analysts preparing investment reviews; executives drafting corporate correspondence; creative professionals developing concepts.

The methodology was elegant in its simplicity. Participants received identical texts. The only variable was whether they knew AI had been involved in creating them: the actual use of the technology remained constant, and only the disclosure changed.

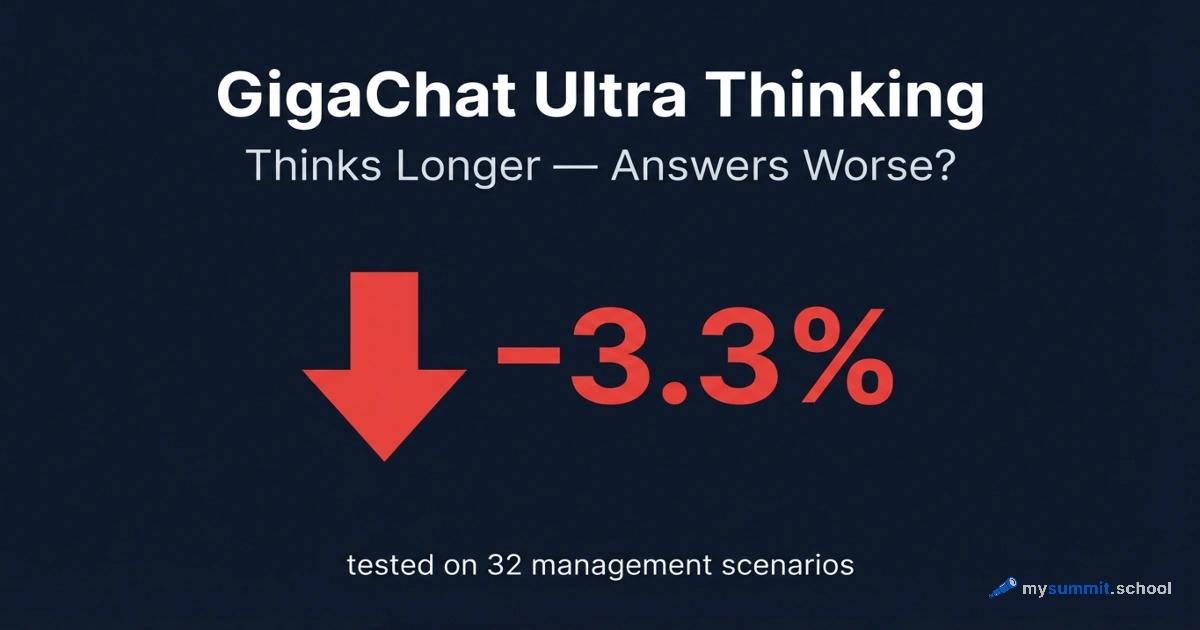

The result was counterintuitive: explicit disclosure of AI use systematically reduced trust – not in the quality of the text, but in the person who sent it. Specific numbers: trust in professors who used AI for recommendation letters dropped by ~16%. For investment funds that disclosed AI involvement in analytical reviews – by ~18%. Simply labeling something “created with AI assistance” cost 7–14 percentage points of trust.

The researchers also tested “softer” framings: “AI was only used for spell-checking,” “AI helped with structure.” It didn’t help. Any mention of AI triggered the same devaluation mechanism.

The Authenticity Penalty

The reason lies in what the researchers called the authenticity penalty. Schilke and Reimann identified three components that sequentially break down upon disclosure: typicality (is this normal behavior for this role?), commitment (did the author invest effort?), and authenticity (is there a real person behind these words?).

When recipients learn that a text was created with AI assistance, an instant cognitive shift occurs. They begin to wonder: are these your thoughts, or did an algorithm select statistically probable phrasing? Is the warmth in the email genuine, or is it a template?

Linguistic analysis confirms the paradox: AI-generated texts objectively score higher on emotional warmth, structural complexity, and vocabulary diversity. Yet they consistently lose to human-written texts on authenticity – the sense that there’s a real person behind the words with real intentions.

And the worst-case scenario isn’t your own disclosure. As the researchers put it: “If a third party reveals that you used AI – that’s the worst possible outcome.” In that case, the authenticity penalty is compounded by a deception penalty. The mechanism mirrors what happens with algorithmic management: the loss of perceived control triggers a chain reaction of distrust.

The Blind Test: When AI Wins – and Loses

The scale of the authenticity penalty is vividly illustrated by research from Gagan Jain, Samridhi Pareek, and Per Carlbring (2024).

In the first phase (blind testing), 140 participants evaluated responses to 10 psychological support scenarios – from interpersonal conflicts to stress management. The source was hidden. AI responses (ChatGPT) scored at or above the level of clinical psychology graduate students across all three parameters – authenticity, professionalism, and practicality. The differences were not statistically significant.

In the second phase (six months later, with disclosure), 111 participants from the same group evaluated the exact same responses, but now they knew who wrote them. The result: authenticity ratings for human responses increased (from 36.33 to 37.66), while AI ratings stayed flat (34.85 in both phases).

The study revealed another curious nuance: general trust in AI correlated only with practicality ratings. No link was found with either authenticity or professionalism.

People are willing to accept that AI is useful. They’re not willing to accept that AI can be sincere. Utilitarian trust and emotional trust are two separate circuits, and disclosure destroys precisely the second one.

Catch-22: Hiding It Is Even Worse

The obvious solution, then – just don’t say anything. And indeed, Salesforce data (survey of 14,000 employees) shows: 64% pass off AI-generated content as their own. According to WalkMe, 78% use unapproved AI tools at work. Among C-level executives the figure is even higher – 93% admit to using “shadow AI.”

But researchers Schilke and Reimann warn: third-party disclosure – by a colleague, accidental discovery, a technical glitch – inflicts catastrophic and often irreparable damage to reputation.

This is no longer “they used a tool.” This is “they deliberately misled me.”

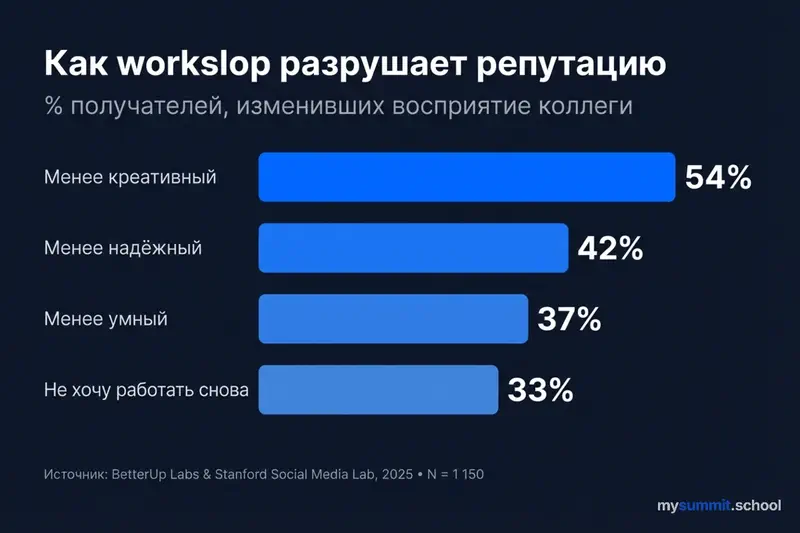

Data from a joint study by BetterUp Labs (Kate Niederhoffer) and the Stanford Social Media Lab (Jeff Hancock), surveying 1,150 full-time employees in the US, quantifies this effect. When recipients discover that a colleague’s work is AI-generated “workslop” (content with surface polish but no substantive depth):

- 54% consider the sender less creative

- 42% – less trustworthy

- 37% – less intelligent

- 33% wouldn’t want to work with that person again

A single exposed AI email can erase months of carefully built professional reputation. Which raises a question worth sitting with: how fragile was what we’d been calling “professional trust” all along?

The Hidden Tax: The Effort Heuristic and $9M Per Year

To understand the depth of this reaction, you need to look at the effort heuristic – a well-documented cognitive bias. Decades of research show: people instinctively equate visible effort with quality of output. A poem that “took three years” is rated higher than an identical one written “in half an hour.” A handmade suit costs more than a factory-made one, even if they’re indistinguishable.

AI broke this link. A flawless text can now be produced in seconds – and it has stopped functioning as a signal of competence. When a manager discovers that a subordinate’s brilliant report is the product of a single prompt, the feeling of deception kicks in, not because the report is bad, but because the assumed effort turned out to be an illusion. A synthesis by Microsoft Research (~50 studies) adds a related warning: users who received incorrect AI recommendations performed more slowly than those who did the task with no AI at all.

And the financial damage from workslop is concrete. Per BetterUp and Stanford:

| Metric | Value |
|---|---|
| Share of employees who received workslop in the past month | 40% |
| Average time to deal with a single incident | 1 hour 56 minutes |
| Cost of lost time per employee per month | $186 |
| Hidden cost for a 10,000-person organization | >$9M / year |

The workslop recipient spends nearly two hours restoring context, fact-checking, and correcting errors. AI saved the sender 30 minutes – and shifted two hours of work onto a colleague. One person’s “productivity” becomes everyone else’s loss.
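To make the table’s arithmetic explicit, here is a back-of-the-envelope sketch. It rests on our own reading, not on anything stated in the table: that the $186 figure is the monthly cost per employee who actually receives workslop, and that the 40% monthly prevalence holds across the whole organization. The function and constant names are illustrative, and the 30-minute saving is the example figure from the paragraph above.

```python
# Back-of-the-envelope reconstruction of the workslop "hidden tax".
# Assumptions (ours, not the study's stated method): $186/month is the cost
# per employee who receives workslop, and 40% of all employees receive it
# in a given month.

INCIDENT_MINUTES = 1 * 60 + 56  # average time to deal with one incident
SENDER_MINUTES_SAVED = 30       # illustrative saving for the sender


def annual_workslop_cost(headcount: int, prevalence: float,
                         monthly_cost_per_affected: float) -> float:
    """Yearly cost of time lost to workslop across an organization."""
    affected_employees = headcount * prevalence
    return affected_employees * monthly_cost_per_affected * 12


cost = annual_workslop_cost(10_000, 0.40, 186)
print(f"Estimated annual cost: ${cost:,.0f}")

# Minutes lost per incident once the sender's saving is netted out.
print("Net minutes lost per incident:", INCIDENT_MINUTES - SENDER_MINUTES_SAVED)
```

Under these assumptions the estimate comes to about $8.9M a year, in the same range as the published “over $9M” (the authors’ exact inputs may differ slightly). Each incident also nets out to 86 lost minutes once the sender’s half-hour saving is subtracted. If the $186 applied to every one of the 10,000 employees instead, the total would be about $22.3M, which suggests the per-affected-employee reading is the one behind the headline number.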

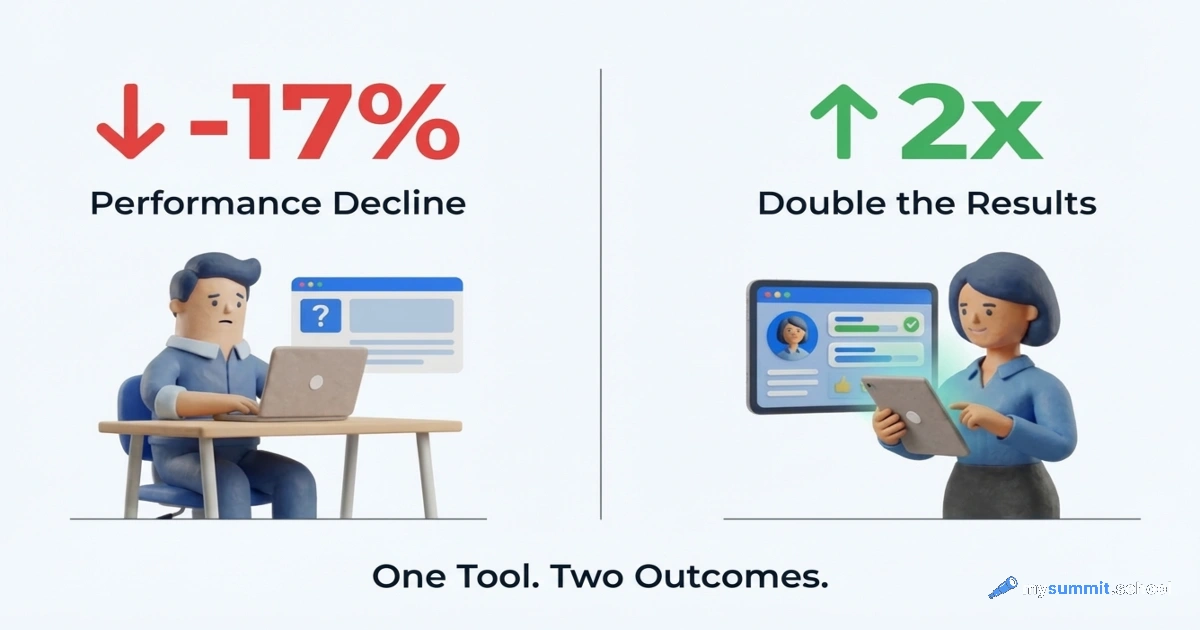

But BetterUp also found the opposite pattern. 28% of employees use AI as “pilots” – actively editing, verifying, supplementing with their own context. The remaining 72% are “passengers,” delegating to AI and sending the output without revision. The difference in outcomes: “pilots” show 3.6x higher productivity and 3.1x higher loyalty to their company. A similar pattern – work intensification through AI – has been described in research from the Institute for the Future of Work.

Why Explanations Don’t Help (But Social Proof Does)

The intuitive fix is to make AI more transparent: explain how it works, show the logic behind recommendations. But research from Harvard’s Laboratory for Innovation Science (LISH) found the opposite effect.

The scale of the experiment: 17,245 decisions about inventory allocation across 425 product lines in 186 stores. When managers could see the algorithm’s logic – which variables it considered, what weights it assigned – they started overriding it more often. The researchers called this mechanism “overconfident troubleshooting”: transparency created an illusion of understanding. “I can see what it factors in, and I know better.” Personal experience and intuition beat data.

Meanwhile, the “black box” – an algorithm with no visible logic – received significantly more trust, on one condition: employees knew that their colleagues had participated in developing and testing the system. The researchers called this “social proofing” – trust in technology through trust in the people behind it.

The financial impact was measurable: following algorithm recommendations yielded +$36.95 in additional revenue per allocation decision in the fourth quintile and +$104.96 in the top quintile. Managers who overrode the algorithm because of its “transparent logic” were losing real money.

Work from Microsoft Research confirms this paradox: explanations do not reduce over-reliance on AI, and in some cases amplify it. Users interpret the presence of explanations as a signal that the system has been vetted – even when the explanations are uninformative. Research by Vasconcelos et al. from Stanford showed: explanations reduce excessive trust only when they are simpler than the task itself. If the explanation is as complex as the task, the user rationally skips verification (note: the study was published back in 2023, but dealt with a sensitive topic – vaccination).

The Gap Between Leadership and Teams

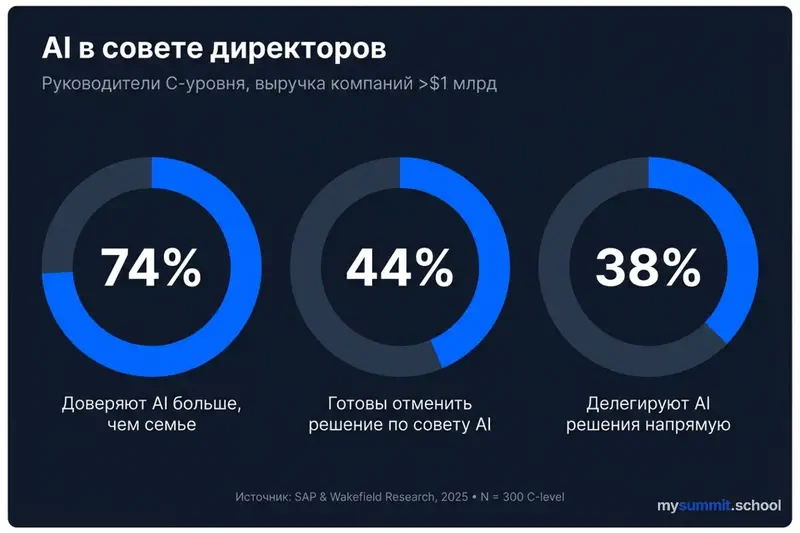

The situation is complicated by a radical divergence in how AI is perceived at different organizational levels – a pattern that’s clearly visible in corporate AI adoption more broadly.

A study by SAP and Wakefield Research (“AI Has a Seat in the C-Suite,” March 2025) surveyed 300 C-level executives at companies with revenues over $1 billion. The figures are striking:

- 74% trust AI more than family and friends for strategic recommendations

- 44% are willing to let AI override an already-made business decision

- 38% delegate decision-making directly to AI

- At companies where AI is already replacing traditional decision-making processes, that share reaches 55%

At the frontline level – the opposite dynamic. Per BCG’s “AI at Work 2025” (10,600+ employees, 11 countries), 72% of workers use AI regularly – but that’s an average. 78% of managers and 85%+ of executives work with AI weekly, while frontline adoption has stalled at 51% – unchanged since 2023. Only 25% of frontline employees receive any guidance from managers on AI use, and just 36% consider their training sufficient.

Meanwhile, 54% of employees are willing to use AI tools even without company approval – shadow AI keeps growing. And 41% of respondents fear losing their jobs to AI within the next decade, with executives worrying more (43%) than frontline workers (36%).

Low AI literacy amplifies the gap. Per HR Brew and Censuswide (2024), employees with low AI literacy experience 6x more anxiety, 7x more fear, and 8x more feelings of being overwhelmed – compared to AI-literate colleagues. And an experiment by Jacobs et al. (2021, 220 clinicians, antidepressant prescriptions) showed: doctors with low AI literacy were 7 times more likely to follow incorrect algorithm recommendations.

This is the mirror image of the transparency dilemma: leadership imposes AI “for efficiency,” employees respond with shadow AI “for survival.” Transparency from above breeds opacity below.

From Paradox to Protocol: What the Science Recommends

The research doesn’t just diagnose the problem – it offers concrete mechanisms for breaking the deadlock. Four strategies, each with an evidence base.

1. Normalization Instead of Disclosure

Schilke and Reimann point to a key nuance: the authenticity penalty kicks in when AI use is perceived as atypical for the role. A professor using AI for grading – atypical. An analyst using AI for a review – already closer to the norm.

The takeaway: instead of deciding whether to disclose or hide, make AI use normatively expected. When everyone on the team openly uses AI, the typicality component stops breaking down. It’s no longer “they outsourced their work to an algorithm” – it’s “they used a standard tool, like everyone else.”

The experience of SAP SuccessFactors confirms this: when AI is embedded into routine support processes – goal setting, peer feedback – it stops being an exception:

| Metric | Change |
|---|---|
| Feedback quality (per employee ratings) | 80% positive consensus |
| Feedback frequency (4+ times per year) | +47% |
| Goal-setting process satisfaction | +30% |

2. Social Proof Instead of Technical Transparency

The Harvard study at Tapestry offers a concrete recipe: involve end users in selecting, testing, and validating AI tools. When employees know that their peers with similar experience participated in building the system, trust in the “black box” turns out to be higher than trust in a transparent algorithm.

This scales: you don’t need to explain to everyone how the model works. It’s enough that people the team trusts have said: “We tested it – it works.” BetterUp’s data adds to this: training in relational skills (listening, asking questions, providing context) increases employees’ engagement with AI by 30% and raises the quality of outputs.

3. Cognitive Friction Points Instead of Automatic Acceptance

Buçinca et al. (2021, 199 respondents, Harvard) showed: if you ask people to think for themselves first and then show them the AI’s answer, they’re less likely to blindly defer to the model. Microsoft Research identifies three specific mechanisms:

- Uncertainty signals: An AI that says “I’m not confident about this answer” reduces over-reliance more effectively than numerical indicators like “73% confidence.” The first triggers critical thinking; the second creates an illusion of precision.

- AI self-critique: A model that argues against its own conclusion helps the user see weak spots. This is one of the most promising patterns from the 2025 synthesis.

- Prior judgment: The user formulates their own answer before seeing the AI’s recommendation. A simple change in sequence changes everything.

But there’s an important caveat: users rated these interfaces as the least convenient. There’s an inverse correlation between effectiveness and appeal. What works – annoys. What feels good – doesn’t help. When implementing such mechanisms, you need to provide clear explanations of why you’re doing it.
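The three mechanisms above can be sketched as a small review flow. This is a minimal, hypothetical Python example, not the interface used in the Microsoft Research synthesis or by Buçinca et al.: the `Recommendation` type, the confidence thresholds, and the wording of the hedges are all our assumptions. It withholds the AI’s answer until the user commits to their own, replaces a raw score with a verbal hedge, and surfaces the model’s strongest argument against its own suggestion.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    """Hypothetical AI output; field names are illustrative assumptions."""
    answer: str
    confidence: float     # model's internal score in [0, 1]
    counterargument: str  # the model's own case against its answer


def verbal_uncertainty(confidence: float) -> str:
    """Turn a score into a plain-language hedge instead of showing '73%'."""
    # Thresholds are illustrative, not values taken from the studies.
    if confidence < 0.6:
        return "I'm not confident about this answer."
    if confidence < 0.85:
        return "I'm fairly, but not fully, confident about this answer."
    return "I'm confident about this answer, but please double-check key facts."


def review_with_friction(user_answer: str, rec: Recommendation) -> str:
    """Reveal the AI's view only after the user has written their own answer."""
    if not user_answer.strip():
        # Prior judgment: no AI suggestion until the user commits first.
        raise ValueError("Write down your own answer before seeing the AI's.")
    lines = [
        f"Your answer: {user_answer}",
        f"AI suggestion: {rec.answer}",
        verbal_uncertainty(rec.confidence),  # uncertainty signal
        f"Strongest case against the AI's suggestion: {rec.counterargument}",  # self-critique
    ]
    if user_answer.strip().lower() != rec.answer.strip().lower():
        lines.append("You and the AI disagree: check the reasoning before deciding.")
    return "\n".join(lines)


# Usage: the reviewer commits to "Reject" before seeing the AI's "Approve".
rec = Recommendation("Approve", 0.55, "The Q3 figures in the draft are not yet audited.")
print(review_with_friction("Reject", rec))
```

The friction is deliberate: raising an error on an empty answer is exactly the kind of “inconvenient” step users dislike, which is why the caveat above recommends explaining the reason for it.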

4. “Pilots,” Not “Passengers”

BetterUp and Stanford offer a clear framework. The 28% of employees who act as “pilots” – those who actively edit, verify, and supplement AI output with their own context – show 3.6x higher productivity and 3.1x higher loyalty. The remaining 72% are “passengers,” delegating to AI without revision.

The difference isn’t in the tool – it’s in the mindset. And that mindset is trainable: employees who received relational skills training were 30% more active in engaging with AI and produced higher-quality content. Organizations that establish clear rules for AI use, define specific scenarios, and reinforce human judgment are significantly less likely to encounter workslop.

What to Do About It: An Action Map

The data points to concrete steps for any manager who uses AI and leads a team that uses AI.

For personal communication:

- Instead of “AI wrote this,” say “I prepared this with AI assistance and refined it for our context.” Focus on your contribution, not the tool – this way you avoid both the authenticity penalty and the deception penalty.

- Add traces of human thinking: personal experience, specific examples from your working relationship, references to previous discussions – anything AI couldn’t possibly know.

- Form your own position before launching AI. This transforms you from “passenger” to “pilot” – and reduces the risk of blind acceptance.

For team management:

- Implement process audits: evaluate not the polished output, but the path to it – drafts, iterations, points where the employee disagreed with AI.

- Normalize AI use within the team: when everyone openly works with AI, the typicality component stops breaking down.

- Build social trust: involve the team in selecting and testing AI tools. The Harvard study at Tapestry showed that trust in a system grows through trust in the colleagues who endorsed it.

- Put AI in people’s hands, not over their heads. The SAP experience: AI that helps employees builds trust; AI that monitors them destroys it.

- Close the AI literacy gap. Doctors with low AI literacy were 7 times more likely to follow incorrect recommendations. Training isn’t optional – it’s trust infrastructure.

The transparency dilemma has no perfect solution. Disclosure cuts trust by 7–18%. Concealment destroys it when the truth surfaces. But between these poles there’s a working zone: use AI as a tool, not as an author. Add your voice rather than delegating it.

It might be worth distinguishing two questions: “Did AI write this?” and “Is there a person behind this text who actually cares?” The first question is about technology. The second is about relationships. And it’s the answer to the second that determines whether you keep their trust.


How AI helped create this article (practicing what we preach – we disclose):

- Topic research. AI helped find and organize studies, articles, and case studies on the topic. But every source was read by a human, data was cross-checked against original publications, and the article draft was written by hand.

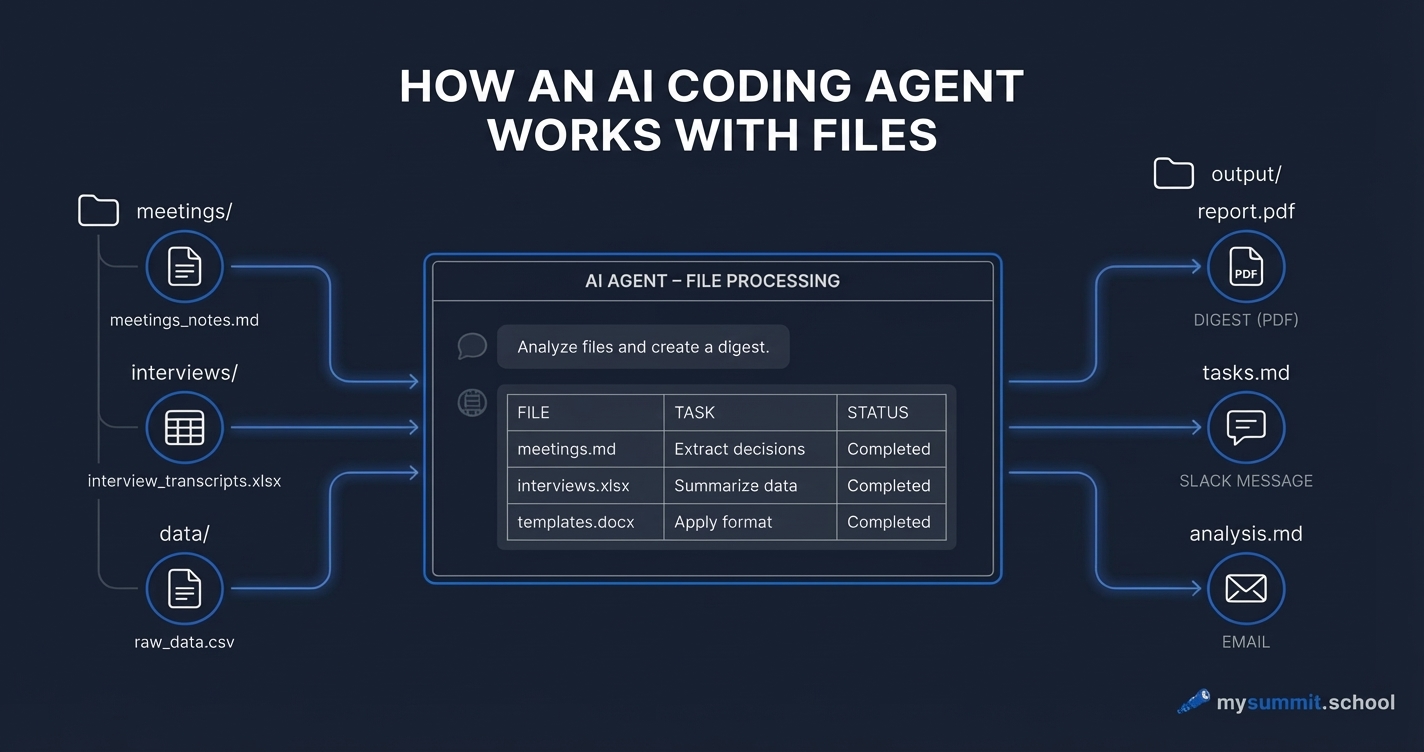

- Infographics. All images were AI-generated. But the prompts – descriptions of data, composition, and sources – were written by a human based on the research we’d read.

- Editing. AI checked the article structure for alignment with editorial standards and audience focus. But those standards, the brand voice, and the editorial policy were developed by us.

We’re “pilots,” not “passengers.”

Sources

- Schilke, O. & Reimann, M. (2025). The authenticity penalty of AI disclosure. Organizational Behavior and Human Decision Processes, 188.

- Niederhoffer, K. & Hancock, J. (2025). AI-generated workslop is destroying productivity. Harvard Business Review.

- Jain, G., Pareek, S. & Carlbring, P. (2024). Perception of AI-generated mental health responses. Internet Interventions, 38.

- BCG (2025). AI at Work 2025: Momentum Builds, but Gaps Remain.

- SAP & Wakefield Research (2025). AI Has a Seat in the C-Suite.

- Buçinca, Z. et al. (2021). To trust or to think: Cognitive forcing functions. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1).

- Vasconcelos, H. et al. (2023). Explanations can reduce overreliance on AI systems during decision-making. Proceedings of the ACM on Human-Computer Interaction, 7(CSCW1).

- Jacobs, M. et al. (2021). How machine-learning recommendations influence clinician treatment selections. Translational Psychiatry, 11, 108.

- Microsoft Research (2024). Appropriate Reliance on GenAI.