AI Doesn't Save Time – It Compresses It: 8 Months of Observations

Companies are worried about getting employees to use AI. The promise is seductive: AI will handle the drudgery – document drafts, information summarisation, code debugging – freeing up time for higher-value work.

But are companies ready for what happens if they actually succeed?

Researchers Aruna Ranganathan and Xingqi Maggie Ye conducted an 8-month observational study of roughly 200 employees at an American tech company that had rolled out generative AI. The company didn’t mandate AI use – it simply provided corporate subscriptions to commercial tools. Employees decided for themselves whether to adopt them.

The result was paradoxical. AI didn’t reduce work. It intensified it. Workers moved faster, took on more tasks, and spread their work across more hours in the day – often without any explicit external pressure. AI made “doing more” possible, accessible, and in many cases internally rewarding.

Strikingly, the same pattern shows up in other research. A Microsoft study found that 62% of product managers use Gen AI daily and 81% say AI saves time, yet 56% deny that effort has decreased. A paradox? No – a pattern.

Why AI Doesn’t Reduce Work: Three Mechanisms

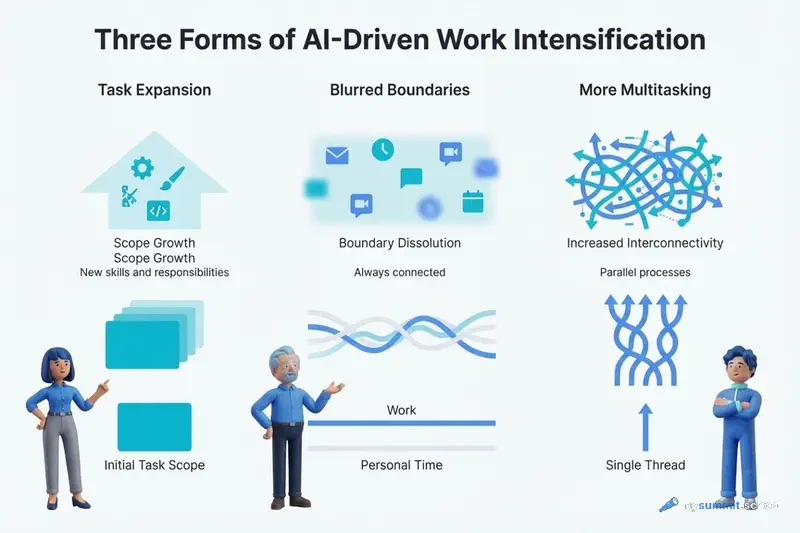

The researchers identified three forms of work intensification that emerged in the company they observed.

1. Task Expansion

AI fills knowledge gaps. This allows workers to take on tasks that previously belonged to someone else.

Product managers and designers started writing code. Researchers took on engineering tasks. People began attempting work they would have previously delegated, deferred, or avoided entirely.

Generative AI made these tasks suddenly accessible. The tools provided what many experienced as an “expansive cognitive boost” – they reduced dependency on others, offered immediate feedback, and allowed course corrections on the fly. Workers described it as “just trying things out with AI,” but these experiments accumulated into a meaningful expansion of job scope. In effect, workers increasingly absorbed work that would previously have justified additional hiring or headcount.

There was a side effect to this expanded scope. Engineers, in turn, spent more time reviewing, correcting, and mentoring colleagues whose AI-generated or AI-assisted work now required oversight. These demands went beyond formal code review. Engineers increasingly found themselves coaching colleagues who were “vibe-coding” and finishing partially completed pull requests. This oversight often arose informally – in Slack threads or quick desk consultations – adding load on engineers that wasn’t visible in any workload tracker.

The paradox: AI was supposed to lighten the team’s burden. Instead, it redistributed the load – some people took on more tasks, others spent more time checking.

2. Blurred Boundaries Between Work and Not-Work

AI made starting a task too easy. It lowered the friction of facing a blank page or an unknown starting point. As a result, workers began wedging small portions of work into moments that used to be breaks.

Many prompted AI during lunch, in meetings, while a file was loading. Some described sending “one last quick prompt” right before stepping away from their desk so AI could work while they were gone.

These actions rarely felt like “doing more work.” But over time, they created a workday with fewer natural pauses and more continuous engagement.

The conversational style of prompting softened the experience further: typing a line to an AI system felt closer to chatting than to executing a formal task, making it easy to extend work into evenings or early mornings without conscious intent.

Some workers described realising – often in retrospect – that as break-time prompting became habitual, downtime no longer delivered the same sense of recovery. Work started to feel less bounded and more ambient – something that could always be nudged forward just a little more.

The boundary between work and not-work didn’t disappear. But crossing it became easier.

3. More Multitasking

AI introduced a new rhythm in which workers managed multiple active threads simultaneously: manually writing code while AI generated an alternative version, running several agents in parallel, reviving long-deferred tasks because AI could “handle them” in the background.

Workers did this partly because they felt they had a “partner” who could help move through the workload.

While the sense of having a “partner” created a feeling of momentum, the reality was constant attention-switching, frequent checking of AI outputs, and a growing number of open tasks. This produced cognitive load and a persistent feeling of juggling, even when the work appeared productive.

Over time, this rhythm raised speed expectations – not necessarily through explicit demands, but through what became visible and normalised in daily work.

Many workers noted they were doing more simultaneously – and feeling greater pressure – than before using AI, even though the time savings from automation were meant to reduce that pressure.

The Self-Reinforcing Cycle: From Savings to Overload

All of this created a self-reinforcing cycle.

AI accelerated certain tasks → this raised speed expectations → higher speed made workers more dependent on AI → increased dependency expanded the range of what workers attempted → a broader range further increased the volume and density of work.

One engineer summed it up: “You thought that, maybe, since you can be more productive with AI, you’d save time, you could work less. But actually you don’t work less. You just work the same amount or even more.”

Organisations might view this voluntary work expansion as an unambiguous win. After all, if workers are doing it on their own initiative, what’s the problem? Isn’t this the explosive productivity growth we were promised?

But the study reveals the risks of letting work informally expand and accelerate. What looks like higher productivity in the short term can mask a quiet build-up of workload creep and growing cognitive strain, as employees juggle multiple AI-assisted workstreams.

Because the additional effort is voluntary and often framed as enjoyable experimentation, it’s easy for leaders to miss how much extra load workers are carrying. Over time, overwork can impair judgement, increase error rates, and make it harder for organisations to distinguish genuine productivity gains from unsustainable intensity.

For workers, the cumulative effect is fatigue, burnout, and a growing sense that work is harder to step away from.

I think it’s worth distinguishing between productivity and intensity.

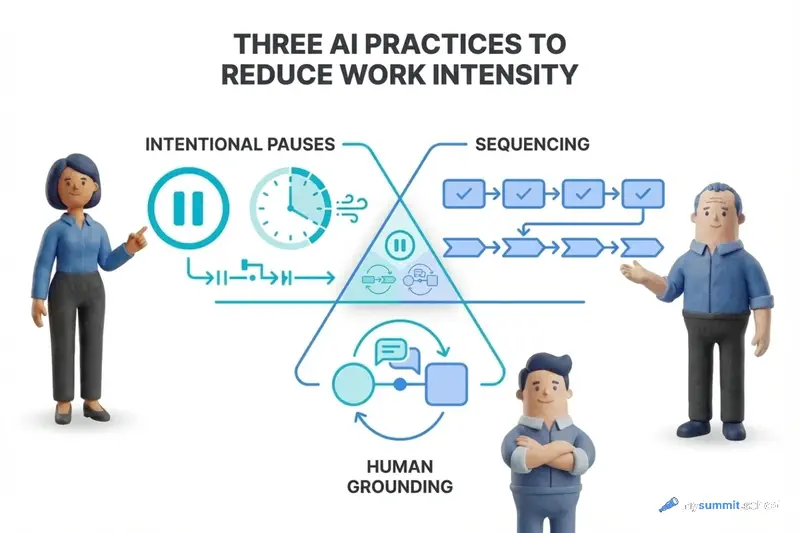

AI Practice: Three Practices Against Intensification

Rather than passively reacting to how AI tools reshape work, both individuals and companies need to adopt an “AI practice” – a set of intentional norms and routines that structure how AI is used, when it’s appropriate to stop, and how work should and shouldn’t expand in response to newfound capability.

Without such practices, the natural tendency of AI-assisted work is not reduction but intensification – with consequences for burnout, decision quality, and long-term sustainability.

The researchers propose three key practices.

1. Intentional Pauses

As tasks accelerate and boundaries blur, workers can benefit from brief, structured moments that regulate pace: protected intervals for assessing alignment, revisiting assumptions, or absorbing information before moving forward.

These pauses wouldn’t slow work overall. They would simply prevent the quiet accumulation of overload that arises when acceleration goes unchecked.

For example, a decision pause might require one counterargument and one explicit link to organisational goals before a major decision is finalised – widening the field of attention just enough to guard against drift.

Embedding such pauses into daily workflow is one way organisations can support better decisions, healthier boundaries, and more sustainable forms of productivity in AI-augmented environments.

2. Sequencing

As AI makes constant background activity possible, organisations can benefit from norms that intentionally shape when work moves forward, not just how fast.

This includes batching non-urgent notifications, holding updates until natural checkpoints, and protecting focus windows in which workers are shielded from interruptions.

Rather than reacting to every AI-generated output as it appears, sequencing encourages work to advance in coherent phases.

When coordination is structured this way, workers experience less fragmentation and fewer costly context switches, while teams maintain overall throughput.

By regulating the order and timing of work – rather than demanding continuous responsiveness – sequencing can help organisations preserve attention, reduce cognitive overload, and support more thoughtful decision-making in AI-driven workplaces.

3. Human Grounding

As AI enables more solo, self-sufficient work, organisations can benefit from protecting time and space for listening and human connection.

Brief opportunities to connect with others – whether through short check-ins, moments of shared reflection, or structured dialogue – interrupt the continuous solo engagement with AI tools and help restore perspective.

Beyond perspective, social exchange supports creativity. AI provides a single, synthesised point of view, but creative insight depends on exposure to multiple human perspectives.

By institutionalising time and space for listening and dialogue, organisations re-anchor work in social context and help counteract the depleting, individualising effects of rapid, AI-mediated work.

What This Means for Leaders and Teams

The promise of generative AI lies not only in what it can do for work, but in how thoughtfully it is integrated into daily rhythm.

The study shows that, without intention, AI makes it easier to do more – but harder to stop.

AI practice offers a counterweight: a way to preserve moments of recovery and reflection even as work accelerates. The question facing organisations is not whether AI will change work, but whether they will actively shape that change – or let it quietly shape them.

For team leaders:

Monitor workload creep, not just productivity. If the team is working faster with AI, that doesn’t necessarily mean they’re working sustainably. Check regularly: is voluntary task expansion masking a growing burden?

Formulate explicit norms for AI delegation. Which tasks can be safely delegated? Which require human oversight? If this isn’t spelled out, workers will experiment without boundaries – often with consequences for quality.

Create protected focus windows. If AI enables continuous activity, actively protect time without interruptions. Not every notification requires an immediate response. Not every AI output requires immediate review.

For individual workers:

Distinguish between “I can” and “I should.” The fact that AI makes a task possible doesn’t mean you should take it on. Expanding your scope without accounting for long-term sustainability is a path to burnout.

Set prompting boundaries. If prompting AI has become so easy that you do it during breaks, ask yourself: is this still a break? Or has work simply become ambient?

Demand decision pauses for high-stakes tasks. Before finalising an important decision based on AI output, articulate one counterargument and one explicit link to your goals. This doesn’t slow work – it guards against drift.

For organisations:

Develop AI-specific best practices for your domain. Generic prompt engineering courses aren’t enough. Workers need cases from their field: how to use AI for specifications, data analysis, design, code – with explicit examples of what works and what doesn’t.

Institutionalise human grounding. As AI enables more solo work, protect time for human interaction – check-ins, reflection, dialogue. Creativity and perspective require exposure to multiple human viewpoints, not just a single synthesised AI perspective.

Revisit evaluation criteria. If roles are shifting – PMs writing code, researchers taking on engineering tasks – perhaps success criteria should shift too. Are you evaluating the ability to critically review AI outputs? Or only the speed of task completion?

Productivity or Intensity?

The 8-month study of a tech company reveals what many intuitively sense but can’t always articulate: AI changes not so much what we do as how we experience work.

AI didn’t reduce the workload. It compressed it.

Workers began doing more tasks in the same time. Taking on responsibilities that used to belong to others. Spreading work into hours that used to be breaks. Juggling multiple threads simultaneously because AI created the illusion of a “partner” who could handle the load.

But the cumulative effect isn’t more free time. It’s fatigue. Blurred boundaries. A growing sense that work is harder to put down.

The paradox is that AI made work easier to start, but not easier to stop.

It’s worth asking: what matters more – productivity or sustainability? Speed or quality of judgement? More tasks or healthier boundaries?

AI won’t answer these questions for us. That requires intentional practices, explicit norms, and active shaping of how AI integrates into the workflow.

The question isn’t whether AI will change work. The question is whether we will actively shape that change – or let it quietly shape us.

Have you encountered similar intensification patterns in your team? How do you balance productivity and sustainability?

Sources

- AI Doesn’t Reduce Work–It Intensifies It (Harvard Business Review) – original article by Aruna Ranganathan and Xingqi Maggie Ye on the 8-month study

- Product Manager Practices for Delegating Work to Generative AI (arXiv:2510.02504) – Microsoft’s study on how 62% of PMs use Gen AI daily, yet 56% deny that effort has decreased

- AI Delegation: Why Accountability Stays With Humans – our analysis of Microsoft’s research on practices for delegating work to generative AI