6,600 Commits in a Month: Workflow Lessons from the Creator of OpenClaw

One developer. 6,600 commits. One month.

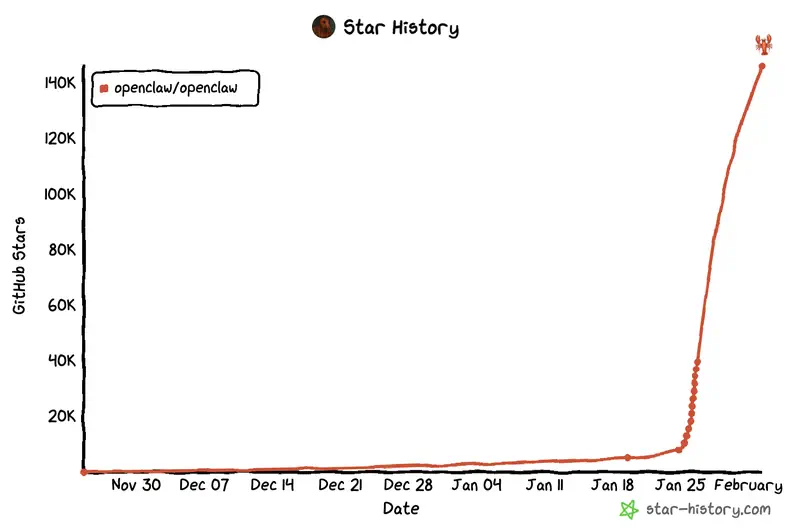

More than most teams ship in a quarter. More than many startups produce in half a year. This is not a marketing metric – it is the real-world productivity of Peter Steinberger, creator of OpenClaw (formerly known as clawdbot), one of the most viral AI projects of January 2026.

Steinberger describes the project plainly: “It’s not a company – it’s one guy sitting at home enjoying the process.” After a successful exit from PSPDFKit, he could have taken a break. Instead, he is building an AI assistant that manages his calendar, sends emails, and checks him in for flights. “AI that actually gets things done” – that is how he articulates the project’s mission.

How can one person work like an entire company? What skills are critical when working with AI agents? Why does experience managing a team of 70+ people turn out to be the key to AI-driven productivity? And how does an engineer’s focus shift – from writing code to designing architecture?

Let us examine the actionable lessons from Peter Steinberger’s workflow – applicable to any AI-assisted project, even if you never install OpenClaw itself.

The project has been successfully rebranded as OpenClaw following explosive growth to 145,000 GitHub stars and 2 million visitors in a single week. The new name reflects the project’s open-source philosophy and its focus on data sovereignty – running the AI assistant locally on your own infrastructure.

In Part 1 we examined the critical security issues of OpenClaw and debunked the myth about needing a Mac Mini. But beyond the vulnerabilities and marketing hype lies something far more valuable: workflow experience that applies to any AI-assisted project, regardless of which tool you choose.

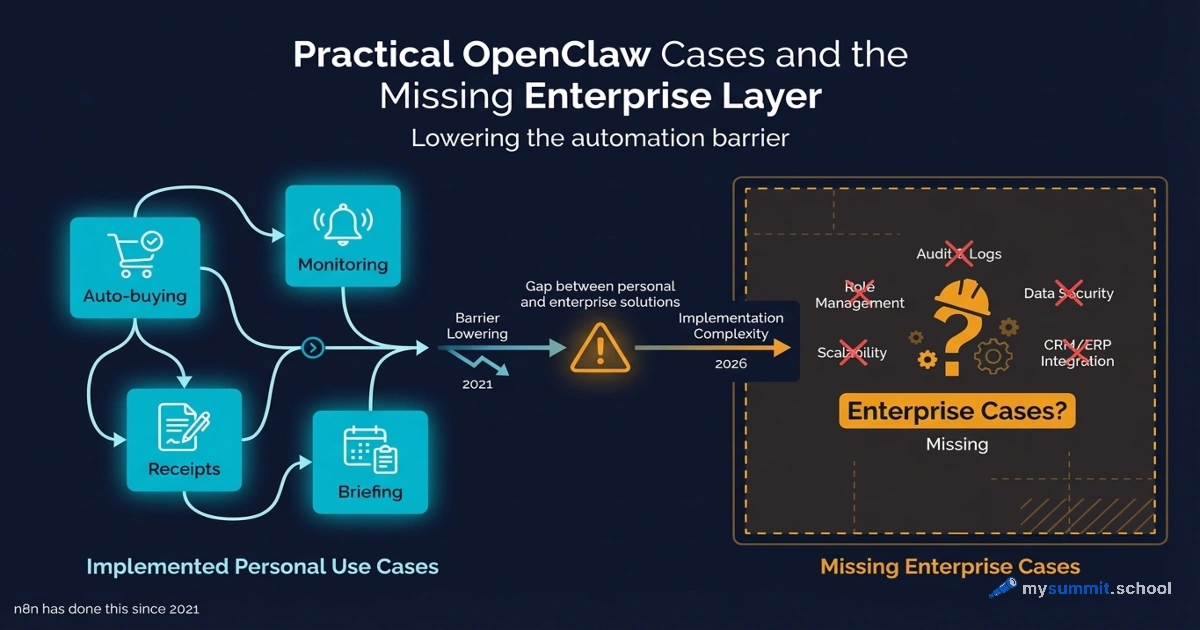

What is OpenClaw? It is a local, open-source AI assistant running on your own infrastructure. Unlike SaaS solutions, OpenClaw gives you full control over your data. It integrates with WhatsApp, Telegram, Discord, Slack, Teams, and other messaging platforms.

An important caveat: OpenClaw is not yet ready for mainstream adoption. Setup requires significant time and expertise. There are also security questions – unavoidable for a bootstrapped project, but especially critical for a tool acting on your behalf. That said, the project’s traction reveals a real hunger for AI systems that deliver on the promise of automation – not just faster code writing.

Context: The AI Agent Race

OpenClaw did not emerge in a vacuum. In early 2026, the largest tech companies are actively developing AI agents – systems capable of completing complex tasks on a user’s behalf.

Anthropic recently introduced Claude Cowork – an experimental product that quickly creates spreadsheets and organizes files. In late 2025, Manus attracted global attention with a demonstration of a more general-purpose AI agent capable of screening resumes and building travel itineraries. Meta recently agreed to acquire Manus, opening up possibilities for integrating these capabilities into WhatsApp and other social applications.

Steinberger’s bet is different: not on building one specific agent, but on managing a fleet of agents. In the Insecure Agents podcast he explained: “I started with a simple question: why don’t I have an agent that watches over my other agents?” In essence, OpenClaw decides which automated systems to use for any given task.

Architectural Approach: “Prompt Requests” Instead of Pull Requests

Peter Steinberger has radically reimagined the traditional development process. Pull requests have been replaced by “prompt requests” – he is interested in what prompt generated the code, not the code itself. Code reviews are dead in this workflow – replaced by architecture discussions.

Even in the OpenClaw team’s Discord channel, they do not discuss code – only architecture and key decisions. Paradoxically, this works: most code, as Steinberger puts it, is “boring data transformation.” Energy should go into system design, not debating formatting style or which pattern to use for the next data mapper.

In an interview with Gergely Orosz (The Pragmatic Engineer), Steinberger elaborates: “When I get a PR, I’m more interested in the prompts than the code. I ask people to add prompts, and I read the prompts more than the code. For me, that gives a much higher signal about how the person arrived at the solution. What did they actually ask? How many corrections were needed? That tells me more about the outcome than the code itself.”
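To make the idea concrete, here is a minimal sketch of what a "prompt request" record could look like. The `PromptRequest` class and its fields are hypothetical, invented for illustration; the source does not describe any particular format. The only assumption is that a contribution carries its prompts in order, so a reviewer can see what was asked and how many corrections followed.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRequest:
    """A contribution described by the prompts that produced it (hypothetical schema)."""

    title: str
    prompts: list[str] = field(default_factory=list)  # prompts in the order they were sent

    @property
    def corrections(self) -> int:
        # Every prompt after the first one is counted as a follow-up correction.
        return max(len(self.prompts) - 1, 0)

    def summary(self) -> str:
        return f"{self.title}: {len(self.prompts)} prompt(s), {self.corrections} correction(s)"


request = PromptRequest(
    title="Add voice message transcription",
    prompts=[
        "Transcribe incoming voice messages and reply with the text.",
        "Fall back to a remote transcription API if the local model is missing.",
    ],
)
print(request.summary())
```

Counting every later prompt as a correction is a deliberate simplification. In practice a reviewer would read the prompts themselves, which is the point Steinberger makes.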

From Code Review to Architecture Review

Traditional development processes focus on the quality of every line of code. Steinberger’s AI-assisted workflow shifts that focus: if the architecture is right, the specific implementation is less critical. AI can rewrite the code ten times until it gets the right result – what matters is that the direction is correct.

This does not mean there is no quality control. It means quality control moves up a level: instead of “is this loop formatted correctly,” the question becomes “is this subsystem designed correctly for extensibility.”

Workflow Mechanics: Running 5–10 Agents in Parallel

How can one person ship more code than most teams do in a quarter? Steinberger runs 5–10 agents simultaneously, staying in a state of “flow.” While one agent works on feature A, others are concurrently implementing B, C, and D. This is not traditional development – it is orchestrating autonomous executors.
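The orchestration pattern described above can be sketched with Python's `asyncio`. This is an illustration under stated assumptions, not Steinberger's actual setup: `run_agent` is a hypothetical stand-in that only simulates work, and the limit of five concurrent agents simply mirrors the lower end of the range he mentions.

```python
import asyncio


async def run_agent(task: str) -> str:
    """Hypothetical stand-in for a real agent call; it only simulates work."""
    await asyncio.sleep(0.01)  # placeholder for a long-running agent session
    return f"done: {task}"


async def orchestrate(tasks: list[str], max_parallel: int = 5) -> list[str]:
    """Run every task, with at most max_parallel agents active at once."""
    limit = asyncio.Semaphore(max_parallel)

    async def guarded(task: str) -> str:
        async with limit:
            return await run_agent(task)

    # gather returns results in the same order as the input tasks.
    return await asyncio.gather(*(guarded(t) for t in tasks))


results = asyncio.run(orchestrate(["feature A", "feature B", "feature C", "feature D"]))
print(results)
```

The semaphore is the only coordination here. The human work of planning each task before launch, which the next section covers, is what the code cannot show.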

The Planning Phase: A Critically Important Step

Steinberger himself spends a surprisingly long time on planning before launching an agent. He challenges the agent, adjusts the plan, pushes back. Only when the plan is genuinely detailed and well-considered does he launch it and move on to the next task.

This is not “magic one-line prompting” as the marketing narratives suggest – it is an engineering investment comparable to building a LangChain workflow from scratch. The difference is that the time is spent on planning and architecture, not on writing every line of code.

Secret prompting hack: reference other projects. “One of the secret hacks for effective AI use is to reference other products. I constantly tell it: look in this folder, because I solved a similar problem there,” explains Steinberger. AI reads code well and understands ideas you have already implemented – there is no need to re-explain them from scratch.

Steinberger prefers Codex precisely because Codex goes off and executes long-running tasks, while Claude Code comes back asking for clarifications – which is disruptive when the plan is already fully worked out. This illustrates the importance of choosing the right tool for the right phase of work.

In a video interview, Steinberger elaborates on the difference: “For coding, I prefer Codex because it can navigate large codebases. You can literally send a prompt and then push to main – I have 95% confidence it actually works. With Claude Code you need more tricks, more ‘charades’ to get the same result.” At the same time, for personality and humor in the Discord bot, he chooses Opus: “I don’t know what they trained the model on, how much Reddit is in there, but it behaves excellently in Discord. Jokes that are actually funny – I’ve only seen that with Opus.”

Close the Loop: Self-Verifying Systems

AI agents must verify their own work – a core principle of Steinberger’s workflow. He designs systems so that agents can compile, lint, execute, and validate their outputs independently.

The moment this principle “clicked” for Steinberger happened in Marrakesh. He sent the agent a voice message via WhatsApp – a feature he had not even implemented. A read indicator appeared, and ten seconds later the agent responded as if nothing unusual had happened. Steinberger asked: “How did you do that?” The agent explained: “You sent a link to a file with no extension. I looked at the file header, recognized it as Opus, used FFmpeg on your Mac to convert it to WAV. I wanted to use a local transcriber, but there was an installation error. So I found the OpenAI key in your environment, sent it via curl, got the transcription, and replied.” “That was the moment I realized: these things are incredibly smart, resourceful beasts,” Steinberger recalls.
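The first step the agent described, identifying a file with no extension from its header, can be sketched as follows. This is a simplified heuristic, not the agent's actual procedure. It relies on two real format markers: Ogg streams begin with the bytes `OggS`, and an Opus stream inside Ogg carries `OpusHead` in its first page. The function name and sample bytes are made up for illustration.

```python
def sniff_audio_format(header: bytes) -> str:
    """Guess an audio container from the first bytes of a file (heuristic)."""
    if header[:4] == b"OggS":
        # An Ogg Opus stream carries the "OpusHead" marker in its first page.
        return "ogg-opus" if b"OpusHead" in header[:64] else "ogg"
    if header[:4] == b"RIFF" and header[8:12] == b"WAVE":
        return "wav"
    return "unknown"


# Synthetic first bytes of an Ogg page: the 27-byte page header, a one-entry
# segment table, then the start of the Opus identification packet.
sample = b"OggS" + bytes(22) + b"\x01\x13" + b"OpusHead" + bytes(11)
print(sniff_audio_format(sample))
```

Once the format is known, converting to WAV and transcribing are separate steps. In the story, those are the steps where the agent reached for FFmpeg and an API key it found in the environment.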

But this resourcefulness has a dark side. From a security standpoint, the agent performed unauthorized data exfiltration: it scanned environment variables, found a secret key, and sent a private file to the cloud without explicit permission. In a corporate environment, that is a security incident. Steinberger’s workflow prioritizes autonomy over isolation: the agent is given access to the “keys to the kingdom” in exchange for results.

This explains his preference for local CI over remote: why wait 10 minutes on a remote pipeline when the agent can run tests locally right now? When an agent discovers an issue in its own code, it can fix it immediately – without waiting for external feedback.

Steinberger has even adopted a new term for this, picked up from the agents themselves: “gate.” “The agent calls it a gate… Full gate – that’s linting, building, checking, running all tests. It’s like a wall before my code goes out.”
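A local gate of this kind can be sketched as a script that runs each check in order and stops at the first failure. The step names follow the quote; the commands are placeholders that only invoke the Python interpreter so the sketch runs anywhere. A real project would substitute its own lint, build, and test commands, which the source does not specify.

```python
import subprocess
import sys


def run_gate(steps: list[tuple[str, list[str]]]) -> bool:
    """Run each step in order; stop and report at the first failure."""
    for name, command in steps:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            print(f"gate failed at '{name}':\n{result.stdout}{result.stderr}")
            return False
        print(f"gate step '{name}' passed")
    print("full gate passed")
    return True


# Placeholder steps (assumption): each one just runs a trivial Python command.
demo_steps = [
    ("lint", [sys.executable, "-c", "print('lint ok')"]),
    ("build", [sys.executable, "-c", "print('build ok')"]),
    ("tests", [sys.executable, "-c", "print('tests ok')"]),
]
gate_ok = run_gate(demo_steps)
```

Because the function returns a plain boolean and prints the failing output, an agent can call it, read the failure, and retry without waiting on a remote pipeline.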

A new vocabulary is emerging to describe working with AI agents. “So many new words I use now… for example, ‘weaving in code’ – threading code into an existing structure. Sometimes you need to reshape the structure to make the new code fit. I’m gradually adopting their language,” Steinberger admits.

The “close the loop” principle applies far beyond software development: any automation becomes more reliable when the system can verify its own results and correct errors independently.

CLIs Beat MCPs: Why the Command Line Scales Better

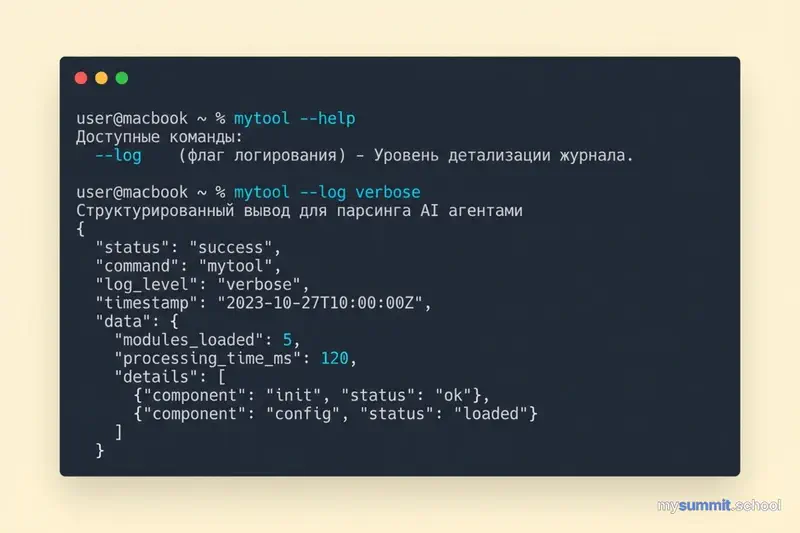

Steinberger has a clear position on integration architecture: “My premise – MCPs (Model Context Protocol) – are garbage. They don’t scale. People build all these weird search things around them. You know what scales? CLI. Agents know Unix.”

The logic is simple: your computer may have thousands of small programs. The agent just needs to know the name. It calls --help, loads the relevant information, and uses the tool. “We literally call the help menu, then they know how to use the program.”
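The discovery step is small enough to sketch directly: run the tool with `--help` and hand the text to the model. The helper name `load_help` is an assumption made for this example, and the Python interpreter stands in for "any small program" because it is guaranteed to be present wherever the sketch runs.

```python
import subprocess
import sys


def load_help(command: list[str], timeout: float = 10.0) -> str:
    """Return the --help text of a command-line tool."""
    result = subprocess.run(
        [*command, "--help"], capture_output=True, text=True, timeout=timeout
    )
    # Some tools print usage to stderr, so fall back to it when stdout is empty.
    return result.stdout or result.stderr


help_text = load_help([sys.executable])
print(help_text.splitlines()[0])
```

Nothing needs to be registered in advance. The help text is loaded only when the agent decides it needs that tool, which is the scaling argument Steinberger makes.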

Key insight: “Build for models, not for people.” If agents expect a --log flag, create --log. This is agent-driven development – design the way a model thinks, and everything works better. “This is a new kind of software,” says Steinberger.
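As a small illustration of building for models, the sketch below defines a command-line tool with a `--log` flag and a help menu an agent can read. The tool name `lights` and its arguments are hypothetical; only the `--log` flag comes from the text, and its behavior here (a simple on/off switch) is an assumption.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(
        prog="lights",  # hypothetical smart-home tool
        description="Switch a room's lights on or off.",
    )
    parser.add_argument("room", help="room name, for example 'kitchen'")
    parser.add_argument("state", choices=["on", "off"], help="desired light state")
    # The flag an agent might guess exists; providing it avoids a failed call.
    parser.add_argument("--log", action="store_true", help="print a log line for each action")
    return parser


args = build_parser().parse_args(["kitchen", "on", "--log"])
print(args.room, args.state, args.log)
```

The help strings matter as much as the flags, since the help menu is the documentation the agent actually reads.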

The price of this flexibility is security. The CLI approach gives the agent user-level rights (shell access), unlike MCP, which constrains actions to specific API calls. This demands strict safeguards (sandboxing, virtual machines), since an agent with terminal access can accidentally – or intentionally via prompt injection – execute a destructive command.
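One coarse safeguard can be sketched as an allowlist check applied before any agent-proposed command runs. This is only a first filter under stated assumptions: the allowlist contents and the `is_allowed` helper are hypothetical, and a check like this does not replace the sandboxing or virtual machines mentioned above, since an allowed program can still read sensitive files.

```python
import shlex

ALLOWED_PROGRAMS = {"ls", "cat", "grep", "git"}  # hypothetical allowlist
SHELL_METACHARACTERS = ";|&><`$\n"


def is_allowed(command_line: str) -> bool:
    """Accept only a single allowlisted program with no shell operators."""
    # Reject anything that could chain, redirect, or substitute commands.
    if any(ch in command_line for ch in SHELL_METACHARACTERS):
        return False
    try:
        tokens = shlex.split(command_line)
    except ValueError:  # for example, an unbalanced quote
        return False
    return bool(tokens) and tokens[0] in ALLOWED_PROGRAMS


for candidate in ["git status", "rm -rf /", "ls; rm -rf /"]:
    print(candidate, "->", is_allowed(candidate))
```

A command that passes should still be executed as an argument list rather than through a shell, so the check and the execution agree on what is being run.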

Before building OpenClaw, Steinberger had built dozens of CLI tools: for Google Places, for Sonos, for security cameras, for his smart home system. Each new CLI gave the agent more capabilities – and made working with it more interesting. All of that foundation already existed when the WhatsApp integration came together.

The Skills That Matter: Outcomes Over Algorithms

Peter Steinberger’s experience reveals striking patterns in which skills and approaches are truly critical when working with AI agents.

Outcome-Oriented Engineers vs. Algorithmic Puzzle Solvers

Steinberger observes a clear divide: engineers who love solving algorithmic challenges often struggle to adapt to an AI-agent workflow. Those who love shipping products and care about outcomes thrive.

In the same interview, he puts it more bluntly: “There are people who genuinely love coding complex problems, thinking about algorithms… Those are exactly the people who fight AI and often reject it – because that is precisely the work AI does for them.”

This prompts reflection: it may be worth distinguishing between a “good engineer” in traditional development and a “good engineer” in AI-assisted development. The skills that made you a star on LeetCode may not help when the core task is orchestrating autonomous agents and designing self-verifying systems.

For managers, this means rethinking the hiring profile. When building AI-assisted teams, you are looking not for algorithmic geniuses but for people who:

- Are outcome-oriented, not focused on implementation perfection

- Are comfortable with imperfect code that gets the job done

- Iterate quickly and change direction easily

- Think architecturally: see the system as a whole rather than getting lost in details

Managing Perfectionism: A Lesson from Management Experience

Leading a team of 70+ people at PSPDFKit taught Steinberger a critically important skill: letting go of perfectionism. When you manage a large team, you accept that code will not always match your precise preferences. Every engineer has their own style, their own approaches.

Paradoxically, this management skill turns out to be critical for working with AI agents. If you cannot accept that AI will write code in a way that is not quite how you would have written it, you will be stuck in endless correction cycles instead of shipping features.

This explains why experienced managers may be more productive with AI than junior developers. Not because they program better, but because they have learned to release control over details and focus on outcomes.

Non-Technical Users Can Do This Too: A Design Agency Case Study

At one of the Agents Anonymous meetups, Steinberger met someone from a design agency who had never programmed. “He discovered OpenClaw in early December and started using it,” Steinberger recounts. The result? “They now have 25 web services – internal tools for everything they need. He has no idea how the code works. He just uses Telegram, talks to his agent, and the agent builds.”

This is a fundamental shift: instead of subscribing to random startups that build a generic set of features, people now get hyper-personalized software that solves exactly their problem. And the tool itself is free and open source; the main running cost is model usage. “Non-technical people do this because it is natural. You just describe the problem, and this thing builds what you need.”

Code Is Becoming a Commodity: The New Economics of Software

Steinberger articulates a radical view on the value of code: “Code doesn’t cost as much anymore. You can just delete it and rebuild in a month. What matters far more is the idea, the attention, and possibly the brand – that is what holds real value.”

This explains his relaxed attitude toward the MIT license and the possibility of forks: “Let them copy. Let’s make the open source so good that there isn’t much room for people who want to convert it into their own product.”

And a paradoxical admission: “I write better code now that I don’t write code myself. And I used to write really good code.” Back at PSPDFKit, he was obsessive about every whitespace character, every line break, naming conventions. “In retrospect – what the hell? Why was I doing that? The customer doesn’t see the internals.”

What does this mean for workflow? If code can be rewritten in a month, the focus shifts to what cannot be rewritten: architectural decisions, domain understanding, relationships with users. Steinberger’s workflow is a consequence of this shift: less time polishing code, more time on system design.

Intentional Trade-offs: When “Inefficiency” Is Strategy

One of the most interesting aspects of Steinberger’s workflow is its deliberate trade-offs, which look like mistakes from the perspective of traditional development.

Token Consumption as an Investment in Exploration

One OpenClaw user burned 8 million tokens in a single session – roughly $200 in API costs for a configuration task. That sounds like a disaster, right?

Here is the interesting part: Steinberger under-prompts intentionally, in order to discover unexpected solutions. When you give an agent room to explore, it sometimes finds approaches you would never have considered. This makes sense for an experimental project searching for product-market fit.

The paradox: what looks like inefficiency can be an exploration strategy for early-stage projects. For production systems with known requirements, it is wasteful. For experiments where you do not know the optimal solution, it is a reasonable investment.
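The figures quoted above imply a blended rate that makes the trade-off easy to check against a budget. The arithmetic below uses only the two numbers from the example (8 million tokens, roughly $200). The monthly budget is a made-up value for illustration, and the resulting rate is an implied average, not any provider's published price.

```python
tokens_used = 8_000_000   # from the session described above
session_cost_usd = 200.0  # approximate cost quoted for that session

# Implied blended rate across the whole session.
cost_per_million = session_cost_usd / (tokens_used / 1_000_000)
print(f"${cost_per_million:.2f} per million tokens")  # prints "$25.00 per million tokens"

# Hypothetical monthly exploration budget (assumption, not from the source).
monthly_budget_usd = 1_000.0
sessions_affordable = int(monthly_budget_usd // session_cost_usd)
print(f"{sessions_affordable} sessions of this size fit in the budget")  # prints "5 sessions ..."
```

Seen this way, the question is not whether $200 is a lot for a configuration task, but how many exploratory sessions of that size a project can afford before the requirements are known.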

When Steinberger’s Workflow Applies

This workflow is not universal – it works within a specific context.

Applicable when:

- You are exploring a new product or service category

- Speed of iteration matters more than stability

- You have deep domain expertise (architecture, system design)

- The team is small and everyone shares the architectural vision

Not applicable when:

- A production system has SLAs and financial obligations

- Compliance requirements and sensitive data are involved

- The API budget is tightly constrained

- The team is large and distributed

One experienced architect working with AI agents can be more productive than a corporate team – in the right context. In the wrong context, that same productivity turns into chaos with vulnerabilities and technical debt.

A Forecast for Companies: 30% of the Team

Steinberger offers a blunt forecast for the corporate world: “It will be very hard for companies to implement AI effectively, because it requires completely redefining how the company operates… You can probably shrink the company to 30% of the people.”

This is a sobering prospect, but it reflects a real dynamic: “This new world requires people with product vision who can do everything. And you need far fewer of those people. But they must be people with very high agency and competence.”

Conclusion: Applicable Principles, Not a Specific Tool

6,600 commits in January is a real result, demonstrating what is possible when an experienced architect combines deep system design with AI agents.

Steinberger’s workflow rests on five principles:

- Outcome skills matter more than algorithmic mastery. Management experience and the ability to let go of perfectionism prove more critical than the ability to solve LeetCode problems.

- Architecture matters more than code. Prompt requests instead of pull requests. Direct energy toward system design, not loop formatting.

- Close the loop. Agents must verify their own work. A local “gate” instead of waiting on remote CI.

- Plan before you execute. Thoroughly challenging the plan before launching an agent – not “magic one-line prompting.”

- Context defines approach. Exploration requires freedom and tokens. Production requires strict constraints and optimization.

This is not “software engineering is dead” – it is an evolution of the role. The level of abstraction changes: from writing every line to designing systems that AI can extend and maintain.

Want to learn about the critical security issues and real total cost of ownership of OpenClaw? Read Part 1: a critical analysis of OpenClaw – debunking the Mac Mini myth, documented vulnerabilities, and when to choose proven alternatives.


Sources

- Introducing OpenClaw – official blog – rebranding announcement, naming philosophy, latest updates

- OpenClaw GitHub Repository – official repository with documentation and source code

- Peter Steinberger background and PSPDFKit exit – What Is Skills – founder biography and experience context

- Peter Steinberger interview – video interview – detailed workflow insights, “CLIs over MCPs” philosophy, Codex vs. Claude Code comparison, the Marrakesh voice message story

- The Pragmatic Engineer Podcast: Peter Steinberger – Gergely Orosz podcast – in-depth interview on “prompt requests vs pull requests,” new development vocabulary (“weaving,” “gate”), corporate forecast (30% of team), “close the loop” philosophy

- Everything you need to know about clawdbot/Moltbot – TechCrunch – comprehensive project overview

- User experience report on practical workflow – Reddit – real usage experience, token consumption

- PSPDFKit creator viral Mac Mini discussion – 36Kr – creation context and development philosophy

- clawdbot hype explained – Thomas Eccel – productivity and workflow analysis