GLM-5 Review: Chat.z.ai Pricing, Benchmarks & Agent Mode (2026)

On February 6, 2026, an anonymous model called “Pony Alpha” appeared on OpenRouter – free, with zero details about its creators. The AI community immediately set about identifying it. Its coding abilities came remarkably close to Claude Opus 4.5. When asked “who are you?”, the model responded: “I am GLM.” But when prompted to write a web page describing itself – it wrote: “I am Claude, created by Anthropic.”

This was reproducible one hundred percent of the time. And that single fact frames everything you need to know about GLM-5 before we get to benchmarks and pricing.

Try It Yourself: GLM-5 vs GigaChat vs Claude

Before we dig into the history, the benchmarks and the pricing – run a prompt right here and compare three models on the same task: GLM-5 (Z.ai), GigaChat-2-Max (Sber) and Claude Sonnet 4.6 (Anthropic). These are three fundamentally different bets: the cheap Chinese challenger, the Russian “home” model with dense local-context knowledge, and the Western premium flagship.

Example 1. A tactful letter to a long-time supplier

This task probes language control, sense of tone and business etiquette all at once. Claude usually wins on the balance of empathy and clarity, GigaChat tends to be stronger on local stylistic conventions and formal register, and GLM-5 is interesting as an indicator of how far a cheap Chinese model can stretch into polished business writing outside its native language. Further down the article there’s a second prompt on an analytical task, where the strengths shuffle differently.

What Is GLM-5 and Who’s Behind It

Zhipu AI – a spinoff from Tsinghua University, founded in 2019 – rebranded to Z.ai by 2025 and went public on the Hong Kong Stock Exchange in January 2026. The IPO was impressive: within three days of the official GLM-5 announcement, shares climbed 60%.

GLM-5 launched on February 11, 2026, and immediately staked its claim as the strongest open model in the world. Three things matter for a manager:

- The model is free and open – code is available under an MIT license, any company can download and run it on their own servers

- A single request can “read” up to ~400 pages of text – useful for working with long documents, reports, contracts

- Trained entirely on Chinese-made Huawei chips – without a single NVIDIA component

That last point isn’t just a technical detail. Under US export restrictions, it’s a political statement: China can build competitive AI models without access to Western chips. For business, it means the provider doesn’t depend on Western supply chains – unlike OpenAI or Anthropic.

The Pony Alpha Story: A Detective Case Without a Resolution

“Pony” – a nod to the Year of the Horse in the Chinese calendar. On February 11, Zhipu officially confirmed: Pony Alpha is GLM-5. The company’s shares jumped 60% in three days.

As for what actually happened with the identity confusion – there’s been no official explanation. Zhipu never commented.

And it’s not an isolated case. In December 2025, MIT researchers documented that GLM-series models identified themselves as Claude roughly 50% of the time when queried through non-standard methods. DeepSeek V3 had a similar quirk – under certain prompts, it called itself ChatGPT or GPT-4. OpenAI directly accused DeepSeek of distilling from its models and updated its terms of service. Anthropic, Mistral, and xAI followed with similar anti-distillation clauses.

Distillation – training a smaller model on the outputs of a larger one – is, by all appearances, an open secret of the industry. Confirming its use in GLM-5 is impossible: we have no technical audit. Denying it is equally impossible: the behavioral patterns are too specific.

This raises a question worth sitting with: if the model “pretended” to be Claude under indirect queries – what exactly was it absorbing during training? And how much should a manager who needs a working tool actually care?

What the Benchmarks Show

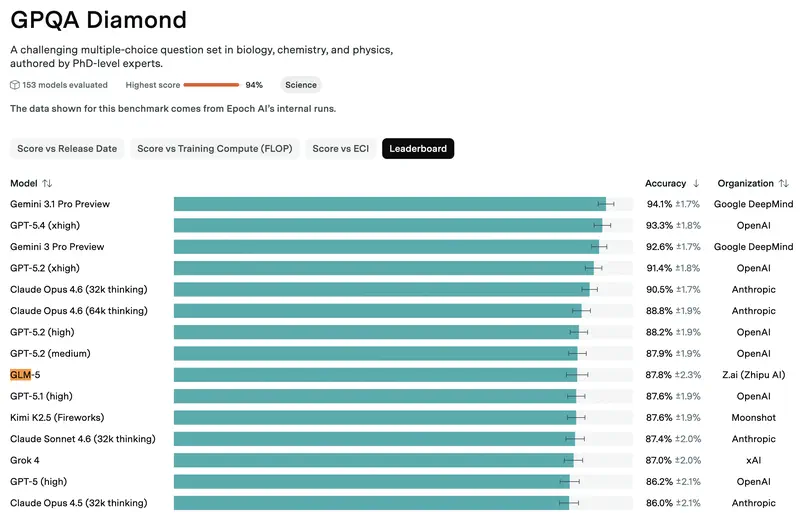

On standard industry benchmarks, GLM-5 competes with the best closed models – and for a free, open model, that’s genuinely noteworthy. Here’s what matters for a manager:

Coding – solves 77.8% of real-world tasks from GitHub. For comparison: Claude Opus 4.5 – 80.9%, GPT-5.2 – 75.4%. The gap with the leaders is minimal.

Business simulation (Vending Bench 2 – a test where the model “runs a business” for a year) – GLM-5 finished with a balance of $4,432, Claude Opus 4.5 – $4,967. The model makes strategic decisions at roughly the same level as the best Western competitors.

Web search – first place among all models tested, including GPT-5.2 and Claude.

Hallucinations – the best result in the industry. GLM-5 is more likely to say “I don’t know” than to fabricate an answer. For work involving facts and figures, this is critically important.

As always, benchmarks and real-world performance are different things. But the direction is clear: GLM-5 plays in the same league as ChatGPT and Claude.

How GLM-5 Performed in Our Testing

As part of our comparison, we tested GLM-5 on real managerial tasks across 8 categories.

Overall result: upper-middle tier – a solid performer, though not among the elite. The devil is in the details.

Where GLM-5 surprised us:

- Team management – one of the strongest results among all models. GLM-5 performed exceptionally well at employee evaluation, designing motivation systems, delivering feedback, and conflict resolution. Our testing showed these strong results held across English-language tasks as well

- Training and development – above average

- Business communication – mid-range

Where it fell short:

- Cultural and regional nuance – notably weaker. The model scored lower on tasks requiring Western business culture context – idiomatic email tone, country-specific compliance references, local market conventions

- Information search and analysis – below average

- Problem-solving – among the weakest results

The takeaway for a manager is pragmatic: GLM-5 is one of the best tools for people-related tasks. If you’re writing a performance review, designing a KPI system, or preparing for a difficult conversation with a team member – this model deserves your attention. If you need culturally nuanced business writing or up-to-date information retrieval – the results will be weaker.

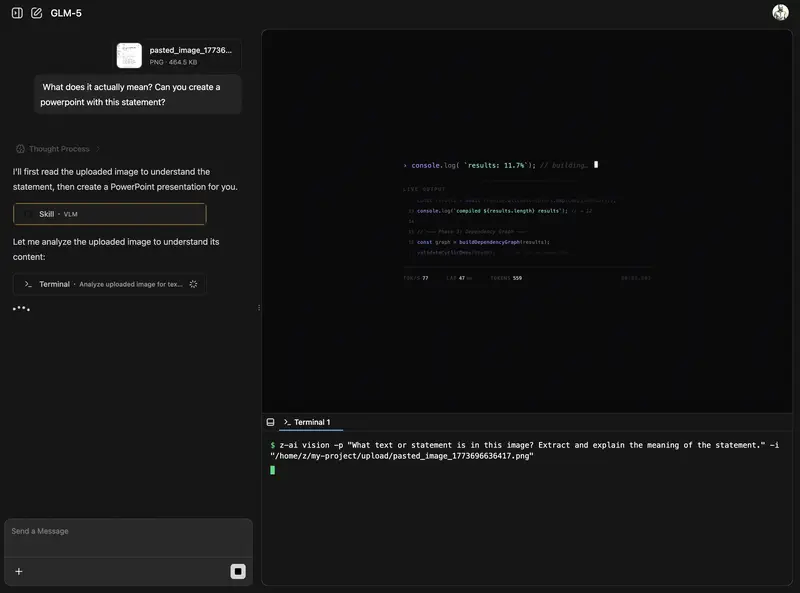

How to Use GLM-5 Right Now

chat.z.ai – the official web interface, accessible globally. Sign in with a Google account. The interface is in English and Chinese; the model understands and responds in many languages, though English and Chinese produce the strongest results.

Two modes of operation:

Chat Mode – the familiar dialogue format. Suitable for most tasks: writing text, analyzing documents, answering questions.

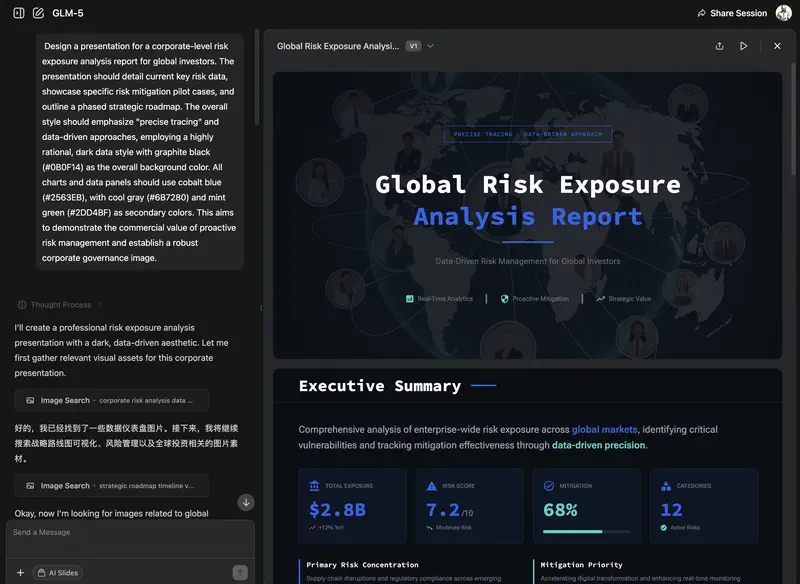

Agent Mode – where GLM-5 truly comes into its own. The model can use tools: generate files in .docx, .pdf, .xlsx formats, access web search, execute multi-step tasks. If you’re asking it to prepare a report with tables – this is the mode you want.

A practical note on language: English is GLM-5’s second strongest language after Chinese, and it performs well for most business tasks. That said, native English speakers may notice occasional awkward phrasing compared to Claude or ChatGPT – particularly in creative writing and nuanced argumentation. For analytical and structured tasks, the difference is minimal. This is the same dynamic as with Qwen: Chinese models perform best on the languages they were trained on most heavily.

The week after GLM-5’s launch was turbulent: traffic grew 10x, the service was unstable for several days, and Zhipu issued a public apology. By mid-March the situation had stabilized, but it’s worth keeping in mind: this is a young service with rapidly growing demand.

Example 2. A pre-mortem before a product launch

The second task is analytical. This is traditionally Claude’s home turf, and for GLM-5 it’s an honest test: can a cheap Chinese model deliver structured thinking at flagship level? For GigaChat, it’s a chance to show how it handles a local market context.

Watch not just the content but the structure of each response: the ability to hold all the requirements of the prompt in mind at once (6–8 causes, categories, signals, actions) is precisely what separates a working tool from a good-looking demo.

Benchmark Results

We tested GLM-5 in our independent benchmark for managers, covering planning, analysis, team management, and other real-world business tasks. The results paint a nuanced picture.

GLM-5 lands in the upper-middle tier overall – competitive, but not elite. Its strongest showing is in planning tasks, where it performs near the top, and in analysis and decision-making, where it holds its own against mid-range paid alternatives. Team management results are decent as well.

The weak spots are clear: learning and development content – here GLM-5 falls significantly below the leaders – and regional awareness, where it struggles with non-Chinese business contexts.

For international users, GLM-5 is worth considering as a strong free alternative to mid-tier paid models. It competes comfortably with Gemini 2.5 Pro and DeepSeek V3.2 on analytical and planning tasks. However, it falls noticeably below elite models like ChatGPT (GPT-5.4), Claude Sonnet 4.5, and Kimi K2.5.

If you need a model for generating training materials or learning content, Claude or ChatGPT remain the stronger choices. But for analytical work on a budget – GLM-5 is a serious contender among the best Chinese open models, alongside DeepSeek and Qwen.

Limitations and Risks

Chinese censorship works predictably: politically sensitive topics, historical criticism of the state, certain events – all blocked. For a manager, this rarely becomes a problem in practice, but it’s worth knowing.

Occasional Chinese-language artifacts – while English performance is solid overall, the model occasionally shows Chinese-language patterns in output formatting: stray Chinese punctuation marks, formatting conventions that feel unfamiliar. Our testing confirmed these are infrequent but noticeable.

Response speed in deep analysis mode is noticeably slower than Claude and GPT – roughly 30–40%. Not critical for one-off tasks, but noticeable during intensive work.

The distillation question remains open. This doesn’t mean the model is technically unreliable – it works. But for organizations that use Claude and care about the ethics of AI usage, this fact is worth considering.

Self-hosting – technically possible (the code is open), but requires server hardware costing tens of thousands of dollars. Unlike the more compact Qwen models, GLM-5 isn’t something your IT department can spin up casually.

No mobile app – web only.

Pricing

| Option | Cost | For Whom |

|---|---|---|

| chat.z.ai | Free (with limits) | Try it with no commitment |

| API via OpenRouter | ~$0.15 for a 100-page report analysis | Integration into workflows |

For comparison: the same analysis via Claude Opus 4.5 would cost roughly $3, via GPT-5.2 – about $1.50. GLM-5 is 20 times cheaper with comparable capabilities on many tasks.

That said, among Chinese open models GLM-5 is the most expensive. DeepSeek and Qwen cost 3–5x less. What are you paying for? The best result in team management and web search – if those are your priorities, the premium is justified.

One caveat: after the GLM-5 launch, Zhipu raised prices on the Pro plan by roughly 30%, which drew user complaints.

Is It Worth Trying?

GLM-5 is a model with honest strengths and honest weaknesses, wrapped in a story that still hasn’t gotten a definitive answer.

The impressive team management result – among the strongest of all models we tested – is real and reproducible. If you regularly work on HR and people management tasks – performance reviews, motivation system design, feedback, conflict resolution – GLM-5 is worth trying. The key question for a manager who already uses ChatGPT or Claude is whether GLM-5 earns a spot as a free complement for specific tasks. On team management, the answer is a clear yes.

If you need a model for culturally nuanced business communication, current information retrieval, or tasks with strong regional specificity – GLM-5 lags behind competitors. For those purposes, Claude or DeepSeek will serve you better.

The Pony Alpha story and the Claude identity confusion – not a reason to dismiss the tool, but a reason to maintain analytical distance. The industry has long operated in a gray zone where the line between “inspiration” and “distillation” is blurred by design. This isn’t an exception for GLM-5 – it’s the general picture, and it’s worth keeping honestly in mind.

Access couldn’t be simpler: chat.z.ai is available globally, sign in with Google, and a free tier exists. It’s worth spending an hour testing – and forming your own opinion.

We break down GLM-5 and other AI tools in practice

9 diagnostic lessons: try GLM-5 and other models on real tasks – and discover what mistakes most managers make. No registration required.

Continue learning

Open the textbook and pick up where you left off

Stanislav Belyaev

Engineering Leader at Microsoft18 years leading engineering teams. Founder of mysummit.school. 700+ graduates at Yandex Practicum and Stratoplan.