P5.express and Agentic AI: Where It Helps, Where It Breaks Things

In the PMI world, portfolio management is typically imagined as something monumental: steering committees, Tableau dashboards, hundreds of Jira fields, weekly status meetings with a deck of slides. P5.express offers a different approach. Three cycles, five documents, two roles. The entire system fits on a single page.

This is exactly the kind of system where agentic AI makes sense: minimalist architecture that’s easy to understand, clearly defined roles, structured data. But “makes sense” doesn’t mean “everywhere.” Some parts of P5.express stop working when automated – not because the AI is bad, but because those parts derive their value from the human process itself.

Below is a cycle-by-cycle breakdown. What’s worth delegating to an agent, what’s better left to people, and which model fits these tasks best.

What Is P5.express

P5.express is a portfolio management methodology developed by OMIMO. It sits one level above familiar frameworks like Scrum or PRINCE2 and answers a different question – not “how to run projects” but “which projects to run at all.”

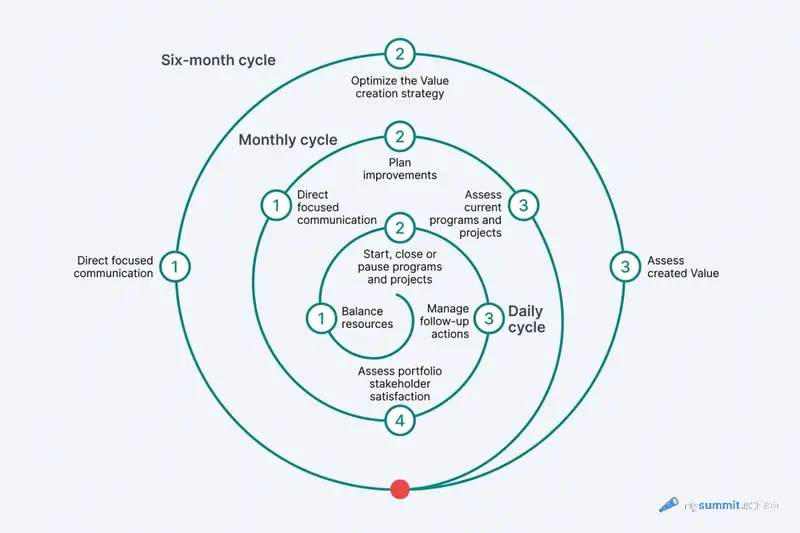

The structure consists of three nested cycles.

X – every six months (strategic): evaluate generated value (X1), optimize the value generation strategy (X2), conduct focused communication (X3). The key event is a one-day X2 workshop where the portfolio board reprioritizes.

Y – monthly (health and improvements): assess stakeholder satisfaction (Y1), evaluate running programs and projects (Y2), plan improvements (Y3), conduct focused communication (Y4).

Z – daily (execution): manage follow-up items (Z1), start/stop/pause programs and projects (Z2), balance resources (Z3).

Five documents: Portfolio Description, Value Generation Matrix, Global Follow-Up Register, Global Health Register, and Business Cases.

The most important one is the Value Generation Matrix. It’s a table of all programs and projects with columns: name, sponsor, status, progress, investment, benefits, Value. Value is calculated as benefits / investment. Rows are ordered: completed – running – pending – cancelled – rejected. Pending items are balanced within the “balancing horizon” by value categories.
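To make the structure concrete, here is a minimal sketch of the matrix as data. The class name, field types, and sample rows are illustrative assumptions, not part of P5.express; only the columns, the Value formula, and the status order come from the description above.

```python
from dataclasses import dataclass

# Row order described in the text: completed, running, pending, cancelled, rejected.
STATUS_ORDER = ["completed", "running", "pending", "cancelled", "rejected"]


@dataclass
class MatrixRow:
    name: str
    sponsor: str
    status: str
    progress: float    # 0.0 to 1.0 (assumed scale)
    investment: float
    benefits: float

    @property
    def value(self) -> float:
        """Value = benefits / investment."""
        if self.investment <= 0:
            raise ValueError(f"{self.name}: investment must be positive")
        return self.benefits / self.investment


def order_rows(rows):
    """Group rows by status in the order above; input order is kept within a status."""
    return sorted(rows, key=lambda r: STATUS_ORDER.index(r.status))


# Invented sample rows, for illustration only.
rows = [
    MatrixRow("Project B", "Sponsor 2", "pending", 0.0, 200.0, 300.0),
    MatrixRow("Project A", "Sponsor 1", "running", 0.4, 100.0, 250.0),
    MatrixRow("Project C", "Sponsor 3", "completed", 1.0, 80.0, 120.0),
]

for row in order_rows(rows):
    print(f"{row.name:10} {row.status:10} Value={row.value:.2f}")
```

The sketch deliberately groups rows by status only and does not rank them by Value within a group: ordering inside the balancing horizon is a board decision, not a sort key.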

Two roles: the Portfolio Board (department heads, collegial decisions) and the Portfolio Manager (facilitator, analyst, with no direct role in any project – otherwise it’s a conflict of interest).

That’s the entire system. The rest is application.

Where the Agent Genuinely Helps

Y2 (Monthly Project Evaluation) – This Is Agent Work

The most expensive rhythm in P5.express is Y2 – the monthly evaluation of running programs and projects. The portfolio manager must collect data on all active programs and projects every month: progress, completion forecast, recalculated expected benefits. Then update the Value Generation Matrix.

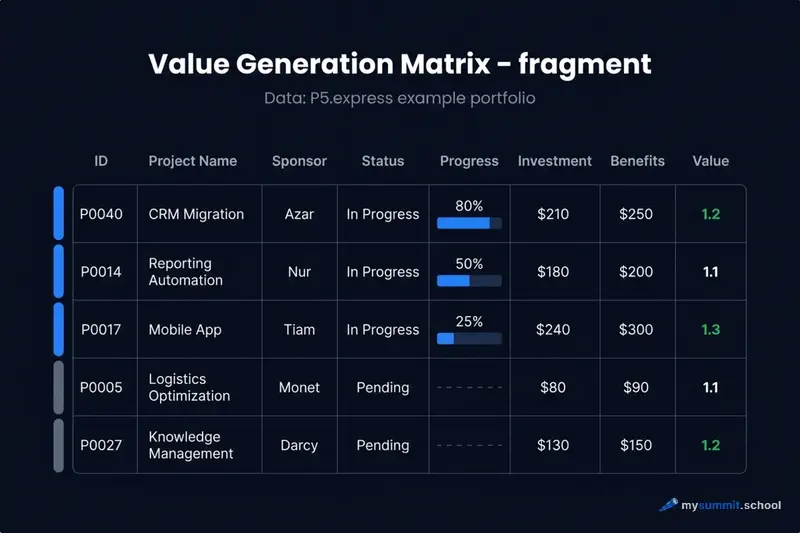

Here’s what a fragment of a real matrix looks like from the P5.express example – active projects only:

In practice, updating this table means: open Jira (or Monday, or Smartsheet, or three Excel files from three different project managers), pull the current progress for P0040, P0014, P0017, compare with last month – has the completion forecast slipped, have investment or benefit estimates changed – and recalculate Value. One to two hours of manual work, repeated every month.

An agent does this faster. The instruction looks roughly like this:

The critical condition: the agent proposes changes – a human approves them. The matrix doesn’t update automatically after this. The portfolio manager reviews the diff and clicks “accept” or “adjust.” A draft for review, not an autonomous update – exactly the format that reduces losses from hallucinations. Separately, always verify the arithmetic: the agent will confidently output Value = 1.2 even when the underlying investment and benefit figures contradict each other.
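Both checks are easy to do outside the model. The following is a minimal sketch, assuming each matrix row is a plain dictionary; the field names, the tolerance, and the sample figures are invented for illustration and are not taken from the P5.express example.

```python
def check_value(row, tolerance=0.01):
    """Recompute Value from benefits and investment and compare with the reported figure."""
    expected = row["benefits"] / row["investment"]
    ok = abs(expected - row["value"]) <= tolerance
    return ok, round(expected, 2)


def diff_rows(previous, proposed):
    """Return {field: (old, new)} for every field that the proposal changes."""
    return {
        field: (previous.get(field), proposed[field])
        for field in proposed
        if previous.get(field) != proposed[field]
    }


# Hypothetical rows: last month's approved row and the agent's proposal.
previous = {"id": "EXAMPLE-1", "progress": 40, "investment": 100.0, "benefits": 150.0, "value": 1.5}
proposed = {"id": "EXAMPLE-1", "progress": 55, "investment": 120.0, "benefits": 150.0, "value": 1.5}

changes = diff_rows(previous, proposed)
ok, expected = check_value(proposed)

print("Proposed changes:", changes)
if not ok:
    print(f"Value mismatch: reported {proposed['value']}, recomputed {expected}")
```

In this example the agent raised the investment but left Value untouched, which is exactly the kind of inconsistency the reviewer should see before clicking “accept.”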

Z1 (Daily Follow-Up Monitoring) – Pattern Matching Across N Registers

The Follow-Up Register in P5.express isn’t just about risks. It’s a list of risks, issues, change requests, improvement plans, and lessons learned. As in a RAID log (Risks, Assumptions, Issues, Dependencies) or RICs, risk is just one category. The P5.express manual explicitly states: the portfolio manager must continuously monitor local registers to find patterns that need attention at the portfolio level.

“Continuously monitoring N registers across all categories” is exactly the kind of work that agentic mode was built for. Once a week, the agent scans Follow-Up Registers from all active projects – risks, issues, change requests, lessons – clusters similar items by topic, and proposes candidates for the Global Follow-Up Register along with a suggested owner. The portfolio manager sees a ready-made list and decides what to escalate.

The shape of the task changes: instead of “I scan 8 registers manually” – “I review the agent’s proposal and make a decision.” The second version scales; the first doesn’t. In terms of the research on work intensification, the agent here doesn’t speed up existing work but redistributes cognitive load: data scanning goes to the machine, decision-making stays with the human.
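For intuition about what “finding patterns across registers” means, here is a deliberately crude sketch. It uses plain keyword overlap as a stand-in for the semantic clustering an LLM agent would do, and the register items, field names, and stopword list are all invented for illustration.

```python
import re
from collections import defaultdict

STOPWORDS = {"the", "a", "an", "of", "for", "to", "in", "on", "is", "and", "from", "with", "by", "at"}


def keywords(text):
    """Lowercase word tokens longer than three letters, minus stopwords."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if len(w) > 3 and w not in STOPWORDS}


def recurring_themes(items, min_projects=2):
    """Map each keyword to the projects mentioning it; keep keywords seen in several projects."""
    projects_by_keyword = defaultdict(set)
    for item in items:
        for word in keywords(item["text"]):
            projects_by_keyword[word].add(item["project"])
    return {
        word: sorted(projects)
        for word, projects in projects_by_keyword.items()
        if len(projects) >= min_projects
    }


# Hypothetical items pulled from three local Follow-Up Registers.
items = [
    {"project": "Alpha", "category": "risk", "text": "Vendor delivery may slip"},
    {"project": "Beta", "category": "issue", "text": "Vendor invoices disputed"},
    {"project": "Gamma", "category": "change request", "text": "Extend testing phase"},
]

themes = recurring_themes(items)
print(themes)
```

A theme that shows up in two or more projects is only a candidate for the Global Follow-Up Register. Whether it gets escalated is still the portfolio manager’s call.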

X3/Y4 (Stakeholder Communication) – Draft Communications

Focused communication is the part of P5.express that, in practice, gets done least often. The reason is mundane: writing monthly stakeholder updates that always look the same is boring.

The agent solves the motivation problem. After the Value Generation Matrix is updated, it drafts the communication: what changed this month, what stands out, which projects moved between statuses. The draft takes 5 minutes to edit – versus 40 minutes to write from scratch.

The output is predictably similar every month – which is exactly what you need from a standard update.

A separate task is ensuring that cycles actually happen. Minimalist systems die from skipped rituals: miss one matrix update and it’s stale, miss two and it stops being the source of truth. The simplest watchdog is a weekly calendar task or a cron script that checks the modification dates of portfolio files and sends a reminder if a document hasn’t been updated within the expected cycle.
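A minimal sketch of such a watchdog, using only the Python standard library. The folder name, file names, and allowed ages are assumptions to adapt to your own layout, and the reminder is just printed; wiring it to email or chat is left out.

```python
import time
from pathlib import Path

DAY = 24 * 60 * 60  # seconds

# Hypothetical file names and maximum ages in days; adjust to your own setup.
EXPECTED_MAX_AGE_DAYS = {
    "value_generation_matrix.xlsx": 31,
    "global_follow_up_register.xlsx": 7,
    "global_health_register.xlsx": 31,
}


def stale_documents(folder, max_age_days=EXPECTED_MAX_AGE_DAYS, now=None):
    """Return (file name, age in days) for overdue files; age is None if the file is missing."""
    now = time.time() if now is None else now
    overdue = []
    for name, limit in max_age_days.items():
        path = Path(folder) / name
        if not path.exists():
            overdue.append((name, None))
            continue
        age_days = (now - path.stat().st_mtime) / DAY
        if age_days > limit:
            overdue.append((name, round(age_days)))
    return overdue


if __name__ == "__main__":
    for name, age in stale_documents("portfolio"):
        status = "is missing" if age is None else f"was last updated {age} days ago"
        print(f"Reminder: {name} {status}")
```

Scheduled weekly, this catches a skipped cycle within days. Modification time is only a proxy: a file that was opened and saved without real changes will still look fresh.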

The Gray Zone: Helps, but with Caveats

X2 (Semi-Annual Workshop) – Workshop Preparation

X2 is the semi-annual portfolio reassessment workshop. It’s the key event in the system, and it cannot be automated. But the preparation for it can be.

A week before the workshop, the agent can assemble: an opportunity cost simulation, a gap analysis by value categories (which categories are underrepresented in the current portfolio), a benefits realization delta (what was forecasted vs. what was delivered for completed projects), and a clustering of bottom-up ideas.
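Two of these items are simple arithmetic once the data is in one place. The sketch below assumes projects are plain dictionaries; the category names, field names, and figures are invented, and measuring “underrepresented” by share of investment is one possible reading, not something the P5.express manual prescribes.

```python
from collections import defaultdict


def investment_share_by_category(projects, categories):
    """Share of total investment per value category; categories with no projects get 0.0."""
    totals = defaultdict(float)
    for p in projects:
        totals[p["category"]] += p["investment"]
    grand_total = sum(totals.values())
    return {c: round(totals[c] / grand_total, 2) if grand_total else 0.0 for c in categories}


def realization_delta(completed):
    """For each completed project: delivered minus forecast benefits, plus the delivered/forecast ratio."""
    return [
        {
            "name": p["name"],
            "delta": p["delivered"] - p["forecast"],
            "ratio": round(p["delivered"] / p["forecast"], 2),
        }
        for p in completed
    ]


# Hypothetical data, for illustration only.
categories = ["growth", "efficiency", "compliance"]
running = [
    {"name": "Project A", "category": "growth", "investment": 300.0},
    {"name": "Project B", "category": "efficiency", "investment": 100.0},
]
completed = [{"name": "Project C", "forecast": 200.0, "delivered": 150.0}]

shares = investment_share_by_category(running, categories)
deltas = realization_delta(completed)
print(shares)
print(deltas)
```

Here “compliance” receives no investment at all, and Project C delivered three quarters of its forecast benefits. Both are prompts for discussion at the workshop, not conclusions.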

The opportunity cost simulation is the most valuable of these tasks and also the most tedious to do manually. Here’s what the prompt looks like:

The board arrives at the workshop with pre-built scenarios instead of modeling them on the fly. The quality of the conversation shifts: they discuss trade-offs rather than doing mental arithmetic.
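What a pre-built scenario can look like in its simplest form: a sketch that compares two funding choices under one budget and shows what each choice gives up. The project names, figures, budget, and function name are all hypothetical, and a real simulation would also account for timing, dependencies, and resource limits.

```python
def scenario_summary(pending, funded_names, budget):
    """Summarize one funding scenario: what is bought, and which benefits are forgone."""
    funded = [p for p in pending if p["name"] in funded_names]
    forgone = [p for p in pending if p["name"] not in funded_names]
    investment = sum(p["investment"] for p in funded)
    if investment > budget:
        raise ValueError(f"Scenario costs {investment}, budget is {budget}")
    return {
        "investment": investment,
        "expected_benefits": sum(p["benefits"] for p in funded),
        "forgone_benefits": sum(p["benefits"] for p in forgone),
        "unspent_budget": budget - investment,
    }


# Hypothetical pending projects and budget.
pending = [
    {"name": "A", "investment": 100.0, "benefits": 180.0},
    {"name": "B", "investment": 60.0, "benefits": 90.0},
    {"name": "C", "investment": 50.0, "benefits": 100.0},
]
budget = 160.0

scenario_1 = scenario_summary(pending, {"A", "B"}, budget)
scenario_2 = scenario_summary(pending, {"A", "C"}, budget)
print("A + B:", scenario_1)
print("A + C:", scenario_2)
```

The output is a side-by-side of trade-offs for the board to argue about. It does not pick a winner, and it shouldn’t.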

The caveat: the agent prepares the analytical brief, not the agenda. Priority decisions are collegial and political in the best sense of the word. They cannot be automated, and they shouldn’t be.

Red Team for Business Cases

P5.express requires a business case before every new project enters the portfolio: rationale / alternatives / expected benefits / measurement method / investment estimate / key risks.

The agent can play devil’s advocate. A sponsor describes a project in a few sentences – the agent scaffolds a full P5.express business case with all mandatory fields, where every number is tagged [needs verification], and immediately runs a pre-mortem on the same case.
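The tagging rule is simple enough to sketch in code. The field names below follow the business case sections listed above; the tag-anything-with-a-digit rule, the TODO wording, and the sample draft are assumptions for illustration.

```python
FIELDS = [
    "rationale",
    "alternatives",
    "expected_benefits",
    "measurement_method",
    "investment_estimate",
    "key_risks",
]
NUMERIC_FIELDS = {"expected_benefits", "investment_estimate"}
TAG = "[needs verification]"


def scaffold(draft):
    """Build a full business case skeleton; tag every figure, mark empty fields as TODO."""
    case = {}
    for field in FIELDS:
        text = draft.get(field, "").strip()
        if not text:
            case[field] = "TODO: sponsor to provide"
        elif field in NUMERIC_FIELDS or any(ch.isdigit() for ch in text):
            case[field] = f"{text} {TAG}"
        else:
            case[field] = text
    return case


def unverified(case):
    """List the fields that still carry the verification tag."""
    return [field for field, text in case.items() if TAG in text]


# Hypothetical sponsor input.
draft = {
    "rationale": "Reduce manual invoicing effort",
    "expected_benefits": "120k per year",
    "investment_estimate": "80k",
    "key_risks": "Integration with ERP",
}

case = scaffold(draft)
for field, text in case.items():
    print(f"{field}: {text}")
print("Still to verify:", unverified(case))
```

A case should not enter the matrix while `unverified(case)` returns anything: the tag comes off only when a human has checked the figure.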

The agent doesn’t fill in numbers – it helps the sponsor verify them. In practice, stress-testing a business case is a chain of several techniques: pre-mortem surfaces failure scenarios, a WBS audit finds missed tasks and dependencies, a stakeholder simulator prepares you for tough questions during the defense. The full chain – from failure scenarios to rehearsing the defense before the CFO – is broken down step by step in the Project Management specialization on the platform.

The key danger worth stating explicitly: if the agent proposes numbers and the sponsor accepts them without verification, hallucinated benefits end up in the matrix. The ranking silently drifts. The agent as red team – valuable. The agent as a source of plan figures – dangerous.

Related – Z2/Z3 (start/stop projects and resource balancing): the agent can steelman arguments against a specific sponsor’s request, referencing thresholds from the Portfolio Description. This depersonalizes politically sensitive conversations.

Where AI Breaks the System

An honest section, because P5.express is one of those methodologies where automation can cause methodological harm, not just technical harm.

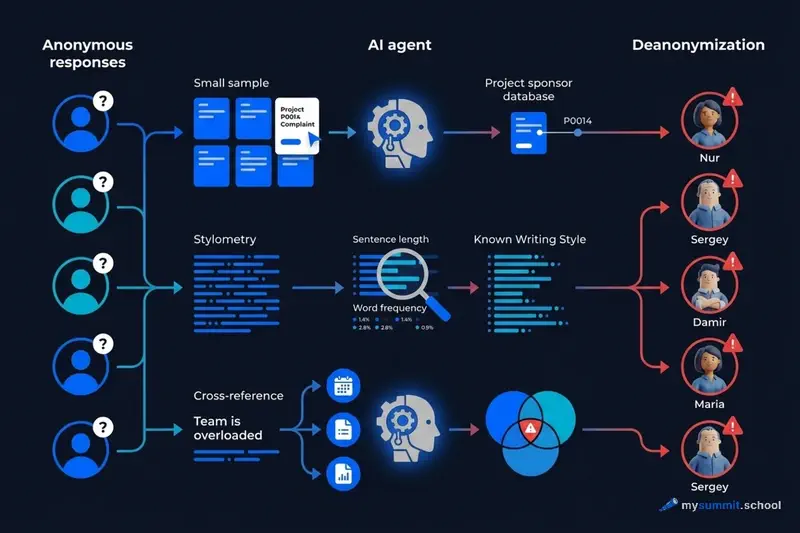

Running Y1 (satisfaction surveys) through AI destroys the data. Y1 consists of anonymous stakeholder satisfaction surveys. Anonymity is the precondition for honesty. The problem is that an agent breaks anonymity in several ways, even if you didn’t intend it.

The first is de-anonymization through small sample size. A portfolio typically has 5–8 stakeholders. If a respondent writes about delays in project P0014 and the agent knows that P0014’s sponsor is Nur, the response is no longer anonymous. A human analyst might notice this too, but the agent does it systematically across all responses simultaneously.

The second is stylometry. LLMs can pick up individual writing patterns: sentence length, characteristic vocabulary, argument structure. In a group of six people where the agent has read each person’s work correspondence, matching an anonymous response to its author becomes feasible. The respondent doesn’t even need to mention a project – writing in their usual style is enough.

The third is cross-referencing with context. The portfolio manager’s agent has access to registers, correspondence, meeting minutes. An anonymous response saying “timelines are unrealistic, the team is overloaded,” combined with resource utilization data from Z3, points to a specific manager. The agent isn’t malicious – it simply connects dots, because that’s what it’s designed to do.

The result: stakeholders will quickly realize that anonymity is nominal and start giving socially desirable answers. Y1 data becomes useless. Satisfaction surveys are one of the parts of P5.express that must remain entirely human: collection, aggregation, analysis.

Mechanical optimization of the Value Generation Matrix. The P5.express manual warns explicitly: “the matrix can never be accurate enough to be optimized mechanically.” Value = benefits / investment is an estimative construct, not a precise number. If the agent sorts the matrix by Value and suggests “launch projects from the top, reject from the bottom” – it creates false precision. The board starts trusting the ranking instead of discussing it. This destroys the core purpose of collegial decision-making.
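A small worked example shows why the ranking is softer than it looks. The ±30% estimation error and the project figures are assumptions chosen purely for illustration; the manual does not specify an error margin.

```python
def value_range(benefits, investment, uncertainty=0.3):
    """Lowest and highest plausible Value if both estimates can be off by +/- uncertainty."""
    low = benefits * (1 - uncertainty) / (investment * (1 + uncertainty))
    high = benefits * (1 + uncertainty) / (investment * (1 - uncertainty))
    return low, high


def ranges_overlap(a, b):
    """True if two (low, high) intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical projects: point estimates give Value 1.5 and 1.3.
project_a = value_range(benefits=150.0, investment=100.0)
project_b = value_range(benefits=130.0, investment=100.0)

print(f"A: {project_a[0]:.2f} to {project_a[1]:.2f}")
print(f"B: {project_b[0]:.2f} to {project_b[1]:.2f}")
print("Ranges overlap:", ranges_overlap(project_a, project_b))
```

On paper A outranks B, 1.5 to 1.3. With the assumed error margin, the two ranges overlap almost entirely, so the data cannot say which project is actually better. That is the conversation the board needs to have.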

Adding data fields for the agent’s benefit. P5.express contains an explicit anti-bloat principle: don’t collect data you don’t use. If you add 15 new fields to the matrix for the sake of better analytics, the system becomes more complex, more expensive to maintain, and the minimalism – the very reason you chose it – disappears. The agent should work with the data that already exists.

Practical Checklist: What to Try This Week

If you’re running a portfolio with P5.express or thinking about starting – here’s a concrete first step for each cycle.

Z-cycle (daily): Try giving the agent two or three Follow-Up Registers (risks, issues, change requests) from active projects and ask it to find recurring themes. This is your first touch of Z1 (follow-up monitoring) – and a good test of whether your registers are machine-readable at all.

Y-cycle (monthly): Before your next monthly project evaluation (Y2), export progress data from your management system into a file and ask the agent to propose updated matrix rows. Notice where it makes mistakes – that shows you where the data has gaps.

X-cycle (semi-annually): Before your next semi-annual workshop (X2), ask the agent to formulate 10 arguments against your current portfolio – which projects could have been skipped, why the opportunity cost is high. This isn’t an agenda; it’s a tool for a better conversation.

All three tasks can be run in any chat – ChatGPT, Kimi, DeepSeek – by copying data from your system. A coding agent (OpenCode or similar) is needed when you want to automate the cycle: the agent reads files on its own, runs on a schedule, and proposes updates without manual copy-pasting. The OpenCode article covers three tasks for day one. The agentic approach to data analysis is covered in more detail in a separate case study with three files.

If P5.express feels too high-level, the article on the operational rhythm of a manager working with AI takes a closer look: it covers specific weekly tasks.

Working with multiple tools at once looks simple. The difficulty appears when you need not a one-off analysis but a reproducible process – an agent that runs on a schedule, knows the structure of your files, and produces a consistent format. The difference between “played with an agent” and “integrated an agent into my workflow” comes down to the skill of task formulation and knowing the boundaries of autonomy.

Which Model to Choose for These Tasks

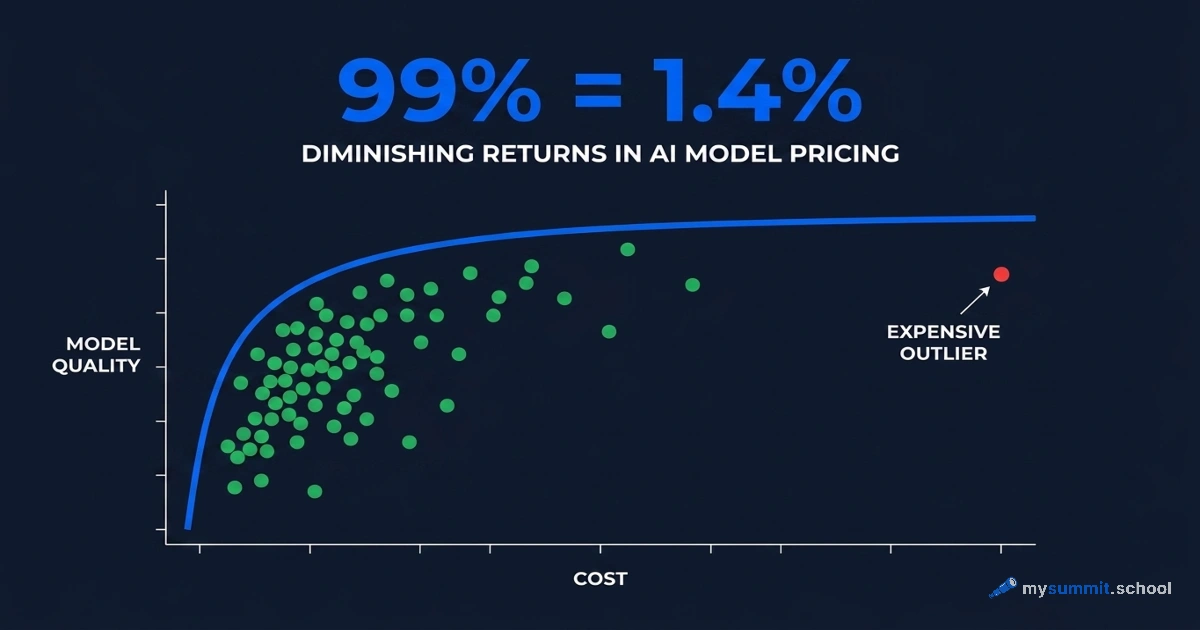

The agentic tasks in P5.express are primarily structured work: reading files, comparing data, drafting communications, asking questions by template. This doesn’t require maximum intelligence, but it does require reliability and strong performance with tables and documents.

Based on our benchmark of 54 models for management tasks, here’s the picture.

Top tier: Claude Sonnet 4.5 leads in the “Analysis & Decision-Making” and “Planning” categories with a score of 4.84. For red team business case tasks and X2 workshop preparation, it’s a strong choice. If your organization has access to the Anthropic API, this is the best option for complex analytical work.

Strong alternatives: Kimi K2.5 scored 4.67 in our benchmark – the top-ranking model among those available without regional restrictions. For daily and monthly cycle tasks, it’s more than sufficient. Qwen3.5 Plus at 4.57 is cheaper ($0.26 per million input tokens vs. $0.45 for Kimi) and handles these tasks well. DeepSeek V3.2 at the same price point performs reliably with tabular data.
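To put the price difference in perspective, here is a back-of-the-envelope sketch. The two input prices come from the paragraph above; the token volume per run and the number of runs per month are invented assumptions, and output-token prices are left out because they aren’t given here.

```python
# Input prices in USD per million tokens, as quoted above.
PRICE_PER_MILLION_INPUT_TOKENS = {
    "Qwen3.5 Plus": 0.26,
    "Kimi K2.5": 0.45,
}


def monthly_input_cost(tokens_per_run, runs_per_month, price_per_million):
    """Input-token cost per month in USD; output tokens are not included."""
    return tokens_per_run * runs_per_month / 1_000_000 * price_per_million


# Assumed workload: 200,000 input tokens per run, 25 runs per month.
for model, price in PRICE_PER_MILLION_INPUT_TOKENS.items():
    cost = monthly_input_cost(200_000, 25, price)
    print(f"{model}: ${cost:.2f} per month (input tokens only)")
```

At this assumed volume both options cost a few dollars a month on input tokens, so for portfolio-sized workloads reliability with tables is likely to matter more than price.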

The practical takeaway: for daily monitoring (Z1) and monthly project evaluation (Y2) – drafts for review, working with tables – Qwen3.5 Plus or DeepSeek is enough. For the semi-annual workshop (X2), red team, and complex analysis – Kimi K2.5. Claude via a coding agent, if you have access, is the best option for complex tasks.

Coding agents like OpenCode or Claude Code fit both scenarios. OpenCode in particular is vendor-neutral and connects to many providers, while Claude Code is built primarily around Anthropic’s models. For a manager, a vendor-neutral agent is a practical advantage: you pick the best available model for each task type without locking into a single vendor.

Conclusion

P5.express is designed in a way that makes automation work asymmetrically. Where you need data processing and a reproducible process, the agent reduces friction and makes cycles realistic. Where collegial process and human contact matter, automation destroys exactly what the methodology exists for.

Surprisingly, a minimalist system turns out to be a good filter for evaluating agentic tools: if the task sounds like “collect data, compare with last time, propose a draft” – the agent fits. If the task sounds like “talk to people and help them reach a decision” – it doesn’t.

That’s a useful question for any other methodology, too.

From Experiment to System

Agentic tasks like the ones analyzed here require skill – not just tools. The course foundation covers prompt engineering and critical thinking with AI. The Project Management specialization breaks down AI in the manager's operational rhythm: from business cases to monthly cycles.

Stanislav Belyaev

Engineering Leader at Microsoft. 18 years leading engineering teams. Founder of mysummit.school. 700+ graduates at Yandex Practicum and Stratoplan.