What if AI Succeeds Too Well? Breaking Down the Citrini 2028 Scenario

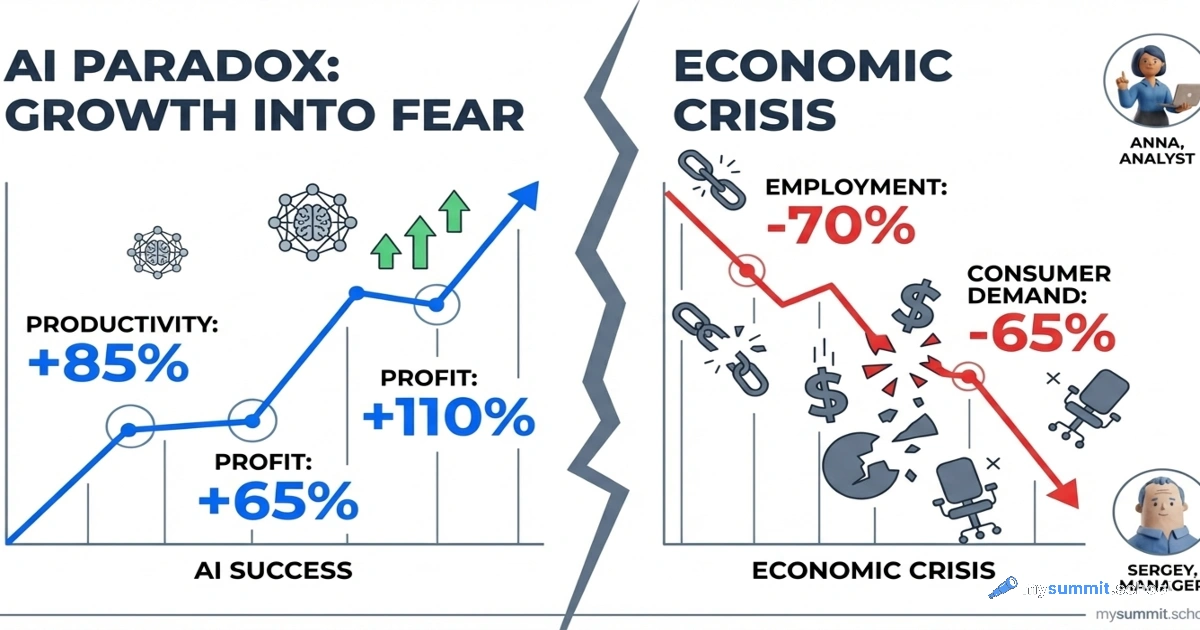

In February 2026, the investment research newsletter Citrini Research published a scenario that turns the usual bear case on its head. Bears typically predict that AI will underdeliver. Citrini asks a different question: what if AI delivers on every promise, and that is exactly what causes the problem?

Their piece, "The 2028 Global Intelligence Crisis," is a fictional memo dated June 2028. It is not a forecast but a stress test: what happens to the economy if machine intelligence really does replace white-collar workers as fast as its developers claim?
