Qwen by Alibaba in 2026: Free Open-Source AI for Business

While managers pay for ChatGPT Plus and Claude Pro, Alibaba has quietly built one of the most powerful — and free — AI ecosystems in the world. Qwen (pronounced “chwen”, from 通义千问 – “A Thousand Questions”) had, by March 2026, surpassed all Western competitors in download counts and become a tool every manager should know — especially if cost or data control has ever been on the agenda.

The core appeal of Qwen for managers is simple: it’s a full-featured AI assistant available for free through Qwen Chat, with no subscription and no request limits. By April 2026, Qwen model downloads had approached 1 billion, and the family accounted for over 50% of all open-source model downloads worldwide. Qwen’s consumer app reached 203 million monthly active users, ranking third globally after ChatGPT and Doubao.

What makes Qwen different?

The key differentiator from most competitors is openness and scale. While OpenAI and Anthropic keep their flagship models closed, Alibaba publishes model weights openly on Hugging Face. This means any company can download a model and run it on its own servers, so no data leaves the company perimeter. Products built on Qwen as a foundation include GigaChat from Sber and Oylan, Kazakhstan’s national language model.

- All major Qwen models are available for download and local deployment. This is critical for companies with strict data security requirements — finance, healthcare, public sector.

- Flagship models operate on a specialization principle: each request is processed by only the relevant “knowledge segment”, not the entire model. In practice, a laptop-sized model delivers response quality that previously required server hardware costing hundreds of thousands.

- The Qwen3 text model series supports 119 languages and dialects; newer versions support 201 languages. Russia alone accounts for around 30% of Qwen platform traffic.

- Text, images, video, audio, speech synthesis — all in one ecosystem.
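The "specialization principle" mentioned in the list above is usually implemented as mixture-of-experts routing: a small router scores every expert, and only the best few run for each token. The following is a minimal sketch in plain Python. The expert count, the `top_k` value, and the scores are illustrative assumptions, not Qwen's actual configuration.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route(router_scores, top_k=2):
    """Pick the top_k experts for one token and renormalize their weights.

    router_scores: one raw score per expert (hypothetical values).
    Returns (expert_index, weight) pairs; every other expert is skipped.
    """
    probs = softmax(router_scores)
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    chosen = ranked[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]

# Toy example: 8 experts, only 2 of them run for this token.
scores = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]
selected = route(scores, top_k=2)
active_fraction = len(selected) / len(scores)
print(selected, active_fraction)
```

Because only the selected experts do any work, compute per token scales with the active share of the model rather than its total size, which is the effect the article describes.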

What Qwen does in April 2026

The Qwen lineup today covers nearly every type of task a modern company faces:

- Long-document work. The flagship Qwen3.6-Plus holds up to 1 million tokens in memory — around 2,000 A4 pages per request. You can load an entire annual report, a customer support archive, or a full code repository and query the whole thing at once.

- Complex reasoning. Qwen3-Max-Thinking unrolls step-by-step reasoning for financial analysis, legal questions, and logic verification. It leads Chinese labs on instruction-following accuracy and nearly doubled its score on one of the toughest reasoning benchmarks (Humanity’s Last Exam).

- Agentic coding. Qwen3.6-35B-A3B plugs into Claude Code, Qwen Code, and similar tools and solves tasks at the level of Claude Sonnet 4.5 at a fraction of the cost. Open license — deployable inside the company.

- Voice and video in one model. Qwen3.5-Omni natively understands text, images, audio, and video within a single architecture. It powers “Audio-Visual Vibe Coding” — describe a task in voice and show your screen on video, and the model writes the code.

- Image and speech generation. Qwen-Image-2512 produces photorealistic images with accurate multi-line text rendering — rare among generative models. Qwen3-TTS synthesizes speech with voice cloning for narration and voice assistants.

- Top-tier among open models. Qwen3.5-Max-Preview scores 1464 on LM Arena, placing first among Chinese models and sixth globally. Qwen3.5-397B-A17B is the third-best open-source model in the world, comparable to GPT-5 and Claude Opus.

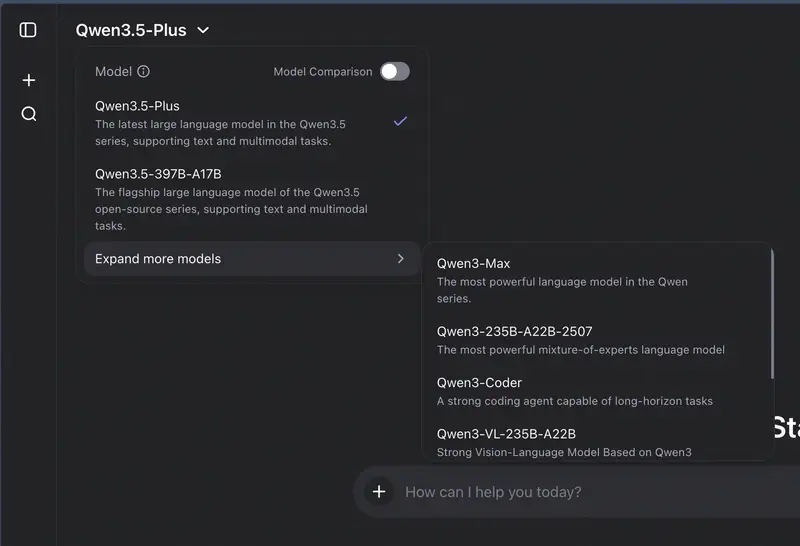

Core Qwen Models (April 2026)

By 2026, Alibaba has assembled one of the most extensive model lineups in the industry.

Qwen3.6-Plus – New flagship with 1M token context

Released in April 2026 as a response to Gemini 2.5 and GPT-5. The hybrid architecture combines linear attention and a sparse mixture of experts — enabling work with massive documents without sacrificing speed.

- Context up to 1 million tokens — around 2,000 A4 pages per request. Load an entire code repository, an annual report, or a customer support database.

- 78.8% on SWE-bench Verified — near the level of closed flagship models on programming tasks.

- Audio-Visual Vibe Coding: describe a task in voice and show the mockup on camera — the model generates the code.

- Apache 2.0 license — weights downloadable for local deployment.
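The page estimate above implies a simple rule of thumb: 1 million tokens for about 2,000 A4 pages is roughly 500 tokens per page. Here is a small sketch of that arithmetic. The ratio comes from the article's own figures, and the 128,000-token window in the example is a hypothetical value chosen only for illustration.

```python
# Ratio implied by the article: 1,000,000 tokens ~ 2,000 A4 pages.
TOKENS_PER_PAGE = 1_000_000 // 2_000  # 500; a rough rule of thumb, not a measured value

def pages_for_context(context_tokens: int) -> int:
    """Approximate how many A4 pages fit into a context window."""
    return context_tokens // TOKENS_PER_PAGE

def fits_in_context(pages: int, context_tokens: int) -> bool:
    """Check whether a document of the given length fits in one request."""
    return pages * TOKENS_PER_PAGE <= context_tokens

print(pages_for_context(1_000_000))   # 2000
print(fits_in_context(300, 128_000))  # False: 300 pages need ~150,000 tokens
```

Real token counts vary with language, layout, and tables, so it is worth measuring a sample document with the model's tokenizer before relying on the estimate.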

Qwen3.5 – Multimodal model family

Released in March 2026, available on Microsoft Azure. The key difference: these models “understand” text, images, audio, and video as a unified whole within a single architecture. A single prompt can simultaneously reference an uploaded document, a screenshot, a video clip, and text context.

The series spans an unprecedented range — from 0.8B parameters (smartphone-runnable) to 397B (comparable to GPT and Claude):

- Qwen3.5-4B / 9B – the smartest models in their weight classes; they run on a laptop, and even on a phone, and outperform models 2–3x larger.

- Qwen3.5-27B – strict instruction-following: structured reports, form completion, multi-step tasks.

- Qwen3.5-35B-A3B – balanced quality and speed: 35B parameters, only 3B active.

- Qwen3.5-122B-A10B – complex analytics and large information volumes.

- Qwen3.5-397B-A17B – open-source flagship; on the GDPval-AA benchmark, outperforms its predecessor Qwen3-235B by 361 points. Third-best open-source model in the world.
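The model names in this list encode their size: the article notes that Qwen3.5-35B-A3B has 35B parameters with only 3B active. The sketch below reads both numbers from a name. It assumes the `-A<n>B` suffix always denotes active parameters, which is how the names above appear to work. The helper `parse_sizes` is hypothetical, not part of any Qwen tooling.

```python
import re

# Matches a trailing "-<total>B" with an optional "-A<active>B" suffix.
NAME_PATTERN = re.compile(r"-(\d+(?:\.\d+)?)B(?:-A(\d+(?:\.\d+)?)B)?$")

def parse_sizes(model_name: str):
    """Return (total_billions, active_billions) parsed from a model name.

    Names without an "-A<n>B" suffix are treated as dense: all parameters active.
    """
    match = NAME_PATTERN.search(model_name)
    if match is None:
        raise ValueError(f"no size suffix in {model_name!r}")
    total = float(match.group(1))
    active = float(match.group(2)) if match.group(2) else total
    return total, active

for name in ["Qwen3.5-27B", "Qwen3.5-35B-A3B", "Qwen3.5-122B-A10B", "Qwen3.5-397B-A17B"]:
    total, active = parse_sizes(name)
    print(f"{name}: {active / total:.0%} of parameters active per token")
```

The active share is a rough proxy for speed and compute cost per token, while the total still determines how much memory is needed to hold the model.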

Shared across the series:

- Around 400 pages of A4 per request; context can be extended to a small book equivalent.

- Two modes — “Think” (step-by-step reasoning for complex tasks) and “No Think” (instant answers for routine) — switchable within a single model without switching services.

- Support for 201 languages.

- Competes with leading multimodal models on independent benchmarks at significantly lower cost.

Mode switching is one of the key innovations in Qwen3/3.5. No need to choose between a “smart” and a “fast” model: the same model adapts to task complexity. Deep analysis mode for a financial report. Instant answer for “when’s the meeting?”
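As an illustration of mode switching, the sketch below picks a mode per question and appends a tag to the prompt. The Qwen3 documentation describes `/think` and `/no_think` soft switches in the user message. Whether a particular Qwen3.5 deployment honors the same tags is an assumption to verify, and the keyword heuristic is entirely hypothetical.

```python
REASONING_HINTS = ("analyze", "compare", "forecast", "calculate", "why")  # hypothetical keyword list

def needs_deep_reasoning(question: str) -> bool:
    """Crude heuristic: long or analytical questions get the "Think" mode."""
    text = question.lower()
    return len(text.split()) > 30 or any(hint in text for hint in REASONING_HINTS)

def build_messages(question: str) -> list:
    """Build a chat message list with a mode tag appended to the user turn."""
    tag = "/think" if needs_deep_reasoning(question) else "/no_think"
    return [{"role": "user", "content": f"{question} {tag}"}]

print(build_messages("When's the meeting?"))
print(build_messages("Analyze the Q3 financial report and forecast cash flow."))
```

In practice the choice is often left to the user or set per workflow; the point is that both kinds of request go to the same model.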

Qwen3-235B – Flagship open-source text model

Released in April 2025, trained on twice the data of the previous generation. Specialized architecture achieves flagship quality at significantly lower compute costs — noticeable when working via API.

- Model weights are open: any company can download the model and run it on its own servers. Serious server hardware is required.

- The model “thinks” before answering — checking logic step by step. Important for complex calculations, legal analysis, financial planning.

- Qwen3-Max — cloud version via API for those who prefer not to manage their own installation.

- Up to 200 pages of A4 per request; up to 400 pages in extended mode.

Qwen3-30B – Optimal for corporate server deployment

An enterprise-class model: processes requests quickly and cheaply despite its large size. Outperforms older models that required three times the resources.

- For teams that need a powerful assistant on their own servers without data center costs.

- Up to 200 pages of A4 per request.

Qwen3-Coder-Next – Developer model (February 2026)

Specialized model for writing and reviewing code. Outperforms DeepSeek models on independent benchmarks, with results comparable to Claude Sonnet 4.5.

For managers, this is likely a tool for the development team rather than direct use: the model can hold an entire project in memory — not just a single file, but the full repository. Local deployment requires approximately 51 GB of RAM.

Qwen3-VL – Image and document analysis

Understands images, video (over an hour long), PDF documents, and handwritten text. Text recognition works in 32 languages: upload a contract scan or a photo of a whiteboard after a meeting and get structured text back.

Qwen3.5-Omni – Native multimodality

Text, images, audio, and video are processed by a single architecture, without separate encoders. Best-in-class results on 215 audio and audio-visual understanding tasks. Supports real-time voice input and output, built-in web search, and external tool calls. It powers the Audio-Visual Vibe Coding scenario: a developer describes the requirements in voice and shows the interface on video — the model writes the code.

Qwen3-TTS and Qwen-Image-2512 – Voice and images

Qwen3-TTS (open license) — speech synthesis with voice cloning and streaming generation for voice assistants and narration. Qwen-Image-2512 — an updated image generation model with photorealistic detail and accurate text rendering: multi-line headlines, paragraphs, branded elements — the same areas where Midjourney and DALL-E typically fall short.

Models for local deployment

Alibaba offers a range of compact models that can be run on your own hardware — from a laptop to a corporate server:

| Where to run | Model | Max document | Best for |
|---|---|---|---|
| Smartphone or tablet | Qwen3-0.6B | ~50 pages | Autocomplete, simple prompts |
| Regular laptop (4 GB RAM) | Qwen3-1.7B | ~50 pages | Email drafts, summarization |
| Powerful laptop (8 GB RAM) | Qwen3-4B | ~50 pages | Document analysis, quality of old top models |
| Workstation (16 GB RAM) | Qwen3-8B | ~200 pages | Complex documents, tables, long dialogues |
| Department server (32 GB RAM) | Qwen3-14B | ~200 pages | Full team assistant |
| Corporate server (48 GB RAM) | Qwen3-32B | ~200 pages | Quality close to top cloud models |
| Server cluster (140+ GB) | Qwen3-235B | ~400 pages | Flagship quality, competes with GPT and Claude |
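The table above can be turned into a simple lookup for a first conversation with IT. This is a minimal sketch that copies the RAM figures from the table. The smartphone row lists no RAM figure and is left out, and the helper name `largest_model_for_ram` is hypothetical.

```python
# (minimum RAM in GB, model) pairs copied from the table above, smallest first.
LOCAL_MODELS = [
    (4, "Qwen3-1.7B"),
    (8, "Qwen3-4B"),
    (16, "Qwen3-8B"),
    (32, "Qwen3-14B"),
    (48, "Qwen3-32B"),
    (140, "Qwen3-235B"),
]

def largest_model_for_ram(ram_gb: float):
    """Return the largest listed model whose RAM requirement fits, or None."""
    best = None
    for min_ram, model in LOCAL_MODELS:
        if ram_gb >= min_ram:
            best = model
    return best

print(largest_model_for_ram(16))  # Qwen3-8B
print(largest_model_for_ram(2))   # None
```

The figures are the article's guidance rather than hard limits: actual memory use depends on quantization and on how long the documents are.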

If your company cannot send data to external servers — finance, healthcare, public sector — you can download the model and deploy it inside your corporate network. Data never leaves your perimeter. This is impossible with ChatGPT or Claude.

Qwen3-4B fits on a laptop (8 GB RAM in the table above) and demonstrates quality comparable to a model that previously required a full server rack. This is one of the clearest examples of how rapidly open model efficiency is advancing.

A practical guide to choosing hardware, installing Ollama, and comparing local models against cloud alternatives is available in the local LLM guide for managers.
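To show what local deployment looks like once Ollama is installed, here is a minimal sketch that talks to Ollama's local HTTP API using only the Python standard library. It assumes a server running on the default port and a pulled model tagged `qwen3:4b`. Both the tag and the helper names are assumptions, so check what is actually installed on your machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint

def build_request(prompt: str, model: str = "qwen3:4b") -> urllib.request.Request:
    """Prepare a non-streaming chat request for a locally running Ollama server."""
    payload = {
        "model": model,  # assumed tag; run `ollama list` to see installed models
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask_local(prompt: str, model: str = "qwen3:4b") -> str:
    """Send the prompt to the local server and return the reply text."""
    with urllib.request.urlopen(build_request(prompt, model), timeout=120) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["message"]["content"]
```

With the server running, `ask_local("Summarize this meeting note in three bullets.")` returns the model's answer. The request goes to `localhost`, so the text stays on the machine.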

How Qwen compares to competitors

The flagship Qwen3-235B competes with DeepSeek-R1, o1, Grok-3, and Gemini 2.5 Pro on independent benchmarks. For everyday business tasks, mid-tier models deliver results on par with the best cloud services.

| Criterion | Qwen (open-source) | ChatGPT / Claude | DeepSeek |
|---|---|---|---|
| Flagship quality | Top-tier (competitor to GPT and Claude) | Top-tier | Top-tier |
| Free web access | Yes, chat.qwen.ai unlimited | Limited | Limited |
| Self-hostable | Yes, open code | No | Partially |
| API cost | Lowest in class | Medium–high | Low |
| Image and video support | Yes (Qwen3.5 series) | Yes | Limited |
| Language quality | Good across 200+ languages | Excellent | Good |

For 90% of business tasks — writing, data analysis, document summarization — you won’t notice a difference between Qwen and paid competitors, at 5–10x lower cost.

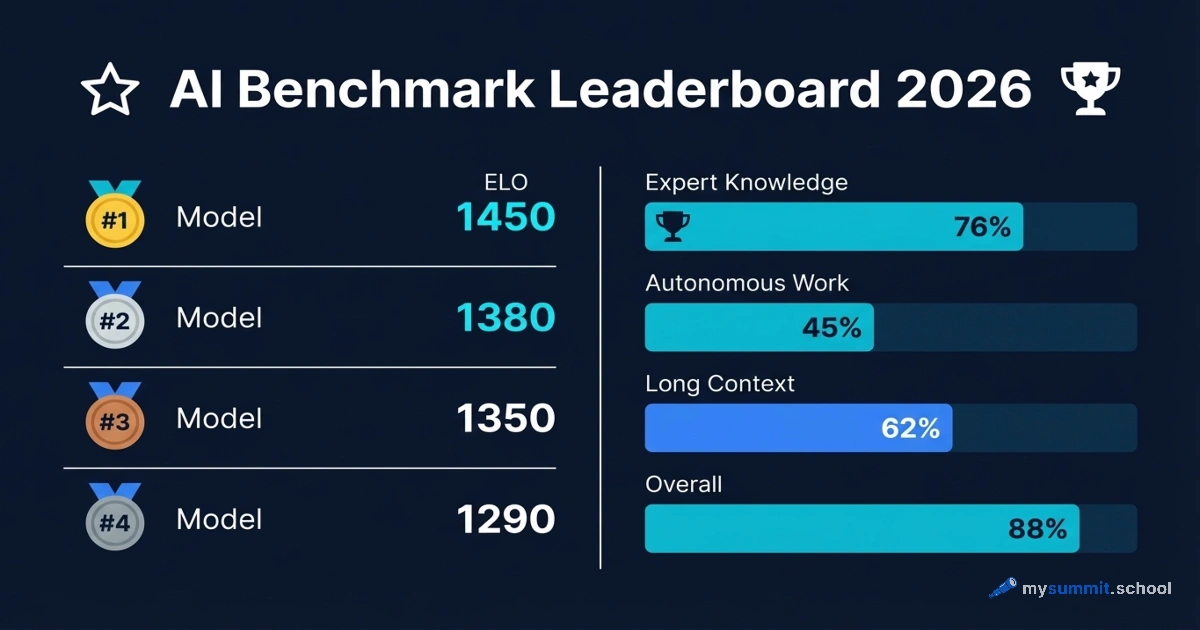

Benchmark Results

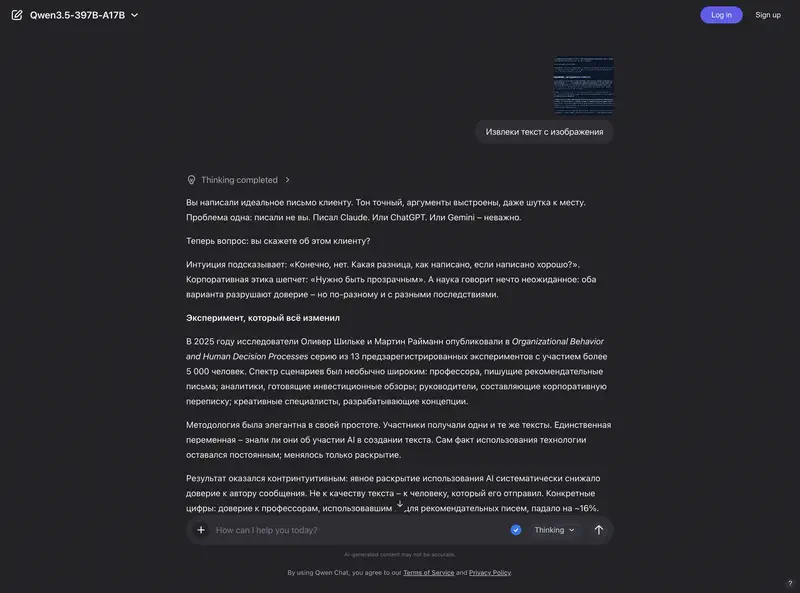

We tested Qwen models on real-world management tasks – analysis, decision-making, planning, and team communication – alongside ChatGPT, Claude, DeepSeek, and dozens of other models.

Qwen3.5 Plus landed in the strong upper tier – one of the best free models we tested. It performed particularly well in analysis, decision-making, and planning tasks. The one consistent weakness: a tendency to default to “politically safe” answers, hedging where a more opinionated response would be more useful. Qwen3.5 397B delivered nearly identical quality.

Qwen3 Max showed strong results in analysis and planning, with solid performance in team management scenarios as well.

The surprise of the lineup was Qwen3.5 9B – a model that runs on a regular laptop with 8 GB of RAM, yet outperformed many larger models in our tests. For anyone who needs a capable AI assistant where data never leaves the machine, this is a genuinely unique option.

Conversely, Qwen3 235B was surprisingly weak for its size. The cloud-optimised Plus variant consistently outperformed it – a clear reminder that parameter count does not equal quality.

For managers looking for a free, open-source alternative to ChatGPT or Claude – particularly for analytical and planning work – Qwen is a serious contender.

Practical applications for managers

Most managers who try Qwen start with Qwen Chat — a free web interface with access to current models. It supports web search, image generation, and document analysis without any subscription. A good entry point for those who want to evaluate the tool before talking to IT.

Qwen handles multilingual documents well: contract scans, handwritten notes, whiteboard photos from meetings. Upload a photo — get structured text. Recognition works in 32 languages with quality notably better than many Western tools for non-English content.

A separate use case is corporate deployment without the cloud. For companies prohibited from sending data outside their perimeter, Qwen offers something unavailable with ChatGPT or Claude: models can be downloaded and run on your own servers. This is the same logic that sovereign AI systems use — data control matters more than cloud convenience.

Finally, Qwen3.5-Omni and Qwen3-TTS enable building a voice assistant in any supported language: speech recognition, request processing, and speech synthesis all come from one ecosystem, without connecting multiple separate services.

Limitations and risks

- Censorship and filters: Like all Chinese models, Qwen has built-in restrictions on certain political and historical topics. Rarely a problem for business tasks.

- Geopolitical risk: The model belongs to Alibaba (China). For some organizations, this may be a constraint when using the cloud API. Solution: local deployment of open-source versions.

- Language quality variance: Despite support for 200+ languages, quality can vary by language. Test on your specific use cases.

- Integrations: Ready-made connectors to Western services — Slack, Notion, Google Workspace — are far fewer than for ChatGPT or Claude. Qwen is better integrated with Chinese enterprise tools.

Pricing and availability

| Option | Cost | Best for |
|---|---|---|
| chat.qwen.ai | Free | Try Qwen without API registration |
| Qwen3-0.6B / 4B (download) | Free | Laptop deployment, full data control |
| Qwen3-235B (download) | Free | Flagship locally, server cluster required |
| Qwen3.6-Plus (preview) | Free during preview | 1M token context, agentic scenarios |
| Qwen3.5-Plus (API) | $0.4 / $2.4 per 1M input/output tokens | Regular tasks, cost-quality balance |
| Qwen3.5-397B-A17B (API) | $0.39 / $2.34 per 1M input/output tokens | Open-source flagship |
| Qwen3-Max-Thinking (API) | from $1.2 / $6 per 1M tokens | Complex reasoning, long context up to 256K |

For comparison: equivalent analysis of a 100-page report via GPT-4o costs approximately $0.35; via Claude Sonnet, around $0.55. Qwen-Plus is 7–10x cheaper at comparable quality for most tasks.
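The per-token prices in the table translate into a per-request cost with simple arithmetic. This sketch uses the listed prices. The 500 tokens per page (from "1M tokens, around 2,000 A4 pages") and the 2,000-token summary length are assumptions chosen for illustration, so the output is an estimate rather than a quote.

```python
# Prices per 1M tokens (input, output) in USD, copied from the pricing table.
PRICES = {
    "Qwen3.5-Plus": (0.40, 2.40),
    "Qwen3.5-397B-A17B": (0.39, 2.34),
    "Qwen3-Max-Thinking": (1.20, 6.00),  # listed as "from" prices
}

TOKENS_PER_PAGE = 500  # assumption derived from "1M tokens ~ 2,000 A4 pages"

def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one request at the listed per-million-token prices."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000

def report_cost(model: str, pages: int, summary_tokens: int = 2_000) -> float:
    """Estimate the cost of summarizing a report of the given page count."""
    return request_cost(model, pages * TOKENS_PER_PAGE, summary_tokens)

for model in PRICES:
    print(f"{model}: ${report_cost(model, pages=100):.4f}")
```

Output length matters more than it looks: output tokens cost several times more than input tokens, and step-by-step reasoning modes can produce many of them.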

Qwen offers a path to start free on chat.qwen.ai, scale via cheap API when needed, and fully switch to local deployment under strict security requirements. No Western competitor offers all three options simultaneously.

Is it worth trying?

The paradox of Qwen is that the most compelling argument in its favor is not response quality. Most top models today deliver comparable results on typical tasks, and the difference is not obvious in daily work.

The compelling argument is structural flexibility. Qwen is the only major tool where a manager can choose any of three entry points: free chat without registration, cloud API at 7–10x lower cost than Western alternatives, or fully private on-premises deployment. And you can move between these options as needs grow — without changing tools.

This raises a question: why do most managers still pay for expensive subscriptions when an equivalent is available free with better data control?



Sources

- Qwen Chat – free web interface – Alibaba’s official chat, works without registration

- Official Qwen website – documentation and model descriptions

- Qwen models on Hugging Face – 433 open models for download, 300M+ downloads

- Qwen on GitHub – source code and documentation, 40+ repositories

- API via Alibaba Cloud Model Studio – cloud access to Qwen-Plus and Qwen-Max

Stanislav Belyaev

Engineering Leader at Microsoft. 18 years leading engineering teams. Founder of mysummit.school. 700+ graduates at Yandex Practicum and Stratoplan.