When AI Hurts Learning – and When It Doubles Results

In March 2025 at SXSW EDU, strategic foresight advisor Sinead Bovell delivered a talk on AI and the future of education. No hype and no panic, just two studies that change how you should think about AI’s role in learning.

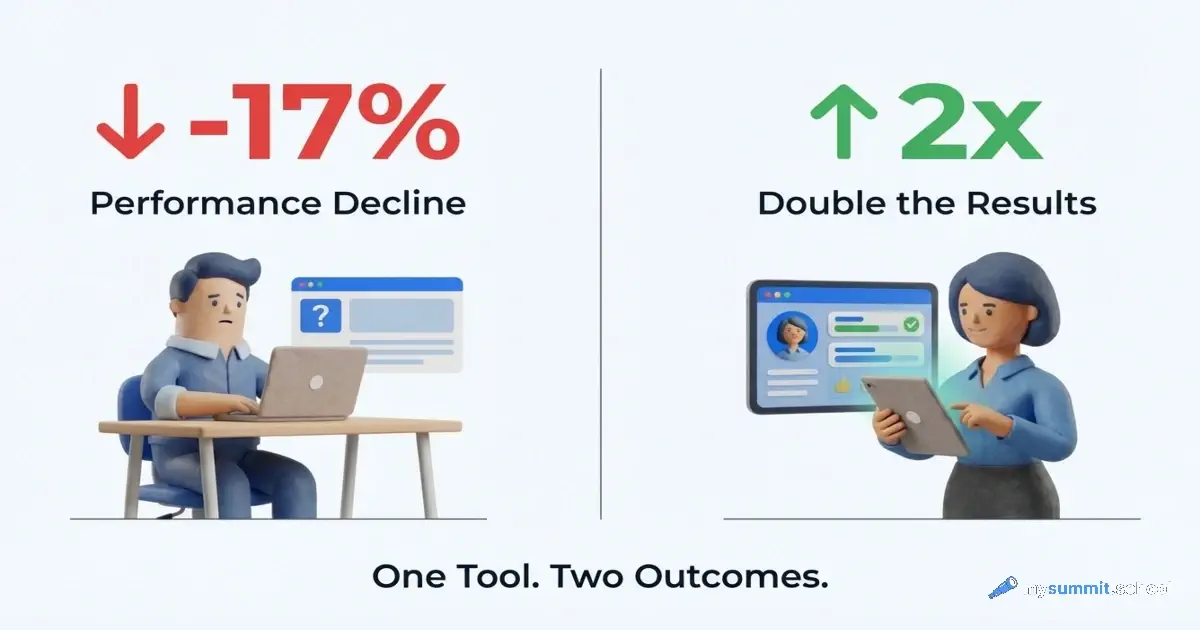

First: a group of students who used ChatGPT without restrictions scored 17% worse than a control group working from a textbook. Second: in a separate study, students who used AI within a fully redesigned instructional system outperformed a traditional lecture group by a factor of two.

Same tool. Opposite outcomes. The difference is in the approach.

The Wharton Experiment: When AI Hurts

Researchers from the Wharton School at the University of Pennsylvania, working alongside educators at the British International School of Budapest, split students into three groups. The control group used a textbook. The “GPT-base” group used ChatGPT without restrictions. The “GPT-tutor” group worked with a guided AI tutor.

During practice, the results looked like an obvious win for AI: the GPT-base group performed 48% better than the control on practice problems, and the GPT-tutor group performed 127% better.

Then AI was removed. Students were asked to take a final test on their own.

The GPT-base group scored 17% worse than the students who had used a textbook. The GPT-tutor group matched the control – no better, no worse.
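The percentages are easy to misread, so here is a small sketch that puts them on one scale. It assumes, purely for illustration, that the control group’s score is indexed to 100 in each phase; the reported figures are changes relative to that control, not raw scores from the study.

```python
# Relative changes versus the textbook control group, as reported above.
# Scores are indexed so that the control group = 100 in each phase
# (an illustrative normalization, not raw test scores from the study).
REPORTED_CHANGE = {
    "practice": {"control": 0.0, "GPT-base": 0.48, "GPT-tutor": 1.27},
    "final test (no AI)": {"control": 0.0, "GPT-base": -0.17, "GPT-tutor": 0.0},
}


def indexed_scores(changes: dict[str, float], baseline: float = 100.0) -> dict[str, float]:
    """Convert relative changes into scores on a control-indexed scale."""
    return {group: round(baseline * (1 + delta), 1) for group, delta in changes.items()}


if __name__ == "__main__":
    for phase, changes in REPORTED_CHANGE.items():
        print(phase, indexed_scores(changes))
```

Because each phase is indexed to its own control score, the numbers compare groups within a phase, not across phases. Read that way, GPT-base swung from 48 points above the control to 17 points below it, while GPT-tutor’s large practice lead shrank to zero.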

This isn’t an isolated pedagogical quirk. According to Bovell, the finding generalizes: unrestricted generative AI undermines learning. Students were getting correct answers while bypassing the cognitive work that builds understanding. When the AI was taken away, there was no understanding left.

The study passed peer review and was published in PNAS in June 2025, moving it from “interesting experiment” to peer-reviewed evidence.

Smaller-scale experiments show the constructive side of the same pattern. At an underfunded public school in the US, researchers from the University of Illinois configured an AI tool strictly around one teacher’s rubric: six essay evaluation criteria, with materials drawn only from the curriculum. Result: 5 out of 6 students improved their work. The key difference from GPT-base: the AI didn’t give answers; it pointed to exactly what needed to be fixed, against specific criteria. Students described the feedback as “direct” and “fixable”, meaning concrete and actionable.
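The Illinois setup can be illustrated with a small sketch of rubric-bound feedback. Everything here is hypothetical: the six criterion names, the 1–4 scale and the passing threshold are assumptions (the article does not list the teacher’s criteria), and the per-criterion scores are taken as given, whether they come from the AI’s assessment or the teacher’s. What it shows is the design choice: feedback names the criterion and restates its target, but never rewrites the student’s text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    target: str  # what the top band of the rubric asks for


# Hypothetical six-criterion essay rubric; the teacher's real criteria aren't given.
RUBRIC = (
    Criterion("Thesis", "a clear, arguable claim in the introduction"),
    Criterion("Evidence", "each point supported by a quote or fact from the assigned texts"),
    Criterion("Analysis", "an explanation of how each piece of evidence supports the claim"),
    Criterion("Organization", "one idea per paragraph, in a logical order"),
    Criterion("Conventions", "grammar and spelling that never obscure meaning"),
    Criterion("Citations", "sources cited in the format the class uses"),
)


def rubric_feedback(scores: dict[str, int], passing: int = 3, top: int = 4) -> list[str]:
    """Return one 'what to fix' note per criterion scored below `passing`.

    Notes name the criterion and restate its target; they contain no rewritten
    text, so the revision itself is left to the student.
    """
    notes = []
    for criterion in RUBRIC:
        score = scores[criterion.name]  # every criterion must be scored
        if score < passing:
            notes.append(f"{criterion.name} ({score}/{top}). Target: {criterion.target}.")
    return notes


if __name__ == "__main__":
    essay_scores = {"Thesis": 4, "Evidence": 2, "Analysis": 3,
                    "Organization": 4, "Conventions": 3, "Citations": 1}
    for note in rubric_feedback(essay_scores):
        print(note)
```

With scores of 2 on Evidence and 1 on Citations, the student gets exactly two notes, one per weak criterion, and still has to do the revising.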

The Harvard Experiment: When AI Works Twice as Well

The second half of Bovell’s talk focused on a Harvard physics study that points in exactly the opposite direction. The AI group outperformed the traditional lecture group by a factor of two.

Surprisingly, there was no magic prompt or special model involved. The difference was this: the entire learning system was rebuilt for the AI group. Students worked at their own pace, received immediate feedback at every step, and the system adapted to their knowledge gaps in real time.

This wasn’t “adding AI to existing lessons.” The entire process was redesigned around what the tool makes possible. The sample was 194 students: enough for preliminary conclusions, but not final ones.

Bovell states the key finding directly: if you simply embed AI into an existing process without changing its underlying logic, you’ll most likely end up with the first scenario. If you redesign the system, you have a chance at the second.

Alpha School: Two Hours Instead of Six

One of the most provocative examples in the talk is Alpha School. There, children spend two hours a day with an AI tutor on core academic skills. The rest of the time goes to life skills, emotional intelligence, projects, and social interaction.

The reported result: 99th-percentile academic performance. Some students cover in two hours what would take 5–10 hours in a traditional school.

The mechanism is the same as in the Harvard study: adaptive, immediate feedback at the student’s own pace. Students don’t wait for a teacher to review their notebook. The system catches an error the moment it happens and brings the student back to the right place – not next week, but within seconds.
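The mechanism described here, immediate feedback plus routing a student back to the skill they are actually missing, can be sketched as a mastery-based practice loop. This is a hypothetical illustration, not the algorithm used by Alpha School or the Harvard tutor: the class name, the prerequisite map and the “three correct in a row” mastery rule are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class AdaptiveTutor:
    """Hypothetical mastery-based practice loop (illustrative only).

    `prerequisites` maps each skill to the skills it builds on (assumed acyclic).
    A skill counts as mastered after `streak_needed` correct answers in a row.
    """

    prerequisites: dict[str, list[str]]
    streak_needed: int = 3
    streaks: dict[str, int] = field(default_factory=dict)

    def mastered(self, skill: str) -> bool:
        return self.streaks.get(skill, 0) >= self.streak_needed

    def next_skill(self, goal: str) -> str:
        """Walk prerequisites depth-first; return the first unmastered one, else the goal."""
        for pre in self.prerequisites.get(goal, []):
            if not self.mastered(pre):
                return self.next_skill(pre)
        return goal

    def record(self, skill: str, correct: bool) -> str:
        """Grade one answer immediately, update mastery, and return feedback."""
        if correct:
            self.streaks[skill] = self.streaks.get(skill, 0) + 1
            return f"Correct ({self.streaks[skill]}/{self.streak_needed} in a row on {skill})."
        # A mistake resets this skill's streak...
        self.streaks[skill] = 0
        prereqs = self.prerequisites.get(skill, [])
        # ...and caps each prerequisite's streak below mastery, so it is re-checked next.
        for pre in prereqs:
            self.streaks[pre] = min(self.streaks.get(pre, 0), self.streak_needed - 1)
        if prereqs:
            return f"Not quite. Let's re-check {', '.join(prereqs)} first."
        return f"Not quite. Try another {skill} problem."


if __name__ == "__main__":
    tutor = AdaptiveTutor({"fractions": ["division"], "division": ["multiplication"]})
    for _ in range(3):
        tutor.record("multiplication", True)
        tutor.record("division", True)
    print(tutor.next_skill("fractions"))  # fractions
    print(tutor.record("fractions", False))
    print(tutor.next_skill("fractions"))  # division
```

In this sketch an error on fractions reopens division, so the next exercise is a division problem; one more correct answer there restores mastery and routes the student back to fractions. Real systems use richer mastery models, but the loop has the same shape: check each answer at once, update the model, pick the next step.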

The skeptical question is obvious: what about knowledge the student gained “with AI” but can’t reproduce without it? Bovell doesn’t sidestep this. Her answer: the line isn’t between “used AI” and “didn’t use AI” – it’s between “thought” and “didn’t think.” A guided tutor that asks questions and demands explanations keeps thinking in the process. A tool that simply delivers answers kills it.

The Cheating Crisis and the Confidence Crisis

Bovell describes two phenomena that seem like opposites but are actually two sides of the same problem.

The first: once the school day ends at 3 PM, students write their essays with ChatGPT, and this has become the norm. The numbers back it up: 86% of US students use AI for schoolwork, while 70% of teachers see a decline in critical thinking. Bovell calls it “the safest assumption”: if an assignment was done at home, it was probably done with AI. The answer isn’t trying to catch violators (that’s a race teachers will always lose) but flipping the logic: deep learning and assessment happen in the classroom, research with AI happens at home, and unannounced knowledge checks become more frequent, in settings where no external tools are available.

The second phenomenon is more unexpected. Students replace their handwritten essays with ChatGPT output – even when it doesn’t save them time. Not out of laziness. Out of self-doubt. They don’t trust their own thinking.

This is a more serious problem than cheating. The Microsoft Research study Bovell cites suggests that over-reliance on AI is associated with weaker critical thinking. People stop exercising judgment – and gradually lose faith in it.

Entrepreneurs and Thinking: Who Benefits from AI

One of the most uncomfortable findings in the talk comes from an entrepreneurship study. High-performing entrepreneurs with strong critical thinking skills saw significantly improved results when working with AI. Low-performing entrepreneurs saw worse results.

The difference wasn’t the model. It was the quality of questions asked and what was done with the answers.

A high-performing entrepreneur arrives at AI with a strategic problem already structured in their mind. They know what they want to test, which assumptions to challenge, what data is missing. AI accelerates their thinking. A low-performing entrepreneur arrives with a vague request – and accepts the first answer, without knowing whether the right question was even asked.

This invites some reflection on what’s often called “the democratization of AI.” The tool is genuinely available to everyone. But it amplifies what’s already there. If critical thinking is present, it gets stronger. If it isn’t, AI creates the illusion of competence without the substance.

The Most Important Future Skills Have Nothing to Do with Technology

This is probably Bovell’s most unexpected point. Despite her subject matter – strategic AI foresight – she talks about skills that have nothing to do with technology.

Critical thinking. Reading. Play. Long-term thinking. The ability to work across disciplines.

She makes the last point concrete: today’s children will hold roughly 17 jobs across 5 different industries over their careers. The one skill that carries across 17 jobs and 5 industries is the ability to switch between domains and see connections that a narrow specialist misses.

But why do these skills feature in a talk about AI? Because they’re exactly what determines whether you end up in the “AI amplifies” category or the “AI harms” category. The Wharton experiment isn’t about ChatGPT. It’s about what happens when a tool replaces thinking instead of supporting it.

The most practical observation: most of these skills are free to develop. Read more books. Play strategy games. Discuss decisions out loud. Ask yourself uncomfortable questions. These aren’t new educational technologies – they’re old practices being crowded out by scrolling and AI-outsourced thinking.

Three Levels of Response

Bovell describes three levels at which education needs to respond to AI.

The first level is safe integration. Children need to understand what AI is, how it works, what it can and can’t do. Not “here’s a tool,” but “here’s what’s happening under the hood and why you can’t blindly trust the output.”

The second level is adapting practices. If children are known to use AI after school, what happens in the classroom needs to change. That means deciding which uses of AI are acceptable and which aren’t, and building those rules into the learning process rather than banning AI by decree.

The third level is fundamental redesign. Not “add AI to an existing curriculum,” but rethink the entire process from scratch. The Harvard experiment was at this level, and this is what produces doubled results instead of a 17% drop.

Bovell is explicit about one thing: the third level isn’t a task for teachers. It belongs to governments, education system leaders, and curriculum designers. Teachers already serve as social workers, psychologists, and mentors on top of their primary role. Adding systemic pedagogical reinvention on top of that means shifting institutional responsibility onto someone with neither the time nor the authority to carry it.

How real is this problem in practice? Microsoft’s 2025 data show that 67% of school principals believe teachers are trained to work with AI, while 57% of teachers say they received no preparation. The gap Bovell’s third level is meant to close isn’t theoretical: it is already here, with no systemic response.

A separate issue is the quality of what AI generates for teachers. An analysis of AI-generated lessons found that 45% are stuck at the “remember” level of Bloom’s taxonomy. Only 4% reach analysis or creation. AI saves teachers time but doesn’t improve what they produce with it. Without an understanding of how AI works, teachers end up with lessons that look polished but stay at workbook depth in cognitive rigor.
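One way a teacher might spot-check their own AI-generated materials against Bloom’s taxonomy is a quick verb scan. The sketch below is a crude keyword heuristic with a small, assumed verb list per level; it is not the method used in the analysis cited above, and it will misclassify objectives whose verbs it doesn’t know.

```python
from collections import Counter

# Small, hand-picked verb lists for the six levels of the revised Bloom's taxonomy.
# Assumed for illustration; real audits use longer lists plus human judgment.
BLOOM_VERBS = {
    "remember": {"define", "list", "name", "recall", "identify", "state"},
    "understand": {"explain", "summarize", "describe", "classify", "paraphrase"},
    "apply": {"solve", "use", "calculate", "demonstrate", "apply"},
    "analyze": {"compare", "contrast", "analyze", "differentiate", "examine"},
    "evaluate": {"justify", "critique", "evaluate", "argue", "assess"},
    "create": {"design", "compose", "construct", "create", "propose"},
}


def bloom_level(objective: str) -> str:
    """Return the level of the first known verb in the objective, or 'unclassified'."""
    for raw in objective.lower().split():
        word = raw.strip(".,:;()")
        for level, verbs in BLOOM_VERBS.items():
            if word in verbs:
                return level
    return "unclassified"


def level_shares(objectives: list[str]) -> dict[str, float]:
    """Fraction of objectives at each level (levels with no objectives are omitted)."""
    if not objectives:
        return {}
    counts = Counter(bloom_level(o) for o in objectives)
    return {level: n / len(objectives) for level, n in counts.items()}


if __name__ == "__main__":
    lesson = [
        "List the three states of matter.",
        "Define evaporation.",
        "Explain why ice floats on water.",
        "Design an experiment to compare evaporation rates.",
    ]
    print(level_shares(lesson))
```

On the sample lesson, half of the objectives land at “remember.” A scan like this only flags candidates for a closer look; deciding whether a lesson actually demands analysis still takes a human reading it.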

Where We Are Now: The 1992 of the Internet

Bovell closes her talk with a metaphor worth sitting with. AI today is roughly the 1992 of the internet. The technology is understood by a small number of people, everyone else is just beginning to explore it, and Google and Amazon haven’t been founded yet.

Electricity is a useful comparison. It didn’t disappear; it simply stopped being a topic of conversation. No one today thinks: “How should I use electricity in my life?” It’s just there – in light bulbs, servers, elevators, phones.

AI is moving toward the same point. That doesn’t mean preparation is pointless. It means that those who learn to work with the tool now, while it’s still visible, gain an advantage precisely because they understand the principles rather than just pressing buttons. Once AI becomes as invisible as electricity, button-pressing will no longer be a competitive advantage.

Someone who understood in the late 1990s how HTTP works and why PageRank treats links as votes had a fundamentally different horizon of opportunity in 2005 – not because they wrote code, but because they understood the logic. The Wharton experiment shows the same is true for AI. Understanding why the tool causes harm in one scenario and helps in another matters more than knowing how to quickly hit “generate.”

What This Means for Educators

If you distill Bovell’s talk and the research findings into concrete actions, you get a short list.

Right now:

- Assume homework is being done with AI. Don’t fight it – move deep learning and assessment into the classroom.

- Add short, unannounced knowledge checks without device access – not for grades, but to see what knowledge is actually there.

- Explain to students how AI works: what hallucinations are, why you can’t trust an answer without verification, that AI is a tool, not a collaborator.

In the medium term:

- If your school deploys an AI tutor, require immediate feedback and adaptation to the student’s pace. Without these two elements, AI is more likely to hurt than help.

- Flip the homework logic: research with AI happens at home; discussion, critical analysis, and defending conclusions happen in the classroom.

Not the teacher’s job:

- Fundamental curriculum redesign belongs to ministries, curriculum designers, and education system architects. Teachers already juggle a dozen roles.