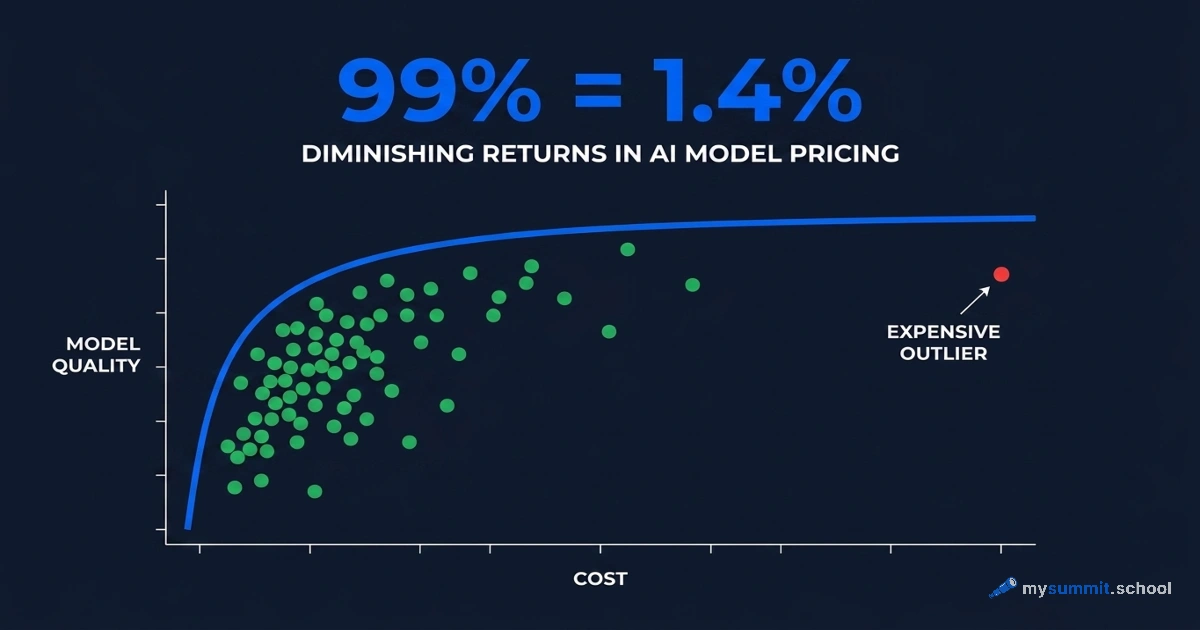

99% Quality at 1.4% of the Price: What's Wrong with the AI Model Market

Most managers pick an AI model the same way: grab the most expensive one available. The logic makes sense – pricier means better. That’s how enterprise software worked for the last twenty years.

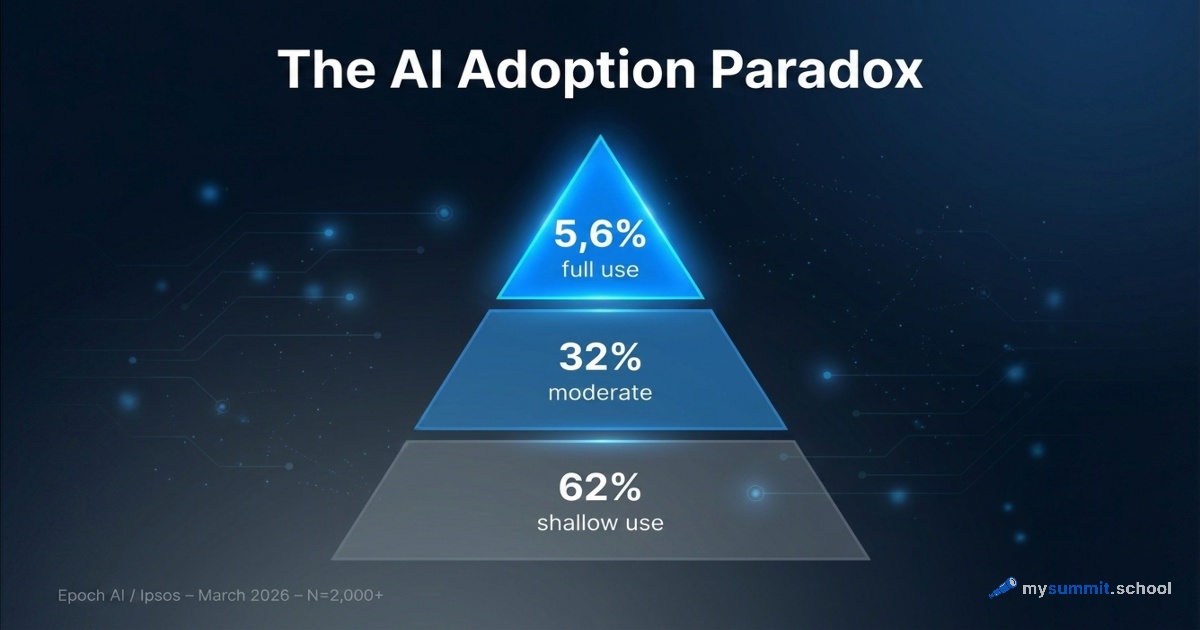

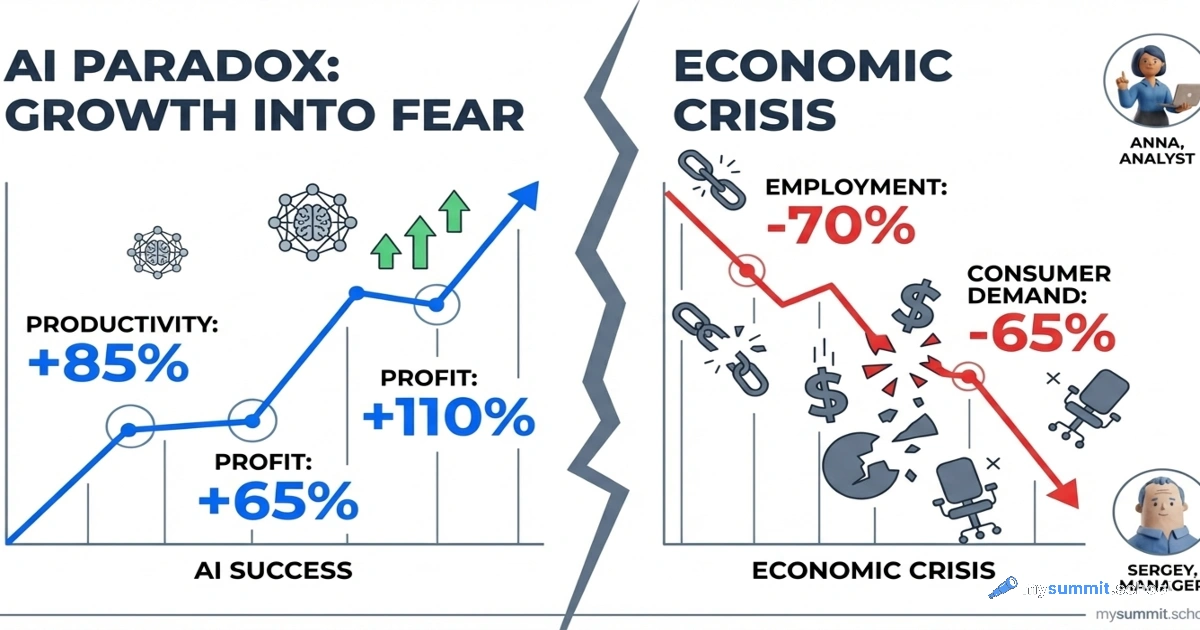

The AI model market in 2026 works differently. The cost per query ranges from $0.0001 to $0.17, a spread of more than three orders of magnitude. And the actual quality difference between the top ten models? 0.24 points on a five-point scale. Meanwhile, Wharton / GBK Collective reports that a third of corporate AI projects never get past the pilot stage. And Epoch AI shows that only 5.6% of users apply AI in any genuinely deep way.

Maybe the question isn’t which model is best, but whether paying a premium delivers proportionally better results for typical management tasks.

We tested it. The answer was harsher than we expected.
