KazLLM and Sovereign AI: A Guide for Kazakhstan's Civil Servants

On 11 February 2026, at a government meeting, President Tokayev publicly criticised KazLLM. The model, launched with great fanfare in December 2024, has just 600,000 users – 3% of the country’s population. For comparison: 2.6 million people in Kazakhstan use ChatGPT. The president was blunt: KazLLM “cannot compete with ChatGPT.”

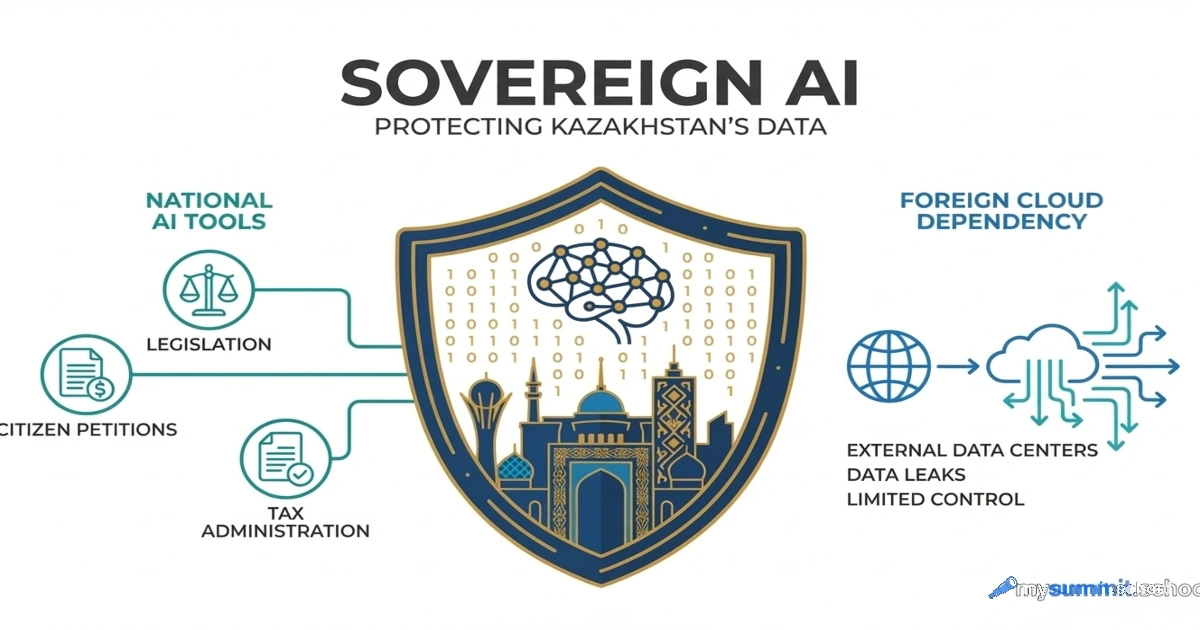

This statement cuts to the heart of the matter. Why does Kazakhstan need its own language model if global solutions work better? And if sovereign AI is necessary – why is it losing?

The answer is more complicated than it seems, because KazLLM is not “Kazakhstan’s ChatGPT.” It’s a fundamentally different tool with a different mission. Comparing them is like comparing a national power plant with an imported household appliance.
