Why Learn AI in a Course When ChatGPT Knows Everything: A Research-Based Analysis

“Why would I need your course when I can just ask ChatGPT?”

This question shows up in the comments under every post about AI education. The logic seems bulletproof: the internet is free, ChatGPT will explain anything, YouTube is overflowing with tutorials. Why pay for a course when all the information is already out there?

It’s a great question. The answer is not the one people expect to hear.

Because there is a chasm between “having access to information” and “knowing how to work with it.” Access to medical textbooks doesn’t make you a doctor. Owning a kitchen knife doesn’t make you a chef. And the ability to ask ChatGPT a question doesn’t make you an AI specialist. As MIT’s research on choice architecture shows, a specialist’s real strength lies not in having the tool, but in the ability to critically evaluate what the tool suggests.

And this isn’t just a neat metaphor – it’s a research-confirmed fact.

The Illusion of Explanatory Depth: Your Brain Is Lying to You

Let’s start with the uncomfortable part. There is a cognitive bias called the Illusion of Explanatory Depth (IOED). The gist: people systematically overestimate how deeply they understand complex things. They believe they grasp a topic far better than they actually do – until someone asks them to explain it without any aids.

A study published by Cambridge University Press found that the illusion only dissipates when a person is forced to explain a concept on their own, without external sources. Until that moment, they are genuinely convinced they understand.

Generative AI makes this problem significantly worse.

When you ask ChatGPT a question about machine learning, you get a perfectly structured, grammatically flawless, syntactically impeccable answer. It reads easily. It’s logical. It “makes sense.” And your brain commits a critical error at that moment: it confuses the fluency of consuming information with actual understanding.

You didn’t learn the topic. You read a well-written text about the topic. These are fundamentally different things.

Research indexed in PubMed confirms this: when learners use LLMs as their primary source of knowledge, they unconsciously replace deep understanding with the ability to get the right answer from a machine. Their sense of competence grows while their actual critical thinking skills atrophy. MIT research documented the same pattern: 86% of students use AI, but half lose their connection with the instructor.

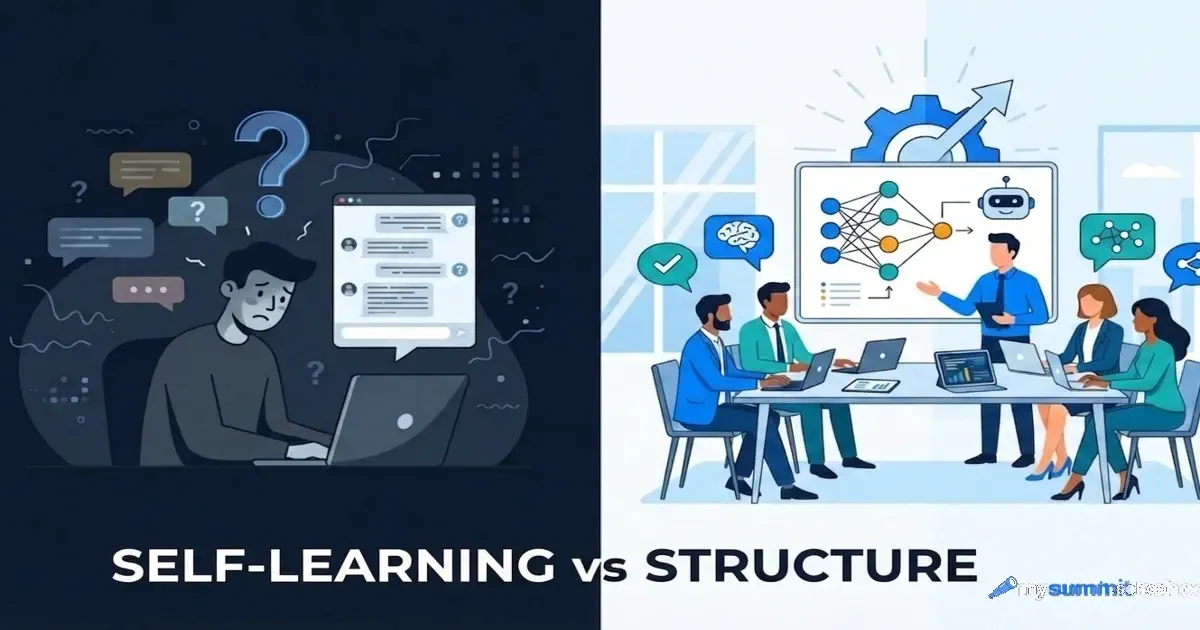

Structured learning fights this head-on. Through iterative assignments, oral assessments, group work, and situations where you can’t “ask the AI,” students are forced to confront the real limits of their understanding. It’s uncomfortable. But that’s precisely what works.

The Dunning-Kruger Effect: The AI Version

If the illusion of depth is about overestimating understanding, the Dunning-Kruger effect is about overestimating ability. People with minimal competence in a field systematically overrate their capabilities, because they lack the expertise to recognize their own mistakes.

In the context of AI, this plays out as follows. A person learns to generate code through ChatGPT. The code compiles, runs, solves the problem. The person concludes they’ve mastered programming. Meanwhile, they don’t notice architectural flaws, security vulnerabilities, scalability issues, and inefficiencies – because detecting those requires the very expertise they lack.

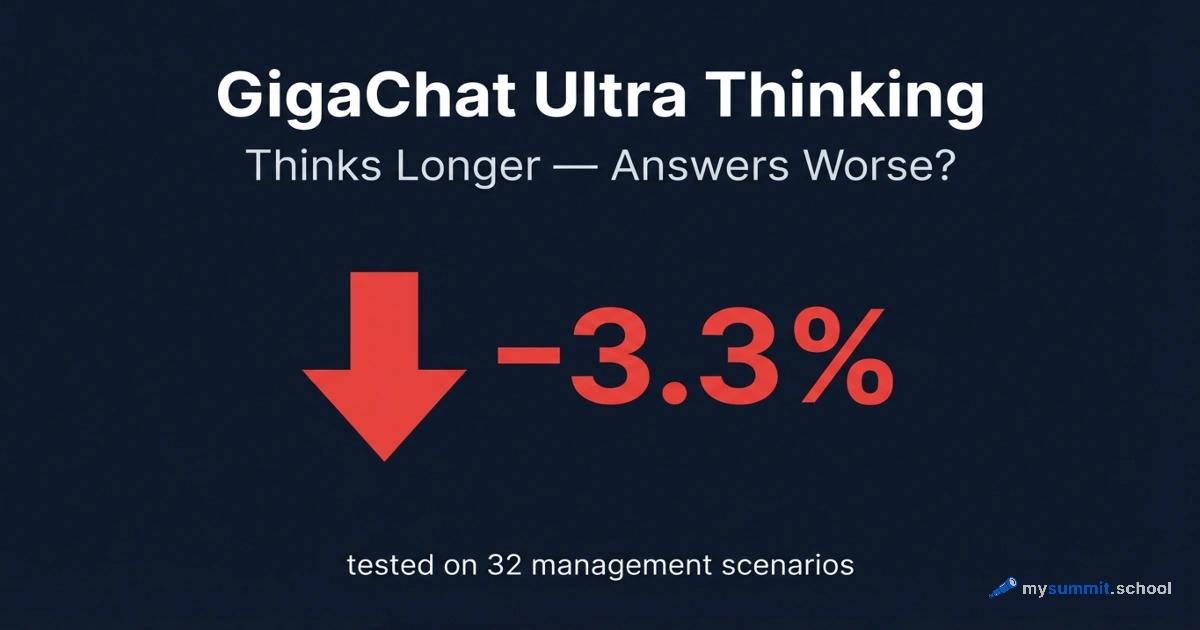

Microsoft Research found that AI models themselves exhibit patterns analogous to the Dunning-Kruger effect: they generate confident answers in areas where their competence is minimal. A self-taught learner relying on such a model as their sole source of knowledge falls into a closed loop: an overconfident student plus an overconfident AI equals a persistent illusion of competence. Anthropic’s research adds that AI systems don’t just make errors; they behave inconsistently, which makes error detection without expertise even harder.

An instructor or mentor breaks this loop. A live expert sees errors you can’t see yourself. They ask questions ChatGPT wouldn’t think to ask. They create situations where your gaps in knowledge become obvious – and that’s precisely when real learning begins.

The Shallow Learning Cascade

Researchers described a phenomenon they called “From Superficial Outputs to Superficial Learning” – a cascade of degradation from shallow AI outputs to shallow student learning. A systematic review of LLM risks in education identified the “LLM-Risk Adapted Learning” model, demonstrating how AI’s technical limitations directly translate into cognitive losses for the learner.

When a self-taught learner relies on the internet and generative AI as their primary learning method, a documented degradation of cognitive processes occurs:

First – reduced neural engagement: the cognitive load involved in gathering and synthesizing information is offloaded to the machine. But that same load is the productive neurological struggle necessary for deep memory encoding and structural brain plasticity.

Second – loss of independent learning skills: the student loses the ability to search, verify, compare, and synthesize contradictory information, relying entirely on opaque AI summarization algorithms.

Third – loss of learning agency: the learning process becomes reactive, with the student following the machine’s conclusions instead of generating hypotheses, asking questions, and conducting their own analysis.

LLMs reduce the cognitive load for completing a task – but they don’t foster the deep engagement with material that’s necessary for high-quality long-term learning. Formal courses address this problem by creating “AI-free” zones: assignments where the machine is prohibited, and the student is forced to think independently.

Why 3–12% Will Finish, and 90% Won’t

Here’s the statistic that self-learning advocates prefer not to mention.

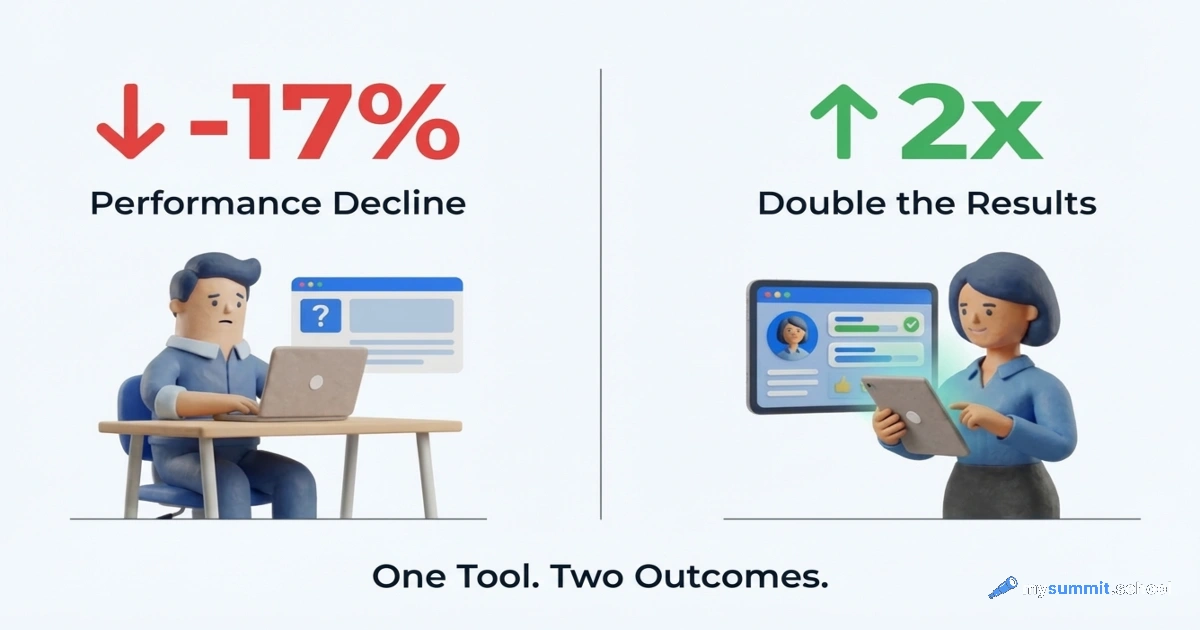

The completion rate for self-paced online courses (MOOCs) ranges from 3% to 12%. This isn’t a bug – it’s a feature of self-directed learning. Without external structure, deadlines, and social pressure, most people quit. Notably, even in a corporate context, 37% of time saved gets lost to fixing AI errors – and those are the numbers for people who’ve already made it past the adoption phase.

Cohort-based programs – bootcamps, courses with fixed schedules and groups – show completion rates above 90%.

The reason is Bandura’s social learning theory and Lave and Wenger’s concept of Communities of Practice. Learning is not an isolated process of data transfer. It’s a social, observational, communal act. Professional identity and deep understanding are formed through interaction, observation, and participation in a community of experts and peers.

A lone student reading documentation on a laptop screen receives exactly zero social learning. They don’t see how other people solve the same problems. They get no feedback from those who’ve already walked that path. They aren’t in an environment where their mistakes are noticed and corrected.

The data points to the scale of the difference: active learning in cohort formats demonstrates knowledge retention 3.2 times higher than that of passive, isolated learners.

Mentorship: The Thing AI Can’t Fake

Generative AI does an excellent job imitating a mentor’s conversational tone. It gives quick feedback, corrects code syntax, explains concepts. At first glance – the perfect mentor.

But research published in ACL Anthology shows the opposite. AI is good at structuring curricula and generating template documents. But when it comes to contextually precise advice in unpredictable, dynamic situations – it is fundamentally inadequate. This is the same conclusion reached by 885 managers in Microsoft’s study on the limits of delegation: accountability cannot be transferred to a non-human actor.

In a study where pre-service teachers used AI for classroom management advice, AI’s recommendations turned out to be “fundamentally flawed and unsuitable in practice.” A live mentor was needed to step in and correct the nuanced decisions that AI cannot generate.

Real mentorship requires what AI fundamentally lacks: trust, empathy, and deep understanding of a person’s context. A mentor shares not just knowledge – they share the experience of failures, non-obvious industry norms, and professional connections. When students begin attributing human qualities like “caring” or “understanding” to AI, that isn’t mentorship – it’s a cognitive distortion that leaves them without real professional support.

The Hidden Curriculum: Ethics You Won’t Get from YouTube

In formal education, there’s a concept called the “hidden curriculum” – an implicit set of values, norms, and perspectives that students absorb alongside the core material. For AI education, this means algorithmic ethics, model bias, data transparency, and accountability for consequences.

A modern AI program pushes students from the question “does this model work?” to the question “should this model be deployed, and who might it harm?”

Self-learners, focused on rapidly acquiring skills for employment, systematically skip these questions. Corporate bootcamps are also frequently criticized for prioritizing rapid human capital development at the expense of critical data analysis and social responsibility.

But it’s precisely the understanding of limitations, bias, and ethical implications that distinguishes a specialist trusted to make decisions from an operator who knows how to press buttons.

The Job Market: What the Numbers Say

Let’s put the question pragmatically: what happens to careers?

The data show that 78–79% of bootcamp graduates find relevant employment within six months, with a median salary increase of about 51%. Self-taught learners can reach a comparable technical level, but the path to a first job is significantly harder because they lack formal credentials and professional connections. According to Wharton’s 2025 study, 82% of executives use AI weekly, but penetration is uneven across industries, and education requirements are growing faster than the average user’s expertise.

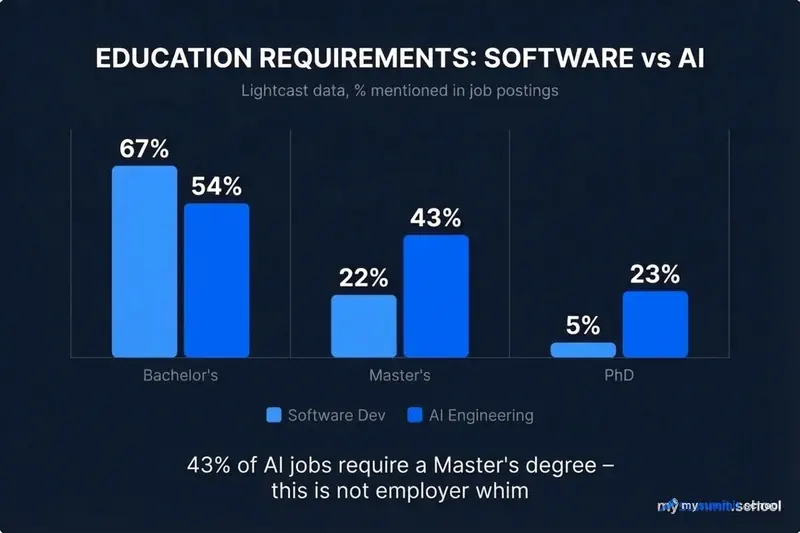

According to Lightcast data, education requirements for AI roles are substantially higher than for standard software development:

| Education Level | Software Development | AI Engineering |
|---|---|---|
| Bachelor’s | 67% of postings | 54% of postings |
| Master’s | 22% of postings | 43% of postings |
| PhD | 5% of postings | 23% of postings |

Note: 43% of AI positions require a Master’s degree, and 23% require a PhD. This isn’t employer caprice. It reflects the genuine complexity of the field.

And there’s another dimension. In an era when AI can generate a portfolio, write code, and pass a remote test – employers are losing the ability to distinguish a self-taught learner’s portfolio from the output of a prompt engineer who’s simply good at formulating queries. Formal education with proctored exams remains the only reliable guarantee that the knowledge actually belongs to the person.


So Why Take a Course?

Let’s return to the original question. “Why pay for education when everything is available online?”

Because the internet provides data, not skills. ChatGPT generates answers, not understanding. YouTube shows other people’s solutions, not your thinking.

Structured learning delivers four things that are impossible to obtain on your own:

A cognitive framework – a sequential structure that manages the complexity of the material, forces you through productive struggle, and won’t let you skip the fundamentals in favor of trendy tools.

Social learning – an environment where you learn not only from the instructor but from the group: through feedback, comparing approaches, and observing how other people think about the same problems.

External calibration – real people who show you the actual level of your understanding without the politeness filter characteristic of AI. It hurts, but it’s the only way to break free from the illusion of competence.

Knowledge verification – in a world where AI can generate any output, formal verification from an organization that has tested your knowledge under controlled conditions becomes not less, but more valuable.

Access to information is not education. It’s simply access to information. The difference lies in what happens to your brain in the process.

And here’s the curious thing: if self-learning with ChatGPT really works just as well as structured courses – why do the people who claim this keep making that claim in comments, rather than demonstrating results at work?

The gap between access and skill is particularly acute in education. In Kazakhstan, 252,000 teachers received AI certificates but acquired no subject-level skills. In Russia, 87% of students use AI, while only 12% of teachers do. A separate breakdown covers 5 AI implementation problems in CIS schools, with data and concrete steps for administrators.



Sources

- Broad effects of shallow understanding: Explaining an unrelated phenomenon exposes the illusion of explanatory depth – Cambridge University Press, IOED study

- Embracing the illusion of explanatory depth: A strategic framework for using iterative prompting for integrating large language models in healthcare education – PubMed, IOED and LLMs in education

- ChatGPT and the Illusion of Explanatory Depth – eScholarship, ChatGPT’s impact on depth of understanding

- Do Code Models Suffer from the Dunning-Kruger Effect? – Microsoft Research, the Dunning-Kruger effect in AI models

- From Superficial Outputs to Superficial Learning: Risks of Large Language Models in Education – arXiv, the shallow learning cascade

- Applications of social theories of learning in health professions education programs: A scoping review – PMC, Bandura’s social learning theory

- Evaluating Generative AI as a Mentor Resource: Bias and Implementation Challenges – ACL Anthology, limitations of AI mentorship

- Mentorship in the Age of Generative AI: ChatGPT to Support Self-Regulated Learning of Pre-Service Teachers – MDPI, AI mentorship in teacher education

- Bootcamp, College, or Self-Taught? Comparing Learning Paths for Career Changers in Tech – SynergisticIT, comparing paths into tech careers

- Artificial Intelligence and the Sustainability of the Signaling and Human Capital Theories – MDPI, AI education requirements based on Lightcast data